Looking Glass 2021

Lens three: Evolving interactions

Consumers aren’t just demanding availability and accessibility; they expect interactions to be seamless and rich. Enterprises can deliver this experience through evolving interfaces that blend speech, touch and visuals.

Scanning the signals

Voice and touch interfaces, along with virtual and augmented reality, continue to evolve at a startling pace. Our expectations have risen accordingly: we’re no longer amazed by voice recognition and AI-based responses on our phones; instead, we’re annoyed when the technology fails or does something unexpected.

Consumers expect to be able to interact with technology in whatever manner fits their current context, and to switch between interaction styles in a way that makes sense. Signals here include:

- Growing adoption of voice in multiple use cases: shopping, ordering food, and booking travel

- VR and AR being used beyond specialized, safety-critical cases such as police or military training

- Large platform players such as Google, Amazon and Microsoft creating new offerings that include interaction technologies

- Apple set to announce its widely rumored “Apple Glass,” an augmented reality device

The opportunity

Consumers want low-friction interactions and often choose services and products accordingly. You’ll need to be ready or risk being shut out by more proactive competitors.

Evolving interactions can also contribute directly to the bottom line. According to IBM, businesses spend $1.3 trillion a year on 265 billion customer service calls, yet chatbots can speed up response times and answer up to 80% of routine questions, freeing agents to focus on higher-value customer service.

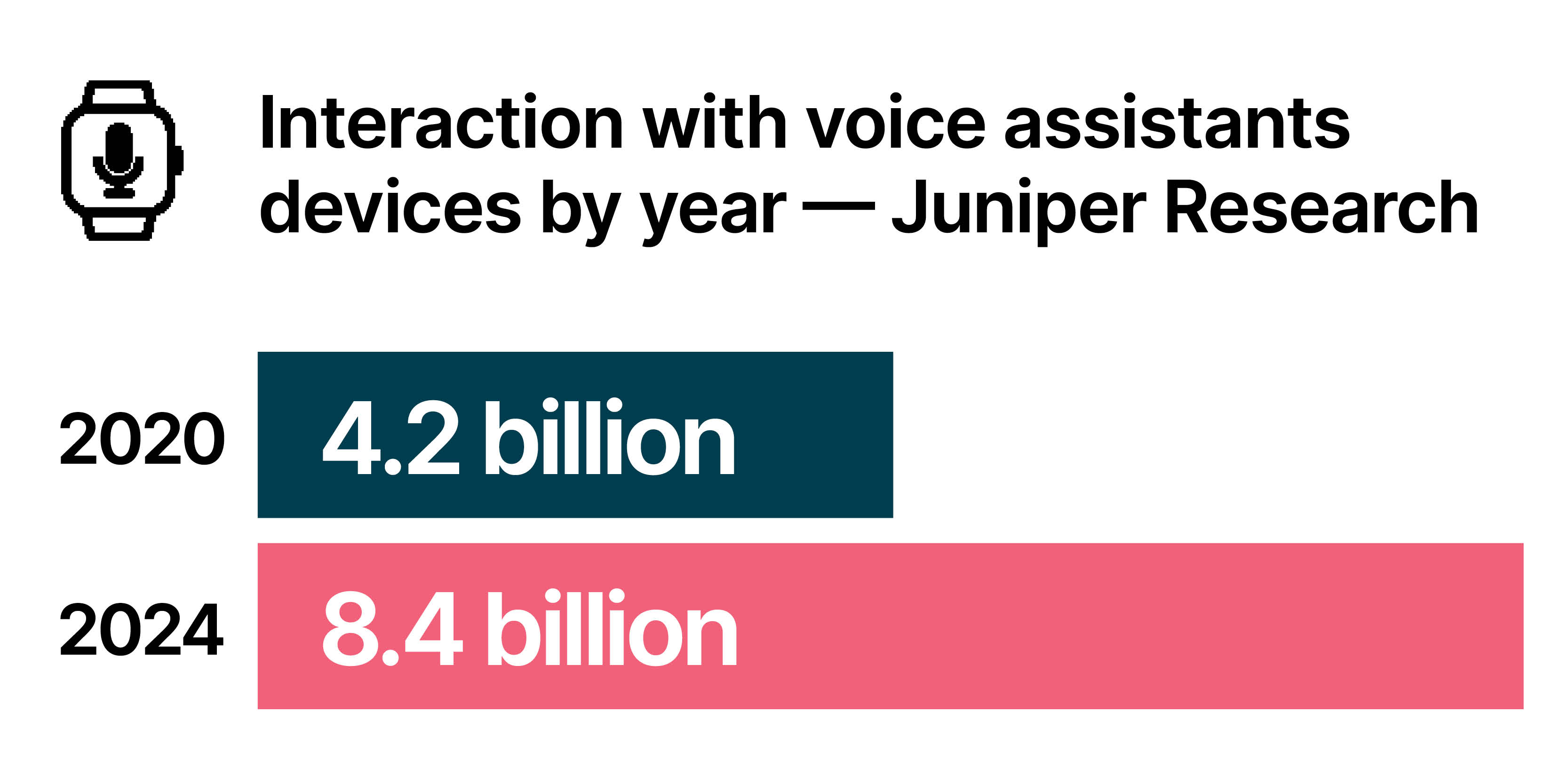

Consumers are eager to use voice interfaces, and they base purchasing decisions for other smart home devices (such as thermostats) on whether those devices work with their existing voice assistants. According to Invesp, voice shopping is expected to reach US$40 billion by 2022. Elsewhere, Juniper Research predicts that consumers will interact with voice assistants on over 8.4 billion devices by 2024.

What we’ve seen

Trends to watch: Top Three

Adopt

Intelligent agents, assistants and bots. The technology behind intelligent assistants and chatbots is developing rapidly. Enterprises should watch this trend carefully, invest in capability building, and start to put this technology to use, as it has clear potential to improve customer satisfaction and accessibility, reduce costs, and support product development. Depending on their market, businesses should be prepared to spend more than they might expect on content and dialog design. It’s important to treat a chatbot as another user experience channel, not a technical feature.

Analyze

Wearables. Devices such as smart watches have become commonplace, but the technology is still expanding rapidly in both feature sets and interactions. For example, the new Apple Watch announced in late 2020 includes technology for measuring blood oxygen levels, a clear response to the COVID-19 crisis. Apple is also reportedly in the later stages of developing its own augmented reality glasses. While it’s still unclear how far along we are in wearables becoming a “must have” rather than a “nice to have,” fitness and medical monitoring are likely to drive that shift.

Anticipate

Gesture recognition. Machine understanding and interpretation of human gestures, such as waving or making “up” or “down” motions, is an ongoing and promising research area, with technology at varying levels of maturity. Leap Motion and Kinect made hand, finger and body tracking relatively useful, but fine-grained hand and finger tracking using only cameras, as in the Oculus Quest, is still developing. In the future, we may be able to approach “natural” gesture recognition, with machines able to recognize gestures such as pinches and finger taps, or two-handed gestures such as resizing, dragging, dropping, or zooming in and out.

Trends to watch: The complete matrix

Technologies that are here today and are being leveraged within the industry

- Enterprise XR

- Smart systems and ecosystems

- Human-machine collaboration

- Intelligent assistants, agents and bots

- Natural language processing

- Increasing role of decentralized workforces

Technologies that are beginning to gain traction, depending on industry and use-case

- Biometrics

- Computer vision

- Voice as a ubiquitous interface

- Facial / expression recognition

- AI-driven interaction

- Mobile AR

- Wearables

- Ambient computing

- Automated workforce

- Touchless interactions

Still lacking in maturity, these technologies could have an impact in a few years

- Digital twin

- Gesture recognition

- Privacy-aware communication

- Personalized medicine

- Accessibility

- Affective (emotional) computing

- Brain-computer interfaces

Advice for adopters

- Monitor opportunities to leverage these technologies. Many are moving from the experimentation phase to the exploitation phase and are attracting heavy investment from big tech companies such as Facebook and Microsoft.

- Invest in the skills to succeed with new interfaces. Many organizations are looking to hire people from industries such as gaming who have already been working with technologies like XR for years. But you might be better served by training your existing development teams, the people who already know your business, products and customers, in the new technologies.

- Bear in mind that consumer expectations are extremely high. If you’re going to offer an interface using voice, gesture, or XR, make sure it works well and delivers a compelling experience.

- Understand that these technologies change the user journey and design process. In XR, for example, design must account for spatial, three-dimensional environments, which has deep implications. It’s not simply about replicating reality; there is significant opportunity for innovative interaction design in these environments.

- Beware of vendor lock-in. When developing with these interfaces, you will often have to choose a vendor (Google, Apple, Amazon, Microsoft, Oculus or others) to take advantage of the acceleration its platform can offer. But intense competition also means vendors are often slow to support ‘rival’ ecosystems, so a platform choice can be hard to reverse.

By 2022, businesses will…

… see greater bottom-line impact from wider adoption of VR/AR technologies. Lower headset costs have made XR cost-effective for industries ranging from manufacturing to healthcare to communications, with uses spanning everything from training doctors to treating patients to reducing time-on-task in industrial settings. You should be exploring how to harness the power of this technology in your ecosystem.