If you’ve tried machine learning or data science, you know that code gets complex quickly. With every experiment, we might write code that adds another moving part. Every new moving part increases complexity and adds one more thing to hold in our heads.

While we cannot, and should not try to, escape the essential complexity of a problem, we often add accidental complexity and unnecessary cognitive load through poor coding habits such as:

- Not having abstractions. When we write all our code in a single Python notebook or script without abstracting it into functions or classes, we force the reader to read many lines of code and figure out the “how” just to find out what the code is doing.
- Long functions that do multiple things. These force us to hold every intermediate data transformation in our heads while working on just one part of the function.
- Not having unit tests. When we refactor, the only way to be sure we haven’t broken anything is to restart the kernel and run the entire notebook(s). We’re forced to take on the complexity of the whole codebase even though we only want to work on one small part of it.
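To make the contrast concrete, here is a minimal sketch of the opposite habits: one small, named function that does a single thing, plus a unit test that checks it in isolation. The function name `fill_missing_ages` and the imputation rule (replace missing values with the mean of the known ones) are hypothetical, chosen only for illustration; they are not taken from any particular project.

```python
from statistics import mean


def fill_missing_ages(ages):
    """Replace None entries with the mean of the known ages.

    Hypothetical example: the name tells the reader *what* happens,
    so they only read the body if they care about *how*.
    """
    known = [age for age in ages if age is not None]
    if not known:
        raise ValueError("need at least one known age to impute")
    fill_value = mean(known)
    return [fill_value if age is None else age for age in ages]


def test_fill_missing_ages_uses_mean_of_known_values():
    # A fast, focused check: no kernel restart, no full notebook run.
    assert fill_missing_ages([20, None, 40]) == [20, 30, 40]


if __name__ == "__main__":
    test_fill_missing_ages_uses_mean_of_known_values()
```

With a test like this in place, we can refactor the body of the function and get feedback in milliseconds, while keeping only this one transformation in our heads.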

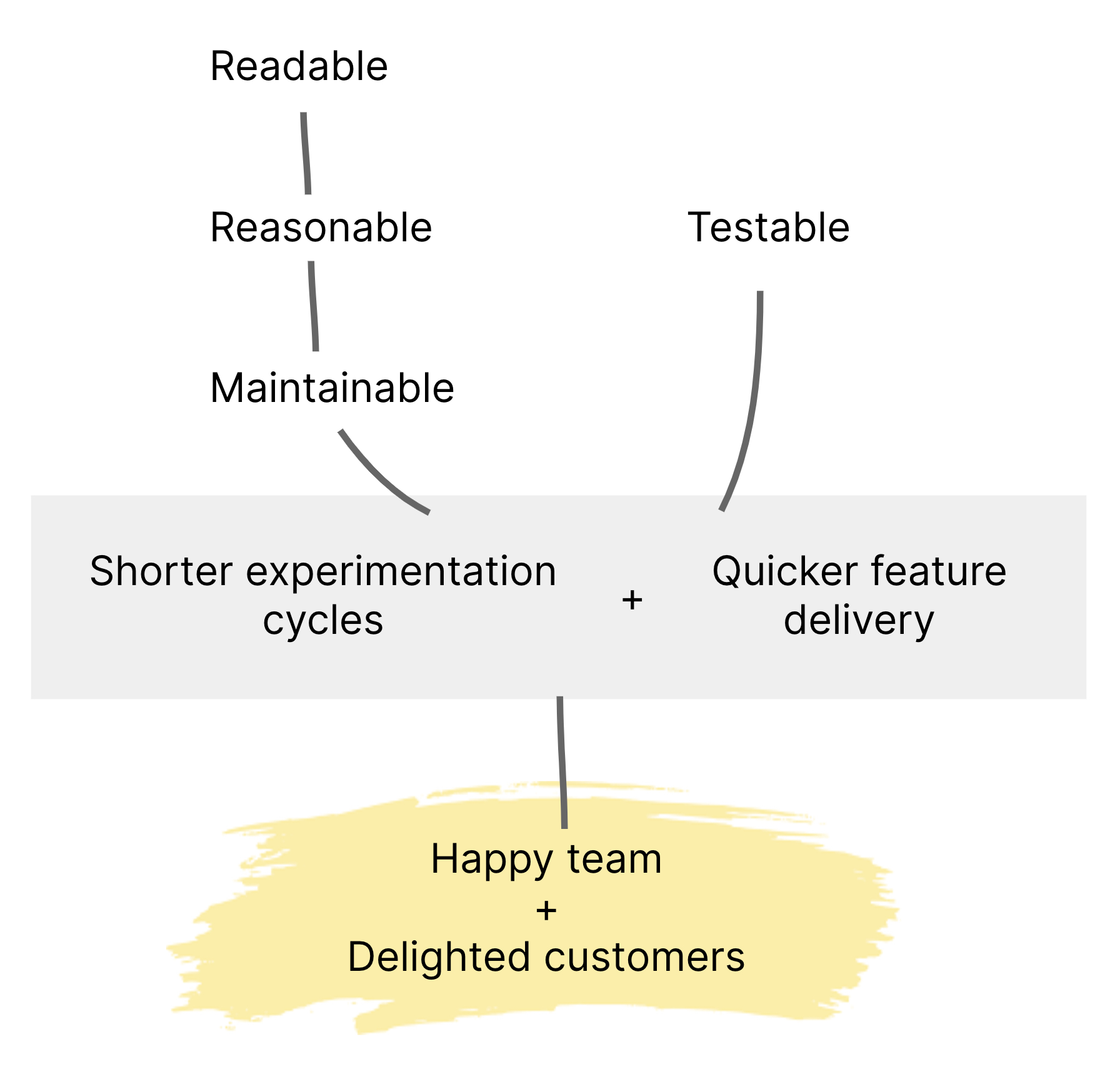

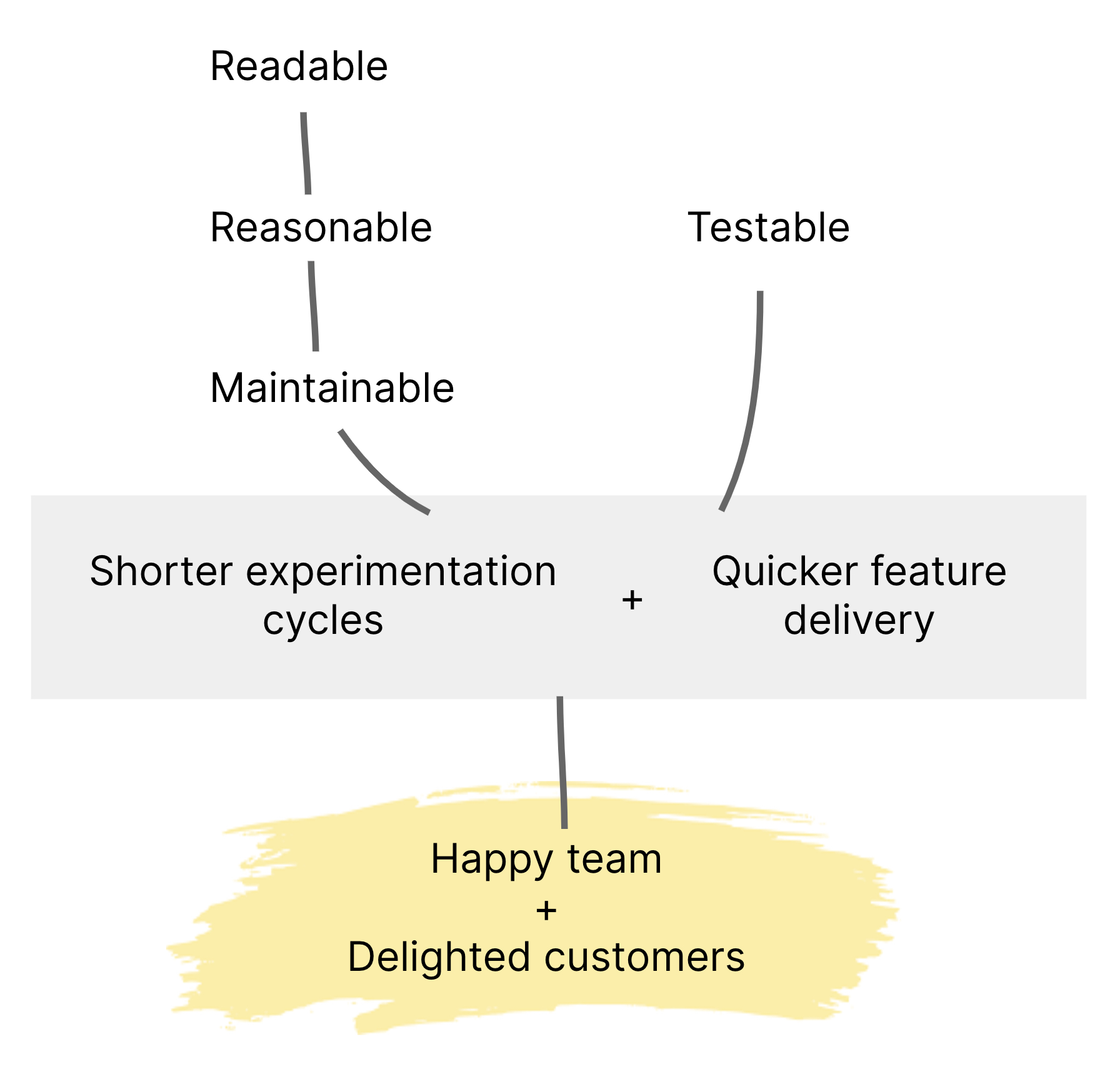

In this article, we share four habits and one refactoring technique from the software development world that have helped us manage complexity in our data science projects. In our experience, they can lead to great delivery outcomes, as depicted in Figure 1.

Figure 1: These coding habits are a means to the ultimate goal of making work fulfilling, by empowering data scientists to work effectively and shipping value to customers more reliably.

If you’re interested in how these practices can be applied in your machine learning or data science projects, get in touch; we’d be happy to chat further.

Disclaimer: The statements and opinions expressed in this article are those of the author(s) and do not necessarily reflect the positions of Thoughtworks.