Testing

Guidelines for Structuring Automated Tests

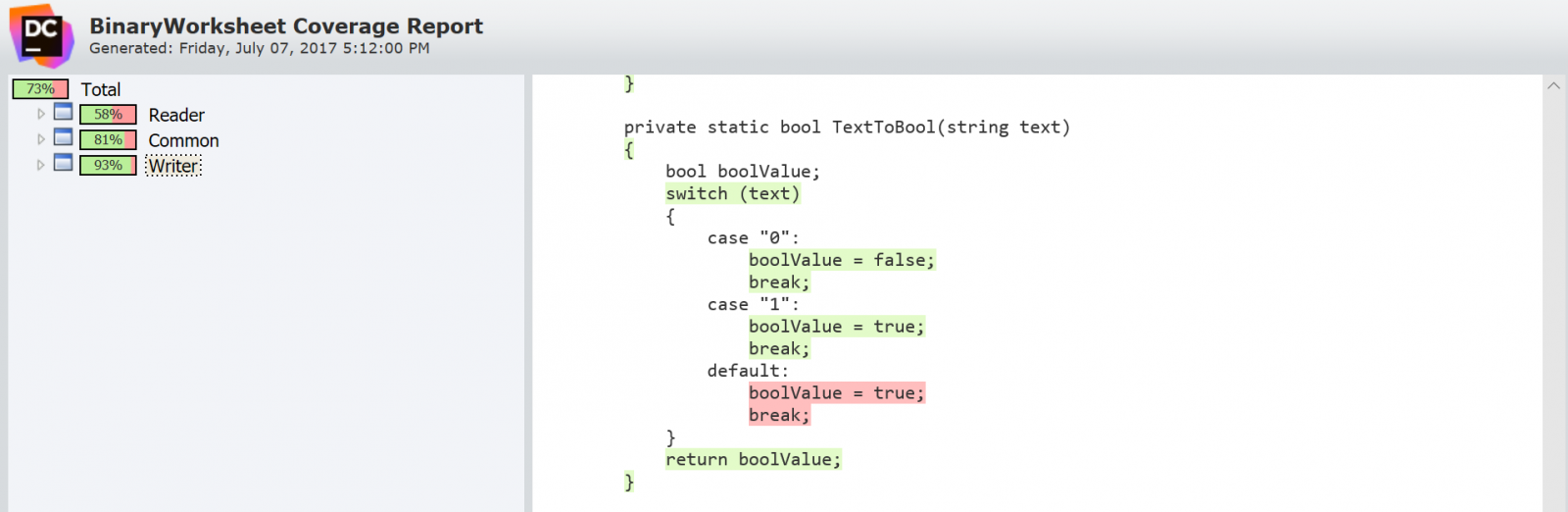

| Language | Code Coverage Tool |
| --- | --- |
| .NET | dotCover |
| Java | Cobertura, JaCoCo |
| Ruby | SimpleCov |
| JavaScript | Istanbul |
| Python | Coverage.py |
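
The tools listed above report which parts of the code actually ran during a test run. As a rough illustration of that idea only, and not a description of how any of these tools is implemented, the sketch below uses Python's standard `sys.settrace` hook to record which lines of a function execute. The names `classify` and `executed_lines` are hypothetical and exist only for this example.

```python
import sys


def classify(n):
    # Hypothetical function under test with two branches.
    if n < 0:
        return "negative"
    return "non-negative"


def executed_lines(func, *args):
    """Run func(*args) and return the line offsets (relative to the
    `def` line) that executed inside func."""
    code = func.__code__
    hit = set()

    def tracer(frame, event, arg):
        # Record only "line" events that belong to the function under test.
        if frame.f_code is code and event == "line":
            hit.add(frame.f_lineno - code.co_firstlineno)
        return tracer

    previous = sys.gettrace()
    sys.settrace(tracer)
    try:
        func(*args)
    finally:
        # Restore whatever trace hook was active before.
        sys.settrace(previous)
    return hit


# A test suite that only exercises non-negative input misses one line.
only_positive = executed_lines(classify, 5)
both = only_positive | executed_lines(classify, -1)
missed = both - only_positive
print(f"lines hit by one test: {len(only_positive)}, by both tests: {len(both)}")
```

Here `missed` holds the offset of the `return "negative"` line, which is the kind of gap a coverage report highlights. In a real project you would rely on a tool from the table instead of a hand-written tracer; with Coverage.py, for instance, a typical invocation is `coverage run -m pytest` followed by `coverage report -m`.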

Disclaimer: The statements and opinions expressed in this article are those of the author(s) and do not necessarily reflect the positions of Thoughtworks.