Imagine arriving at a bustling, noisy airport. It's easy to feel overwhelmed and anxious, unsure of where essential facilities like toilets or the right check-in for your flight might be. Panic and uncertainty can escalate quickly for people with disabilities, including people with anxiety or cognitive disabilities, people who are neurodivergent and wheelchair users.

Currently, it's challenging to provide personalized assistance to everyone who needs it, especially at busy times. Being guided or driven through the airport also brings with it a lack of agency, a reduced experience and a sense of otherness – as well as drawing attention that not everyone is comfortable with. Agentic AI could bridge these gaps by acting as a virtual, personalized guide.

Let's break down how agentic AI could assist, focusing on various types of disabilities and the entire journey from home to take-off.

Understanding the challenge

For many people with disabilities, getting to and through a large or unfamiliar space — particularly one like an airport with several essential processes to navigate — requires more than just a map. It also demands:

Pre-planning: Knowing exactly what to expect, from transport options to accessible entrances.

Navigating dynamic obstacles: Process updates, unscheduled or temporary changes, dynamic queuing or changes in routes.

Managing sensory overload: Loud noises, bright lights and chaotic environments can be disorienting.

Individual needs: What's accessible for a wheelchair user might not be for someone with a visual impairment or a cognitive disability.

"Last mile" navigation: Some people with disabilities, particularly mobility-related ones, seek assistance. Many others do not, yet still find it difficult to hear announcements or follow complex routes to their gate.

Agentic AI, with its ability to observe, reason, plan, and respond to dynamic inputs, could be a game-changer. Let’s imagine how agentic AI could assist people with various disabilities in navigating an airport.

What happens when the airport changes suddenly?

Airports are dynamic environments. Gate changes, temporary closures, and congestion happen daily. Agentic AI handles this by maintaining multiple accessible routes, monitoring live updates, and switching paths when needed, similar to how modern navigation apps reroute traffic, but with accessibility and cognitive load as first-class priorities.
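To make the rerouting idea concrete, here is a minimal sketch, assuming the airport's step-free paths can be modelled as a small weighted graph. The node names, travel times and the `shortest_route` helper are all hypothetical, and a real system would draw the blocked edges from live airport data rather than a hard-coded set.

```python
import heapq


def shortest_route(graph, start, goal, blocked=frozenset()):
    """Dijkstra over {node: {neighbor: seconds}}, skipping blocked edges."""
    queue = [(0, start, [start])]
    seen = set()
    while queue:
        cost, node, path = heapq.heappop(queue)
        if node == goal:
            return cost, path
        if node in seen:
            continue
        seen.add(node)
        for neighbor, seconds in graph.get(node, {}).items():
            if (node, neighbor) in blocked or neighbor in seen:
                continue
            heapq.heappush(queue, (cost + seconds, neighbor, path + [neighbor]))
    return None  # no accessible route is currently available


# Hypothetical step-free graph; edge weights are travel times in seconds.
AIRPORT = {
    "entrance": {"lift_a": 60, "ramp_b": 90},
    "lift_a": {"security": 45},
    "ramp_b": {"security": 80},
    "security": {"gate_17": 120},
}

planned = shortest_route(AIRPORT, "entrance", "gate_17")
# A live update reports that the path to lift A is closed, so plan again.
rerouted = shortest_route(
    AIRPORT, "entrance", "gate_17", blocked={("entrance", "lift_a")}
)
```

In this sketch the agent simply re-plans whenever the set of blocked edges changes. Keeping accessibility and cognitive load as priorities would mean choosing edge weights that reflect more than travel time.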

For visually impaired individuals:

A personalized "virtual guide": An AI agent, integrated into a smartphone app or a wearable device (like Seeing Eyes), can act as a continuous virtual guide.

Perception: Using computer vision (cameras) and GPS/indoor positioning, it constantly analyzes the environment.

Reasoning and planning: It understands the user's destination (e.g., "Terminal 1, Gate 17, Seat 14D") and current location, then plans the most accessible route, factoring in real-time crowd density, additional time that may be needed in security, temporary barriers or processes like additional baggage scans.

Action (guidance): It provides real-time, turn-by-turn audio instructions (e.g., "Walk straight for 10 seconds, then turn left at the sound of the concession stand," or "There is a queue forming five paces ahead, please veer right"). It can even provide haptic feedback (vibrations) for directional cues or obstacle warnings.

Object recognition and description: It can identify and describe objects in the environment upon request or proactively (e.g., "You are approaching the last bathroom before security queues on your left," or "There's a trash bin directly in front of you"). This helps users build a mental map of their surroundings.

Dynamic re-routing: If a planned route becomes blocked (e.g., a water spill or a maintenance cart), the AI agent can instantly re-evaluate and suggest an alternative accessible path.

Some of this is already available through AI assistants (ChatGPT has a vision tool), but joining up real-time visuals with a plan, airport information and the individual’s journey information will further improve the experience.

For mobility impaired individuals (e.g., wheelchair users):

Optimized accessible routing:

Pre-trip planning: An AI agent could analyze detailed maps and public transport networks to identify truly accessible routes, considering factors like ramp gradients, lift availability, accessible toilets and security lines. It can also integrate real-time data on lift outages or temporary ramp closures.

Personalized preferences: The agent can learn a user's specific mobility needs (e.g., preference for ramps over lifts, aversion to cobblestones) and customize routes accordingly.

Real-time obstacle avoidance: Using sensors (from the user's wheelchair or a connected device), the AI could detect uneven surfaces, sudden drops or crowded bottlenecks and guide the user around them.
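One way to picture personalized routing is as a per-user cost applied to each route segment. The sketch below is illustrative only: the `Segment` and `Profile` types, the gradient threshold and the lift penalty are assumptions made for the example, not part of any existing system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    name: str
    seconds: int
    kind: str  # "ramp", "lift" or "flat"
    gradient_pct: float = 0.0


@dataclass(frozen=True)
class Profile:
    max_gradient_pct: float
    lift_penalty: int = 0  # extra seconds added for each lift the user would rather avoid


def segment_cost(segment, profile):
    """Return a personalized cost in seconds, or None if the segment is unusable."""
    if segment.kind == "ramp" and segment.gradient_pct > profile.max_gradient_pct:
        return None
    penalty = profile.lift_penalty if segment.kind == "lift" else 0
    return segment.seconds + penalty


def route_cost(segments, profile):
    """Sum segment costs; the whole route is unusable if any segment is."""
    costs = [segment_cost(s, profile) for s in segments]
    return None if None in costs else sum(costs)


# Two hypothetical routes to the same point.
via_lift = [Segment("concourse", 60, "flat"), Segment("lift A", 40, "lift")]
via_ramp = [Segment("concourse", 60, "flat"), Segment("ramp B", 70, "ramp", 5.0)]

prefers_ramps = Profile(max_gradient_pct=6.0, lift_penalty=60)
needs_gentle_slopes = Profile(max_gradient_pct=4.0)
```

With these made-up numbers, the first profile favors the ramp even though the lift is faster, while the second rules the ramp out entirely because it is too steep.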

For individuals who are neurodivergent or have a mental health condition (e.g., ADHD, autism, anxiety, depression):

Personalized, predictable guidance:

Clear, concise instructions: The AI agent could be programmed to provide personalized navigation instructions to cut through the overwhelming amount of information and noise in the airport.

Visual supports: Integration with smart displays or AR glasses would provide visual cues (e.g., arrows overlaid on the real world, distinct markers for accessible paths) to complement audio instructions.

Pre-arrival "walkthroughs": The AI could generate a simulated virtual walkthrough, allowing the user to familiarize themselves with the environment and reduce anxiety before arrival.

"Safe space" identification: The AI would highlight designated quiet areas or sensory-friendly zones within the venue, providing routing to these spaces if needed.

Routine and predictability: The agent could learn preferred routines and offer them consistently, reducing cognitive load. It can also manage expectations by informing users of upcoming changes or potential sensory triggers.

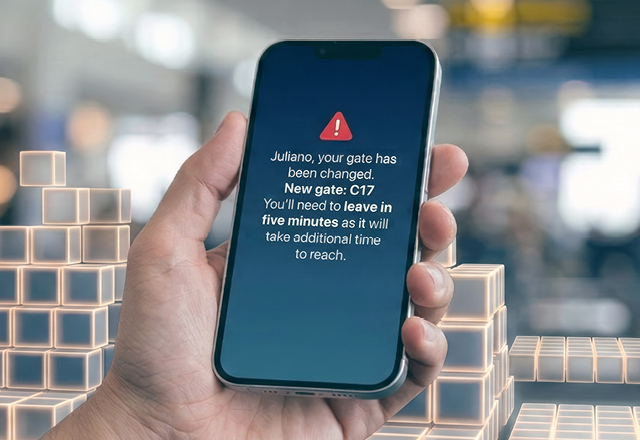

For individuals with hearing impairments:

Visual navigation cues: AI agents could provide visual alerts for important audible announcements (like gate changes or final calls) or emergency information through connected devices.

Real-time text descriptions: AI agents could also display real-time text versions of announcements, connection information, passenger calls and requests or important instructions on the user's device.
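As a rough illustration, the sketch below filters a feed of announcements down to those that matter to one passenger and marks urgent ones for a stronger alert. It assumes announcements already arrive as structured data (speech-to-text is out of scope here), and the `Announcement` type, the kind labels and the flight numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Announcement:
    flight: str  # empty string means the announcement is for everyone
    kind: str    # e.g. "gate_change", "final_call", "emergency"
    text: str


# Assumption: these kinds warrant a vibration as well as on-screen text.
URGENT_KINDS = {"final_call", "emergency"}


def alerts_for(passenger_flight, announcements):
    """Keep announcements relevant to this passenger and flag urgent ones."""
    alerts = []
    for a in announcements:
        if a.flight and a.flight != passenger_flight:
            continue  # addressed to a different flight
        alerts.append({"text": a.text, "vibrate": a.kind in URGENT_KINDS})
    return alerts


feed = [
    Announcement("TW123", "gate_change", "Flight TW123 now departs from gate 22"),
    Announcement("XY900", "final_call", "Final call for flight XY900"),
    Announcement("", "emergency", "Please evacuate Terminal 1"),
]
alerts = alerts_for("TW123", feed)
```

Here the passenger on the hypothetical flight TW123 would see the gate change as text and receive the evacuation notice with a vibration, while the final call for another flight is dropped.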

Grounding agentic AI in real-world airport systems

Agentic AI does not invent or interpret live airport data. Instead, it consumes and reasons over operational signals that airports and airlines already generate today, and then overlays accessibility logic on top of them.

These signals will include:

Airline and airport operational systems (AODB): Live data on gates, delays, terminal assignments, and disruptions, the same data used by airport displays and airline apps.

Digital indoor maps and wayfinding systems: Many airports already provide QR-code–based maps showing elevators, escalators, accessibility routes, and temporary path closures due to cleaning or construction.

Real-time staff signals (human-in-the-loop): At some airports, smart infrastructure enables staff to update system status (e.g. assistance delayed, lift unavailable), providing reliable, human-verified inputs.

Agentic AI layers personalized guidance on top of existing systems; it does not replace them.
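A small sketch of how such signals might be combined follows. It assumes, purely for illustration, that the agent prefers the most recent human-verified staff report over the automated feed; the `facility_status` function, the record fields and the facility names are hypothetical.

```python
def facility_status(facility_id, system_feed, staff_reports):
    """Prefer the latest staff report for a facility; fall back to the system feed."""
    staff = [r for r in staff_reports if r["facility"] == facility_id]
    if staff:
        latest = max(staff, key=lambda r: r["timestamp"])
        return {"status": latest["status"], "source": "staff"}
    # No staff report: use the automated feed, or admit the status is not known.
    return {"status": system_feed.get(facility_id, "unknown"), "source": "system"}


# Hypothetical inputs: an automated feed and two staff updates for the same lift.
system_feed = {"lift_a": "available", "lift_b": "available"}
staff_reports = [
    {"facility": "lift_a", "status": "available", "timestamp": 900},
    {"facility": "lift_a", "status": "unavailable", "timestamp": 1000},
]

lift_a = facility_status("lift_a", system_feed, staff_reports)
lift_b = facility_status("lift_b", system_feed, staff_reports)
```

Returning the source alongside the status lets downstream guidance say how it knows a lift is out of service, and reporting "unknown" rather than guessing keeps the agent from inventing data it does not have.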

Agentic AI's core capabilities in action

To summarize, there are a range of ways we can see agentic AI’s capabilities in action in this context.

Autonomy: The AI could process available data about the environment, interpret user needs, plan actions and execute them (e.g., generate navigation instructions).

Learning and adaptation: Over time, the AI could learn individual preferences, common obstacles and the specifics of each airport (or hospital, or other complex space), constantly refining its guidance. It can adapt to changing conditions in real time (e.g., a closed path due to an emergency or cleaning).

Integration: The impact of agentic AI for people with disabilities (PWD) scales with each integration:

Venue management systems: Accessing real-time information on facility status (e.g., broken lift), gate allocation and schedules.

Public transport data: Integrating live bus/train times and accessibility information to navigate to and within the airport (e.g., transfer shuttles).

Mapping APIs: Utilizing detailed indoor and outdoor mapping data.

Wearable devices: Providing discreet and effective feedback (audio, haptic, visual).

Multi-modal interaction: Users would interact with the AI via voice commands, text or even gestures, making the system highly adaptable to different needs.

An opportunity for significant change

Agentic AI can move beyond simple mapping applications to provide a truly personalized, proactive and dynamic navigation experience for people with disabilities. This fosters greater independence, reduces anxiety and makes it significantly easier for people with disabilities to navigate the world, opening up many opportunities and experiences.

The opportunity is also significant for business; the ‘purple dollar’ — the spending power of PWD and their families — is estimated to be $13 trillion, and where truly inclusive experiences can be created, loyalty from this sector can be anticipated.

A layered path to a North Star vision

The long-term opportunity is a fully adaptive, personalized accessibility companion across many types of public infrastructure.

However, this vision will be achieved in stages rather than all at once. Here we have considered only the example of an airport, where the stages are:

Foundation layer: Integrate existing airline, airport, and mapping systems to deliver reliable guidance.

Assistance & safety layer: Enable staff coordination, caregiver tracking, and emergency escalation.

Learning layer: Improve personalization and airport-specific guidance through usage and feedback.

Future layer: Deeper integration with smart infrastructure as airports evolve.

This layered approach allows impact today, while steadily moving toward a more intelligent, inclusive future.

Ultimately though, the opportunity this presents is that AI innovation can have real impact on people’s lives, specifically people with disabilities, when done thoughtfully. It doesn’t have to be novelty for novelty’s sake: it can be meaningful and its consequences far-reaching.

Matthew Johnston proudly served as our Global Disability inclusion lead, having joined Thoughtworks as a project manager. He was absolutely passionate about making the world more inclusive and was a trailblazer for equal opportunities.

As the UK’s first deaf juror, a trustee and advisor to Scope and a leader in many disabled communities, he helped us shape a more inclusive Thoughtworks and envisaged many ways technology can create equity. This article represents so much of his passion. The idea that he initiated is being developed, detailed and explored with love and gratitude for his contribution, and is shared now, in loving memory. Matthew, rest well and thank you for all you have done to make the world better.

Disclaimer: The statements and opinions expressed in this article are those of the author(s) and do not necessarily reflect the positions of Thoughtworks.