The first part of this two-part series discussed serverless functions as a deployment model: why you should consider them and when you should use them. We argued that serverless functions can be cost-effective when launching a new service, but less so at scale.

Our recommendation was for application teams to be flexible and change their deployment models as the context changes. The second part of the series explores how you could embed such flexibility into your applications.

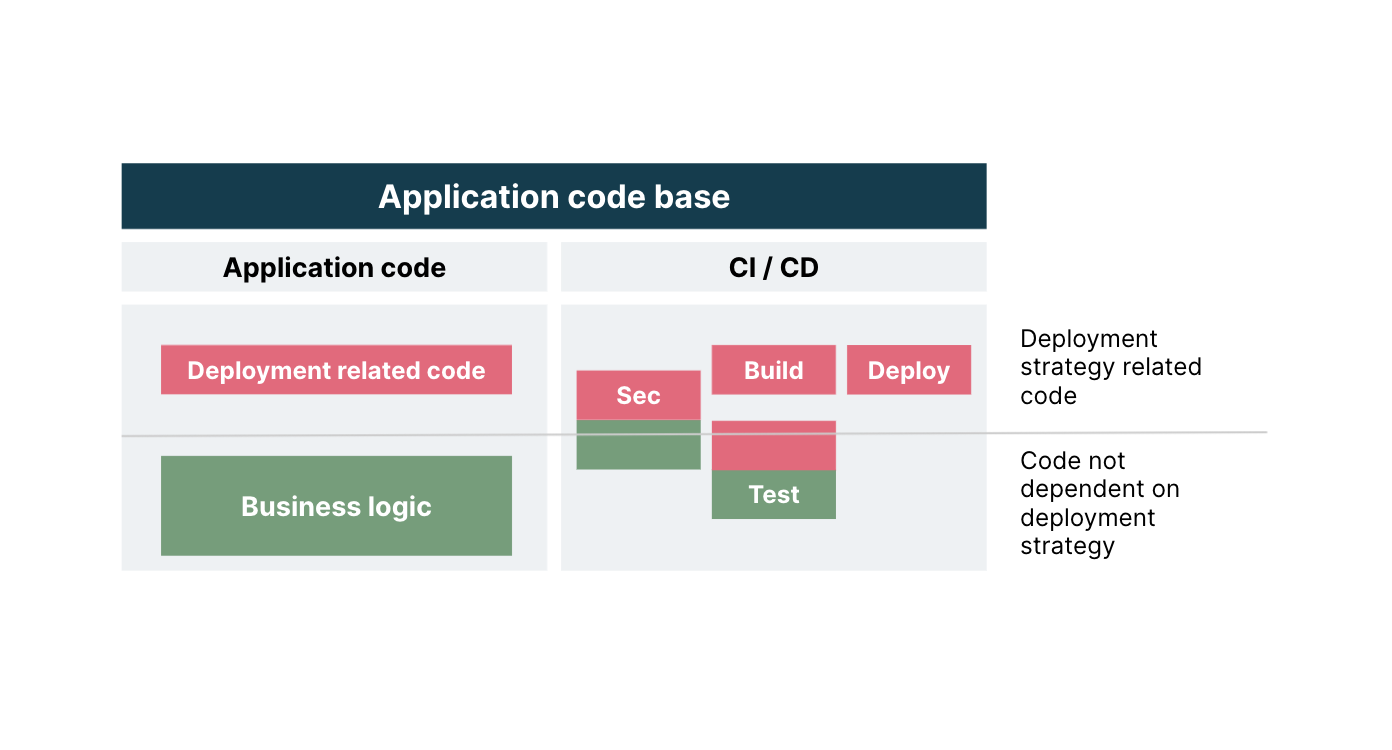

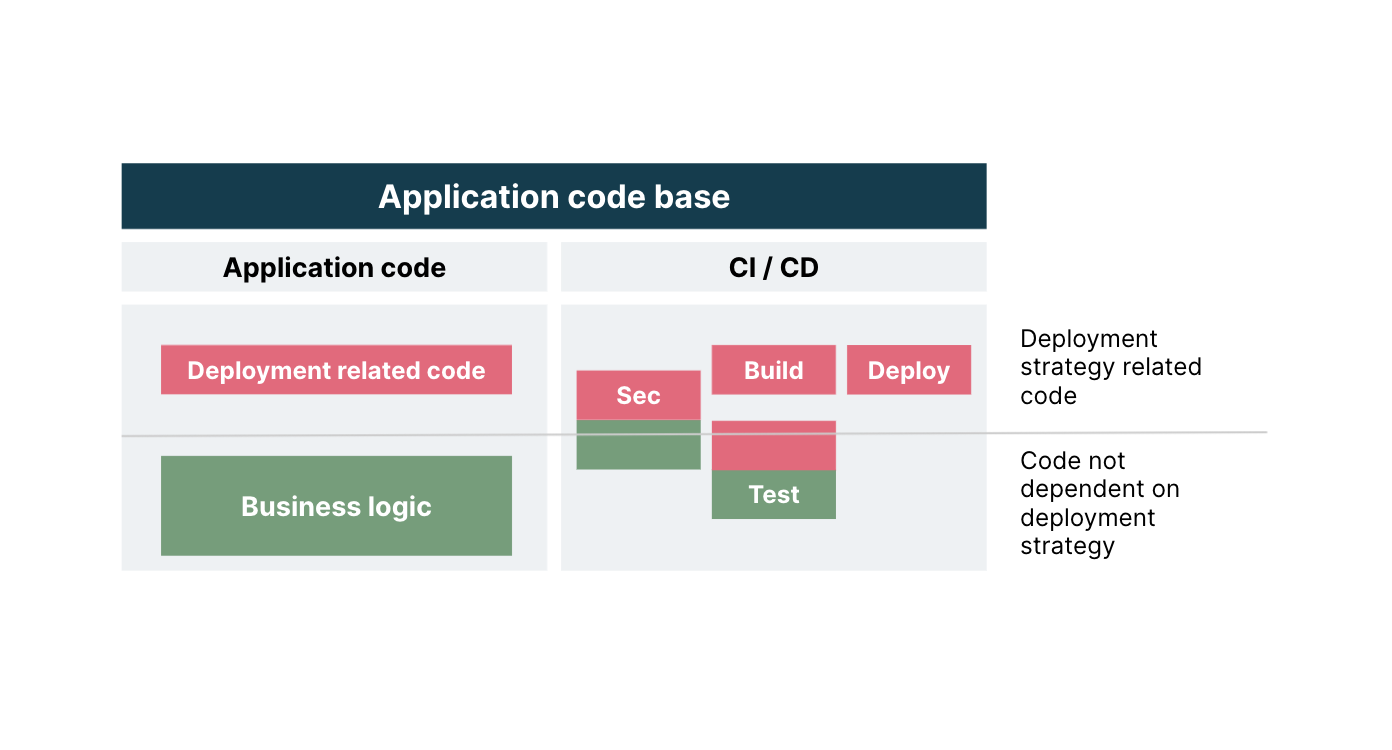

With modern development practices, an application's codebase is typically split into two parts: application logic and DevSecOps. Here’s an overview of what each of them contains and how you could optimize each for flexibility.

Application logic

This is the code (usually written in languages like Java, TypeScript or Go) that implements what the application should do. The application logic of a back-end service can be further divided into two logical parts.

Business logic: code that implements the behaviour of a service, exposed as logical APIs (methods or functions). It typically takes some data as input, perhaps as JSON, and returns some data as output. This code has no dependency on the technical mechanics of the environment where it actually runs, whether that is a container, a serverless function or an application server.
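To make the idea concrete, here is a minimal Go sketch of business logic with no runtime dependencies. The game-scoring API (`ScoreRequest`, `ScoreResponse`, `Score`) and its scoring rule are hypothetical, invented for illustration; the point is only that this code imports nothing tied to a container, a FaaS SDK or an application server.

```go
package main

// ScoreRequest is the hypothetical input of a logical API.
// The json tags let any adapter decode it from a JSON payload,
// but this code never performs any I/O itself.
type ScoreRequest struct {
	Hits   int `json:"hits"`
	Misses int `json:"misses"`
}

// ScoreResponse is the hypothetical output of the same API.
type ScoreResponse struct {
	Points int `json:"points"`
}

// Score is a pure function: the same input gives the same output,
// whatever runtime invokes it. The rule (10 points per hit, minus 5
// per miss, never below zero) is invented for illustration.
func Score(req ScoreRequest) ScoreResponse {
	points := 10*req.Hits - 5*req.Misses
	if points < 0 {
		points = 0
	}
	return ScoreResponse{Points: points}
}
```

Because `Score` only sees plain Go values, it can be called from an HTTP handler, a FaaS handler or a unit test without modification.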

When the code runs in a container, these logical APIs can be invoked by frameworks such as Spring Boot (for Java), Express (for Node.js) or Gorilla (for Go). When it runs as a serverless function, they are invoked through the FaaS mechanism implemented by the specific cloud provider.

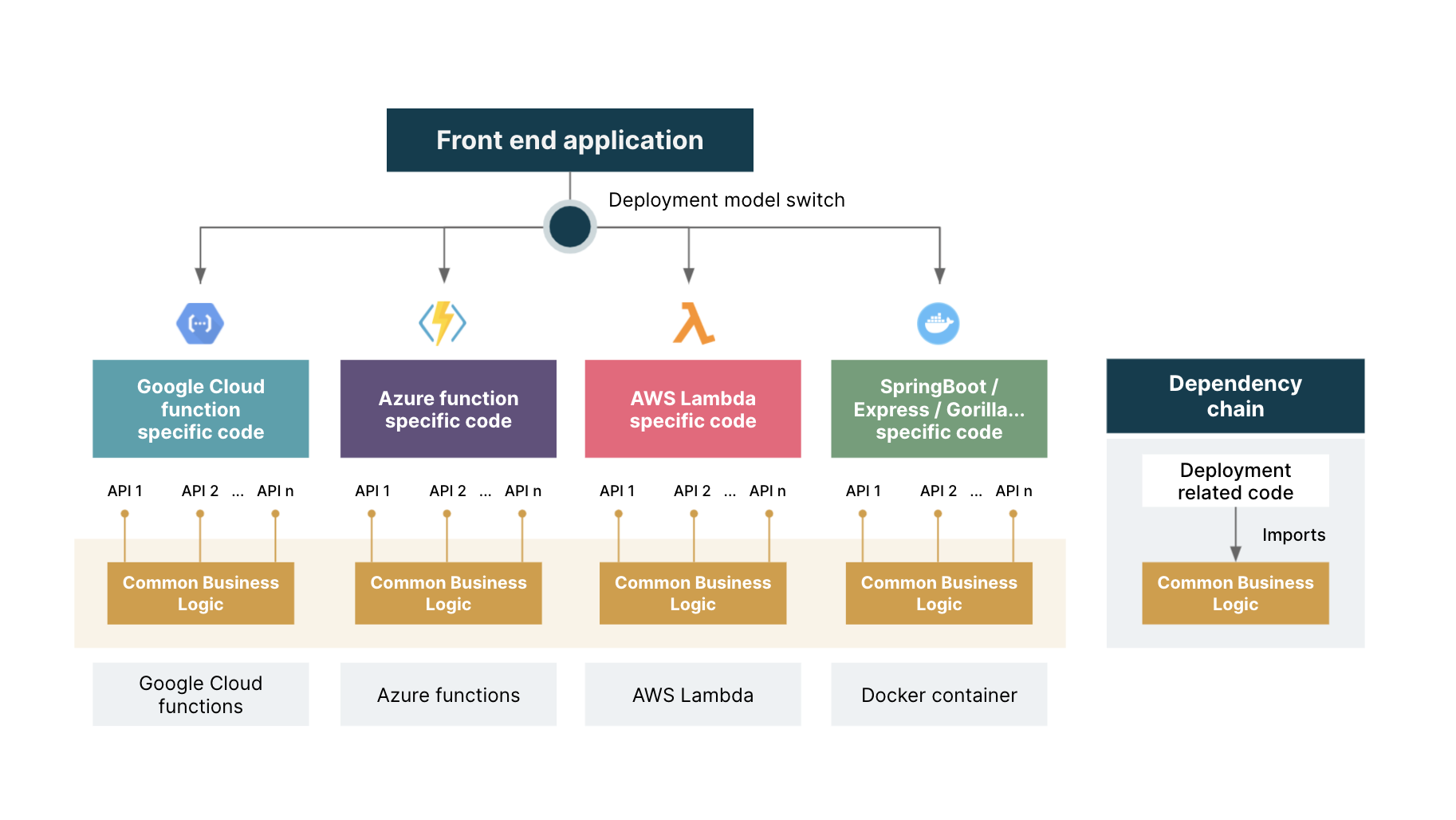

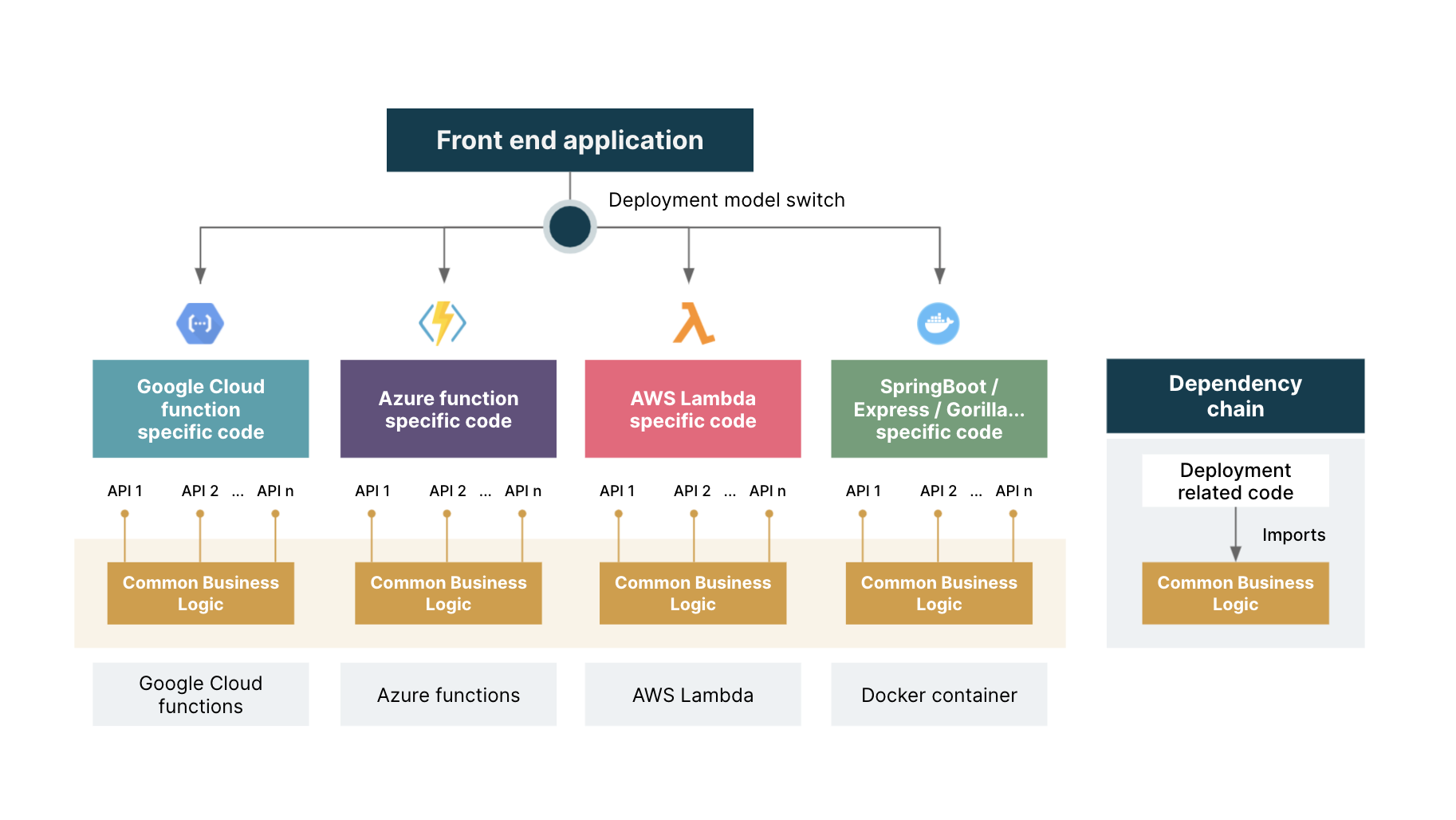

Deployment related code: code that deals with the mechanics of the runtime environment. If more than one deployment model must be supported, this part (and only this part) needs a separate implementation for each model. For container-based deployment, this is where the dependencies on Spring Boot, Express or Gorilla are concentrated.

In the case of FaaS, this part contains the code that implements the mechanics defined by the specific cloud provider (AWS Lambda, Azure Functions and Google Cloud Functions each have their own proprietary libraries for invoking the business logic).

Separation of deployment related code from common business logic to support switching among different deployment models

To keep the deployment strategy flexible at the lowest possible cost, it is crucial to separate these two parts clearly. Set up the codebase so that:

The deployment related code imports modules from the business logic

The business logic never imports any module/package which depends on a specific runtime

By following these two simple rules, we maximize the amount of (business logic) code shared among all deployment models, and therefore minimize the cost of moving from one model to another.

There is no general rule for the relative sizes of business logic and deployment related code. As an indication, an analysis of a simple interactive game deployable both on AWS Lambda and Google App Engine shows that deployment related code makes up 6% of the codebase (about 7,200 lines of code in total). So 94% of the codebase is the same, regardless of whether the service runs in a Lambda or in a container.
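Putting those percentages into approximate line counts (the total is itself approximate, so these figures are too):

$$
0.06 \times 7200 \approx 430 \ \text{lines of deployment related code}, \qquad 0.94 \times 7200 \approx 6770 \ \text{lines of shared business logic}
$$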

DevSecOps

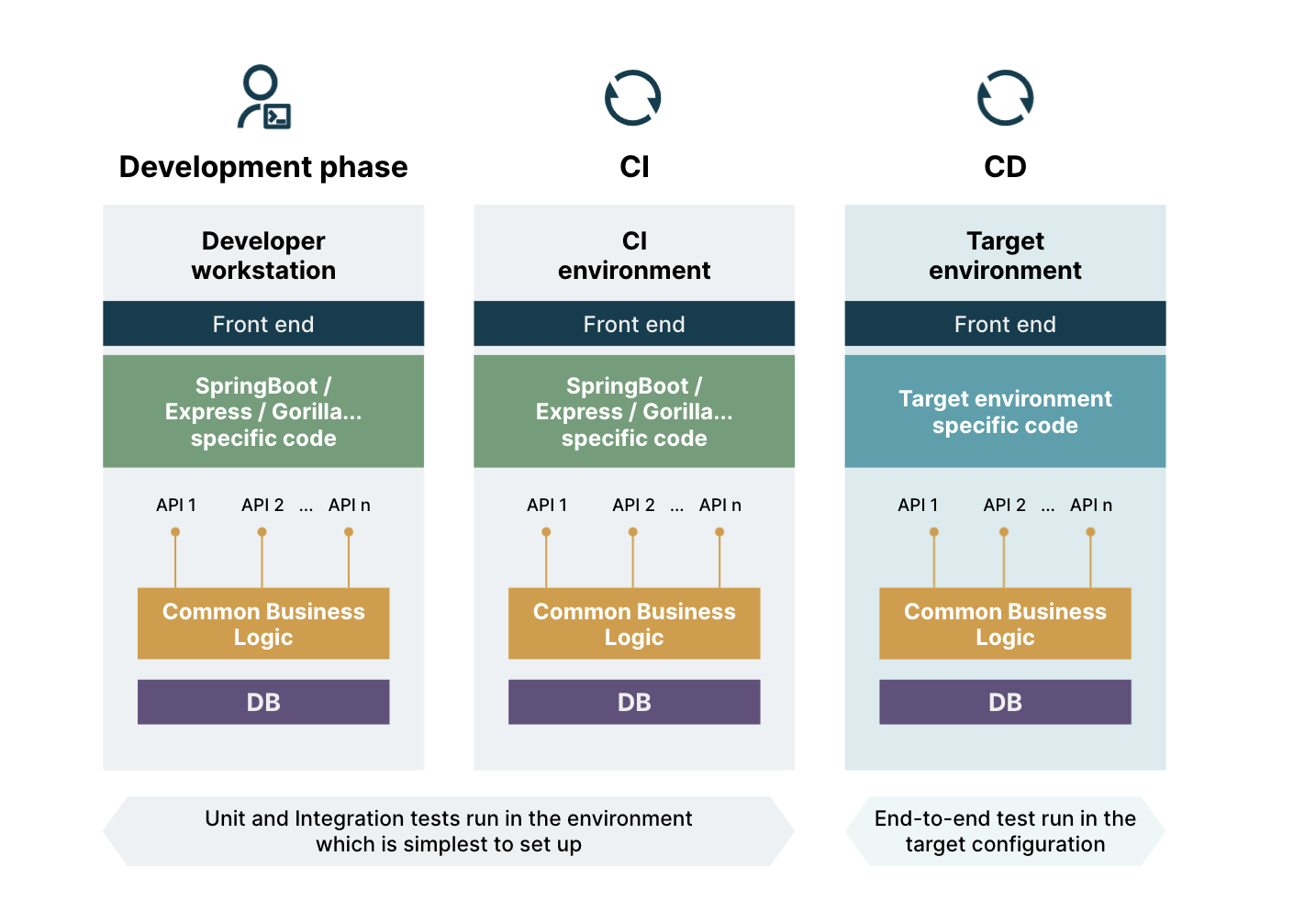

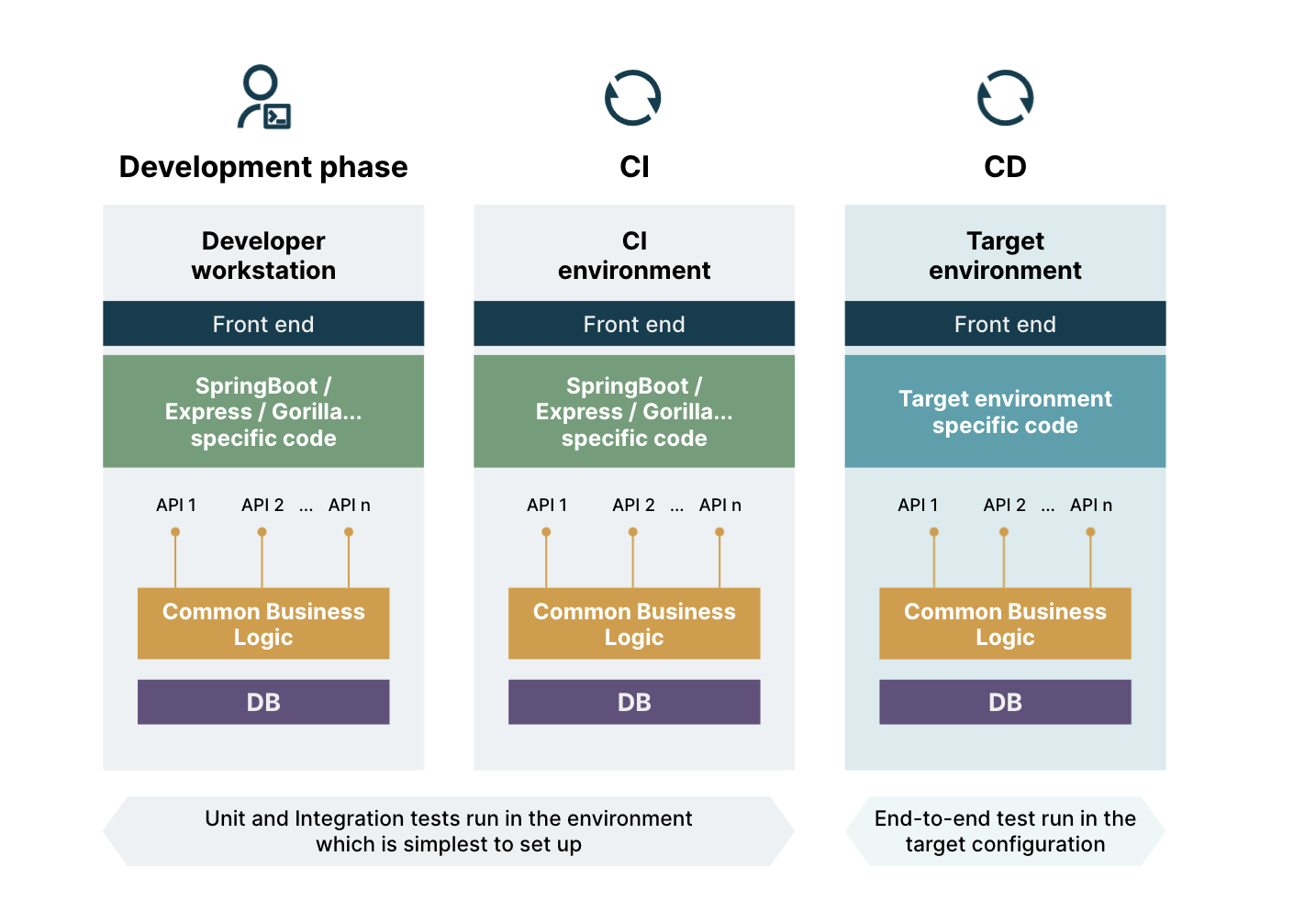

This part of the codebase is responsible for automating the build, test (including the security gates) and deployment of the application. By their nature, the build and deploy phases depend heavily on the runtime environment chosen for the service. If we opt for containers, the build phase will likely use Docker tools.

If we choose FaaS, there may be no build phase, but there will be commands to upload the code to the FaaS engine and to tell it which entry point to call when the serverless function is invoked. Similarly, there may be security checks that are specific to a FaaS model.

If you enforce a neat separation between business logic and deployment related code, you can also reap great benefits in testing. Both unit and integration tests can be run independently of the final deployment model, by choosing the simplest configuration and running the test suites against it. Tests can run on developers’ workstations, which speeds up test feedback. The bulk of the tests on the correctness of the business logic can be performed independently of the actual deployment, which increases developer productivity.
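As a sketch of that idea, the Go snippet below runs one suite of business-level cases against two configurations: a direct in-process call, and the HTTP adapter exercised in memory through the standard `net/http/httptest` package, so neither needs a container, a network or a cloud account. The names (`Caller`, `Direct`, `ViaHTTP`, `RunSuite`, `scoreHandler`) and the scoring rule are hypothetical, and the suite is written as a plain function rather than `go test` functions only to keep the sketch self-contained.

```go
package main

import (
	"bytes"
	"encoding/json"
	"fmt"
	"net/http"
	"net/http/httptest"
)

// --- Minimal copies of the hypothetical business logic and HTTP adapter ---

type ScoreRequest struct {
	Hits   int `json:"hits"`
	Misses int `json:"misses"`
}

type ScoreResponse struct {
	Points int `json:"points"`
}

func Score(req ScoreRequest) ScoreResponse {
	points := 10*req.Hits - 5*req.Misses
	if points < 0 {
		points = 0
	}
	return ScoreResponse{Points: points}
}

func scoreHandler(w http.ResponseWriter, r *http.Request) {
	var req ScoreRequest
	if err := json.NewDecoder(r.Body).Decode(&req); err != nil {
		http.Error(w, "invalid JSON body", http.StatusBadRequest)
		return
	}
	w.Header().Set("Content-Type", "application/json")
	_ = json.NewEncoder(w).Encode(Score(req))
}

// Caller hides how the logical API is reached, so one suite can run
// against any configuration.
type Caller func(ScoreRequest) (ScoreResponse, error)

// Direct is the simplest configuration: a plain in-process call.
func Direct(req ScoreRequest) (ScoreResponse, error) { return Score(req), nil }

// ViaHTTP drives the HTTP adapter in memory with httptest.
func ViaHTTP(req ScoreRequest) (ScoreResponse, error) {
	body, err := json.Marshal(req)
	if err != nil {
		return ScoreResponse{}, err
	}
	rec := httptest.NewRecorder()
	scoreHandler(rec, httptest.NewRequest(http.MethodPost, "/score", bytes.NewReader(body)))
	if rec.Code != http.StatusOK {
		return ScoreResponse{}, fmt.Errorf("unexpected status %d", rec.Code)
	}
	var out ScoreResponse
	err = json.NewDecoder(rec.Body).Decode(&out)
	return out, err
}

// RunSuite checks business-level expectations through the given Caller
// and returns the first failure, or nil if every case passes.
func RunSuite(call Caller) error {
	cases := []struct {
		in   ScoreRequest
		want int
	}{
		{ScoreRequest{Hits: 3, Misses: 1}, 25},
		{ScoreRequest{Hits: 2, Misses: 0}, 20},
		{ScoreRequest{Hits: 0, Misses: 4}, 0},
	}
	for _, c := range cases {
		got, err := call(c.in)
		if err != nil {
			return fmt.Errorf("%+v: %w", c.in, err)
		}
		if got.Points != c.want {
			return fmt.Errorf("%+v: got %d points, want %d", c.in, got.Points, c.want)
		}
	}
	return nil
}
```

In a real project, `RunSuite` would usually be called from ordinary `TestXxx` functions run by `go test`, and a third `Caller` could wrap a FaaS handler in the same way.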

Isolate and minimize what depends on the deployment model

Separation of concerns among the parts of a modern application codebase

Therefore, to optimize the flexibility of deployment models:

Isolate what is not dependent on the deployment model and maximize it

Design your solutions to minimize deployment related code

Enforce a strong division between these parts throughout the lifetime of the project or product, with a mix of design, code reviews and static analysis checks implemented as fitness functions integrated into the build process

In conclusion, serverless functions are a great option in modern architectures. They offer the fastest time to market, the lowest entry cost and the highest elasticity. For these reasons, they are the best choice available, especially when we need to ship a new product or face highly variable loads.

But change is inevitable. Loads can become more stable and predictable, and in such circumstances FaaS models can become more expensive than traditional models based on dedicated resources.

It is therefore important to keep applications flexible enough to change deployment models, and to keep the cost of such a change as low as possible. This can be achieved by enforcing a clear separation between what is core to the application logic, which is independent of the platform it runs on, and what depends on the specific deployment model chosen.

Disclaimer: The statements and opinions expressed in this article are those of the author(s) and do not necessarily reflect the positions of Thoughtworks.