Predictability and Classes of Service

Imagine you are in a line at an airport, waiting to go through immigration. The line ahead of you is huge and there's only one (not very excited) agent serving that line. You're sweating, because the air-conditioning is being fixed at this moment, so it's as hot as it gets.

After a few minutes, you get a pretty good idea about how long it's going to take. Your thinking goes something like this: "Five people took fifteen minutes to go through. There are twenty people left in front of me, so another hour or so should do it."

Soon after you make that calculation, you start trying to convince yourself that it won't be that bad. But it doesn't take long for you to realize that your calculation missed something: it didn't take into consideration the "expedite" passengers who would arrive soon.

A plane lands 45 minutes late. Unfortunately, some of its passengers don't have enough time to wait in line. They might miss their connecting flights, so they are handed free passes, which allow them to go to the front of the line.

"Ok, just fifteen more minutes", you say resignedly to yourself.

Later, after these passengers are through, some elderly people start appearing randomly. Sometimes one by one, sometimes in groups. They also have priority to be served before anyone else. At this point, you simply give up trying to come up with a forecast about when you'll be out of this line and free to enjoy your trip.

If this were a real story, what you just experienced would be the effects of what is known as 'Classes of Service'.

Class of Service

Photo Credit: larskflem via Compfight cc

According to Daniel S. Vacanti, "A Class of Service is a policy or set of policies around the order in which work items are pulled through a given process once those items are committed to." In other words, a "Class of Service", which I'll just call CoS from now on, means that you treat some items differently once they are in your process, probably because of their different risk profiles, item types or some other reason. In the airport example, there were three different CoS (Late, Elderly and Standard Passengers) and you, my friend, were unlucky enough to be in the lowest priority one.

The idea behind CoS is that you should give some items preferential treatment because of their business value or their risk profile. One example can be found in software development, when work is stopped to make room for some late regulatory item. This happens because the expected lead time is longer than the item's due date allows. All fine and dandy, but this approach takes a toll on predictability.

Prioritization

Don't confuse CoS with prioritization. If you take a closer look at Vacanti's definition of CoS, there is one subtlety: "once those items are committed to". In other words, it happens after the item enters the process, therefore after prioritization. Many teams struggle with prioritization issues, but that is a topic for another post ;-).

When you pull work into your process, you should, by all possible means, pull the most valuable piece of work by whatever criteria you are using to prioritize it. But, once it's in, it's in. Later in the process, your best approach to predictability should be to treat all items in a First in / First Served (FIFS) order.
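To make the difference between the two pull policies concrete, here is a minimal sketch. The `Item` class, the `CLASS_RANK` table and the item names are all hypothetical; the only thing taken from the text is the idea that FIFS looks at commitment order alone, while a CoS policy looks at the class first.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    name: str
    committed_at: int              # order in which the item entered the process
    service_class: str = "standard"


# Hypothetical ranking: a lower number is pulled first.
CLASS_RANK = {"expedite": 0, "standard": 1}


def pull_order_fifs(items):
    """First In / First Served: oldest commitment first, class ignored."""
    return sorted(items, key=lambda item: item.committed_at)


def pull_order_with_cos(items):
    """Class of Service first, then age within each class."""
    return sorted(
        items,
        key=lambda item: (CLASS_RANK[item.service_class], item.committed_at),
    )


in_progress = [
    Item("A", 1),
    Item("B", 2),
    Item("C", 3, "expedite"),
    Item("D", 4),
]

fifs_names = [item.name for item in pull_order_fifs(in_progress)]
cos_names = [item.name for item in pull_order_with_cos(in_progress)]
print(fifs_names)  # ['A', 'B', 'C', 'D']
print(cos_names)   # ['C', 'A', 'B', 'D']
```

With FIFS, item C waits its turn. With the CoS policy it jumps ahead of A and B, which were committed to earlier.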

Little's Law assumptions

Ok, let's be a little bit more technical here.

Dr. John Little, a professor from MIT, discovered a relationship between some process variables. The formula he came up with relates Average Lead Time, Work In Progress (WIP) and Throughput.

What is important, though, is not the formula itself. It is the fact that this relationship has to conform to some assumptions to hold true. The best part is that the more your process conforms to those assumptions, the more predictable and stable your process becomes.

Let's explore the assumptions behind Little's formula:

- The average arrival rate should equal the average departure rate. This means that the rate at which items enter your system should equal the rate at which they leave it.

- All work that is started should eventually be completed and exit the system.

- The amount of WIP should be roughly the same at the beginning and at the end of the time interval chosen for the calculation.

- The average age of the WIP is neither increasing nor decreasing.

- Lead Time, WIP, and Throughput must all be measured using consistent units.
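Assumptions one and three can be checked with very little data. The sketch below is only an illustration: the function name, the daily counts and the 10% tolerance are assumptions of mine, not part of Little's Law.

```python
def check_flow_balance(daily_arrivals, daily_departures, wip_at_start, tolerance=0.1):
    """Check assumptions one and three over a single time interval.

    `tolerance` is a hypothetical threshold for "roughly the same".
    """
    if not daily_arrivals or len(daily_arrivals) != len(daily_departures):
        raise ValueError("both series must cover the same, non-empty set of days")

    days = len(daily_arrivals)
    arrival_rate = sum(daily_arrivals) / days
    departure_rate = sum(daily_departures) / days
    # Everything that arrived and did not leave is still in progress.
    wip_at_end = wip_at_start + sum(daily_arrivals) - sum(daily_departures)

    return {
        "arrival_rate": arrival_rate,
        "departure_rate": departure_rate,
        "wip_at_end": wip_at_end,
        "rates_balanced": abs(arrival_rate - departure_rate) <= tolerance * departure_rate,
        "wip_stable": abs(wip_at_end - wip_at_start) <= tolerance * wip_at_start,
    }


# Hypothetical week in which work is pulled only when something finishes.
balanced = check_flow_balance([2, 1, 3, 2, 2], [1, 2, 2, 3, 2], wip_at_start=6)

# Hypothetical week in which three items start per day but only two finish.
overloaded = check_flow_balance([3, 3, 3, 3, 3], [2, 2, 2, 2, 2], wip_at_start=6)

print(balanced["rates_balanced"], balanced["wip_stable"])      # True True
print(overloaded["rates_balanced"], overloaded["wip_at_end"])  # False 11
```

Note how the two assumptions are linked: over an interval, the change in WIP is simply arrivals minus departures.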

If you properly limit your WIP, then assumptions number one and three shouldn't be such a problem. Limiting WIP guarantees that new work only enters your system when there is room to accommodate it. In other words, limiting WIP should keep the arrival rate in balance with the departure rate.

The second assumption states that, whatever happens, an item that enters the system goes all the way to done. What happens fairly frequently is that an item that loses its business value is removed from the process (it does not flow to the end), and the metrics get skewed. In the airport line example, it would be equivalent to someone simply deciding to leave the line for good. Don't get me wrong, it would be stupid to work on something that has no more business value. What I mean is that you'll have to either move that item to "done" and properly account for that work in your data, or treat it as having never entered your process in the first place.

It is the fourth assumption that gets bruised and battered by CoS. Some WIP will age more than it should in order to achieve shorter lead times for the priority CoS. In the case of the airport line, you had to "age" in line so that the late passengers and the elderly could get served earlier.
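To see this aging in numbers, here is a small simulation of the airport line. It is a sketch under assumptions: one agent, three minutes per passenger (five people in fifteen minutes, as in the story), six standard passengers already in line and two late passengers who show up five minutes later. The arrival pattern and the function name are made up for illustration.

```python
import heapq

SERVICE_MINUTES = 3  # from the story: five people took fifteen minutes


def simulate(passengers, use_cos):
    """Single agent, no interruption of the passenger being served.

    passengers: list of (arrival_minute, service_class).
    Returns each passenger's lead time in minutes, in input order.
    """
    pending = sorted(enumerate(passengers), key=lambda entry: entry[1][0])
    waiting = []                      # heap of (rank, arrival, index)
    lead_times = [None] * len(passengers)
    clock = 0
    next_pending = 0

    while next_pending < len(pending) or waiting:
        # Everyone who has arrived by now joins the line.
        while next_pending < len(pending) and pending[next_pending][1][0] <= clock:
            index, (arrival, service_class) = pending[next_pending]
            rank = 0 if (use_cos and service_class == "expedite") else 1
            heapq.heappush(waiting, (rank, arrival, index))
            next_pending += 1

        if not waiting:
            # The agent is idle until the next passenger arrives.
            clock = pending[next_pending][1][0]
            continue

        _, arrival, index = heapq.heappop(waiting)
        clock += SERVICE_MINUTES
        lead_times[index] = clock - arrival

    return lead_times


line = [(0, "standard")] * 6 + [(5, "expedite")] * 2

fifs = simulate(line, use_cos=False)
cos = simulate(line, use_cos=True)
print(fifs)  # [3, 6, 9, 12, 15, 18, 16, 19]
print(cos)   # [3, 6, 15, 18, 21, 24, 4, 7]
```

In this toy case the total time spent in line is the same under both policies. The expedite class just moves the waiting onto the standard passengers: the last of them goes from 18 to 24 minutes, and that extra time depends on arrivals nobody could see when the forecast was made.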

The point is that every time your process violates an assumption of Little's Law, there will be a detrimental impact on your predictability.

Slack

If you have slack in your system, you may be able to cope with different classes of service without impacting your predictability. In his book, "Kanban: Successful Evolutionary Change for Your Technology Business", David J. Anderson proposes a CoS called Intangible, which comprises work that is important but not needed in the near future, like upgrading the database version or paying off other tech debt. There is no expected lead time associated with this CoS. So, the idea is that a percentage of the items flowing through your system are Intangible items, which means some capacity is used to work on them. It is exactly this capacity that is reallocated to higher-priority items when they happen to appear. This is the slack in your system. It is almost like keeping a developer aside, but at least she does some valuable work.

I would argue that it can be a good solution if you can implement it, especially because it addresses the problem that this is hardly the type of work that would be easily prioritised by the business. However, in my opinion, all technical work should translate into either direct business value or increased capacity to deliver business value. What I mean is that technical stuff should have its value assessed and be prioritised just like any other type of work.

Shouldn't you use CoS, then?

Am I saying that CoS are evil and you shouldn't use them anymore? Not at all; what I am saying is that you should be aware of their negative impact on your process's predictability. A crash on a production server is an example of work that normally can't wait. You just want to limit the number of times you violate Little's Law assumptions.

What about super valuable work to the business (remember, even technical stuff should be seen through a business lens)? Shouldn't it have different treatment? The short answer is: it depends. If you can assess its business value beforehand, go for it. But when we talk about knowledge work, I think it is rarely possible to figure out the business value of an item upfront. The item's business value should be assessed later, when you deliver that item and can get real feedback. So, once more, all items should be prioritized by whatever criteria possible. It's just that they shouldn't be treated differently once they enter your process if you care about predictability (the kind that would help you answer the question: When will this piece or batch of work be done?).

Like I said before, having slack is a possible solution, but it is an expensive one. Why develop something that is not top priority now, just to be able to cope with the variability blow caused by CoS?

What should you do, then?

We should ask ourselves why we use CoS in the first place. I talked a little bit about business value and risk profiles. Yet, most of the time, the reason for using CoS is one of the following two: either things are taking too long to go through your process, or their Lead Time is too unpredictable and the business needs predictability, at least for some items.

If you could shorten Lead Time and turn your process into a predictable one, you probably wouldn't need CoS. Well, at least you wouldn't need them that frequently. And the less your process violates Little's Law assumptions, the more predictable it will be.

So, how do you make your process predictable and, at the same time, shorten Lead Time?

I haven't shown you Little's formula yet, so here it goes (a version of it):

Avg Lead Time = Avg WIP / Avg Throughput

It clearly states that to decrease average Lead Time, you have to reduce WIP, increase throughput, or both. Normally, management likes to influence the throughput variable, using "tools" like late hours, moving people from one project to another, etc. I don't consider this to be the best approach, though. WIP is a much better lever to use.

A common problem is having an arrival rate greater than the departure rate, which means WIP is uncontrolled. Remember assumption number one. As the formula shows, changes in average WIP greatly impact average Lead Time.
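As a quick worked example of the formula, take the airport line from the beginning: twenty people in front of you and an agent who serves five people every fifteen minutes, that is, twenty people per hour. The function name is mine, and the "half the line" and "second agent" scenarios are hypothetical.

```python
def average_lead_time(avg_wip, avg_throughput):
    """Little's formula: Avg Lead Time = Avg WIP / Avg Throughput.

    The result is in the time unit of the throughput (assumption five:
    consistent units).
    """
    if avg_throughput <= 0:
        raise ValueError("throughput must be positive")
    return avg_wip / avg_throughput


PEOPLE_PER_HOUR = 20  # five people every fifteen minutes

as_is = average_lead_time(avg_wip=20, avg_throughput=PEOPLE_PER_HOUR)
half_the_line = average_lead_time(avg_wip=10, avg_throughput=PEOPLE_PER_HOUR)
second_agent = average_lead_time(avg_wip=20, avg_throughput=2 * PEOPLE_PER_HOUR)

print(as_is)          # 1.0 hour
print(half_the_line)  # 0.5 hours
print(second_agent)   # 0.5 hours
```

Halving WIP and doubling throughput give the same average on paper. The difference is in what each one costs you: a second agent has to be paid for, while a shorter line is a matter of policy.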

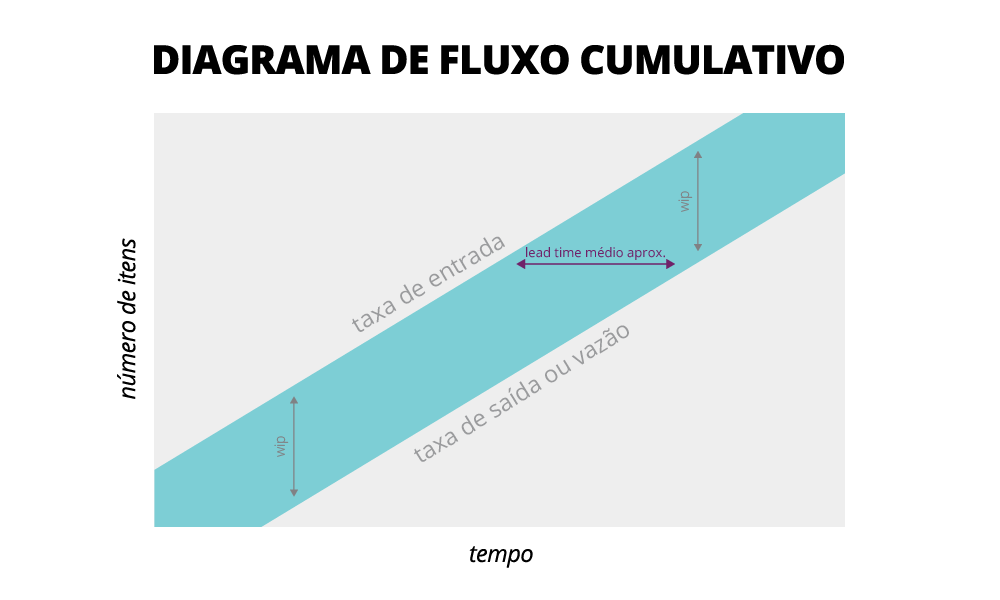

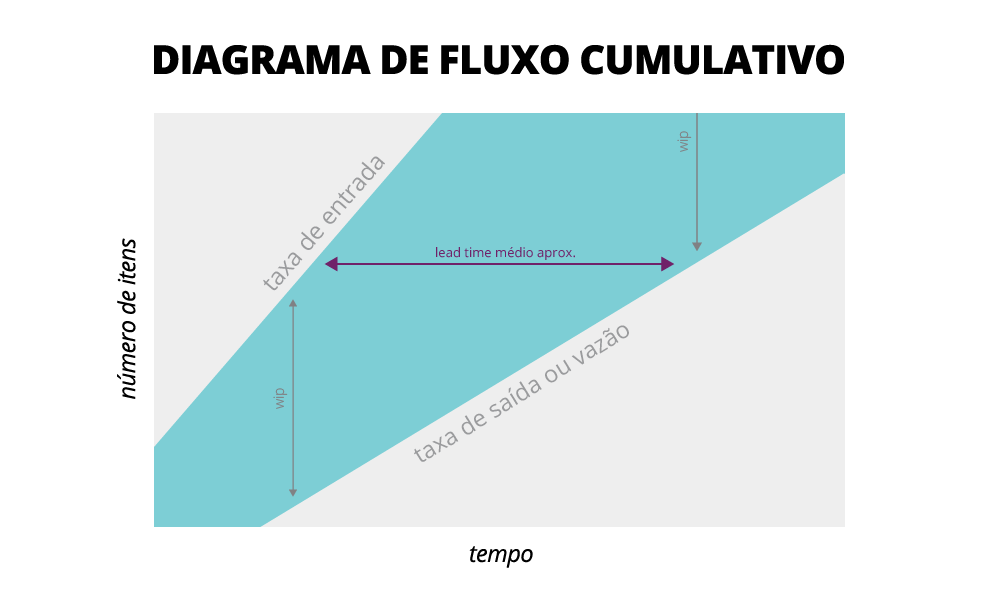

So, the first important step is for you to control WIP. But before you make any change to your process, you should make sure you can measure its effects. One of the ways to accomplish that is to gather flow data (WIP, Lead Time, Throughput, waiting times, etc.). Once gathered, visual tools such as Cumulative Flow Diagrams and Lead Time Scatterplots can help you ask the right questions and, later, check the effects of changes. After you have some data, you can start changing your process's policies and then monitor the effects on your data.
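Gathering this data does not require fancy tooling: a start date and a finish date per item are enough to get going. The sketch below uses made-up items and counts Lead Time as the plain difference in days between finish and start, which is just one possible convention.

```python
from datetime import date

# Hypothetical work items: (name, started, finished).
# finished is None while the item is still in progress.
ITEMS = [
    ("A", date(2024, 1, 1), date(2024, 1, 5)),
    ("B", date(2024, 1, 2), date(2024, 1, 9)),
    ("C", date(2024, 1, 3), date(2024, 1, 4)),
    ("D", date(2024, 1, 8), None),
]


def lead_time_points(items):
    """(finish date, lead time in days) pairs: the raw data of a Lead Time scatterplot."""
    points = [
        (finished, (finished - started).days)
        for _, started, finished in items
        if finished is not None
    ]
    return sorted(points)


def wip_on(items, day):
    """Number of items started on or before `day` and not finished by that day."""
    return sum(
        1
        for _, started, finished in items
        if started <= day and (finished is None or finished > day)
    )


def throughput_between(items, first_day, last_day):
    """Number of items finished within the inclusive date range."""
    return sum(
        1
        for _, _, finished in items
        if finished is not None and first_day <= finished <= last_day
    )


points = lead_time_points(ITEMS)
wip_jan_3 = wip_on(ITEMS, date(2024, 1, 3))
wip_jan_8 = wip_on(ITEMS, date(2024, 1, 8))
finished_first_week = throughput_between(ITEMS, date(2024, 1, 1), date(2024, 1, 7))

print([lead_time for _, lead_time in points])     # [1, 4, 7]
print(wip_jan_3, wip_jan_8, finished_first_week)  # 3 2 2
```

Take these three numbers before and after a policy change and you have a first, rough picture of its effect.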

Let's return once more to our airport line. How much time was spent being served by the immigration agent and how much was spent simply waiting? Waiting time is another common problem that causes Lead Time to lengthen. In most processes, items spend more time waiting to be worked on than actually being worked on. Your Kanban board and metrics should reflect that, with columns/stages representing items that are ready to be pulled by a downstream stage. The problem is that no value is added while an item waits. So, once you have measured these waiting times, you should change the way your team works in order to eliminate or reduce them. Simply removing the columns does not solve the problem, it just hides it, ok?

Reducing both WIP and waiting times should positively impact your process's Lead Time. And shorter Lead Time has a secondary positive impact: quality metrics improve, since problems are discovered faster and people still remember what they worked on. By the way, better software also produces fewer unexpected problems, so more predictability can be achieved.
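If your board has explicit waiting columns, the share of Lead Time spent waiting is easy to compute. This ratio is commonly called flow efficiency. The column names and the days below are hypothetical; use whatever stages your own board has.

```python
# Hypothetical waiting columns on a Kanban board.
WAITING_STAGES = {"Ready for Dev", "Ready for Test"}


def flow_efficiency(days_per_stage):
    """Fraction of an item's Lead Time during which it was actually worked on."""
    total = sum(days_per_stage.values())
    if total == 0:
        raise ValueError("the item has no recorded time")
    active = sum(
        days
        for stage, days in days_per_stage.items()
        if stage not in WAITING_STAGES
    )
    return active / total


# Days one (made-up) item spent in each column.
item_history = {
    "Ready for Dev": 4,
    "Dev": 2,
    "Ready for Test": 3,
    "Test": 1,
}

efficiency = flow_efficiency(item_history)
print(efficiency)  # 0.3
```

In this example the item took ten days to deliver, but someone was working on it for only three of them. The other seven are where you should look first.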

I hope these ideas can help you improve your process' predictability and make your clients happy(ier).

References

Actionable Agile Metrics for Predictability - Daniel S. Vacanti

Kanban: Successful Evolutionary Change for Your Technology Business - David J. Anderson

Priming Kanban - Jesper Boeg

Disclaimer: The statements and opinions expressed in this article are those of the author(s) and do not necessarily reflect the positions of Thoughtworks.