In 2026, the software engineering landscape has moved beyond "vibe coding". Throwing raw prompts at a chat interface and hoping for a usable result does not work in enterprise software development. To build production-grade, industrial-scale software today, developers need to adopt a structured approach that treats AI as a sophisticated engineering stack.

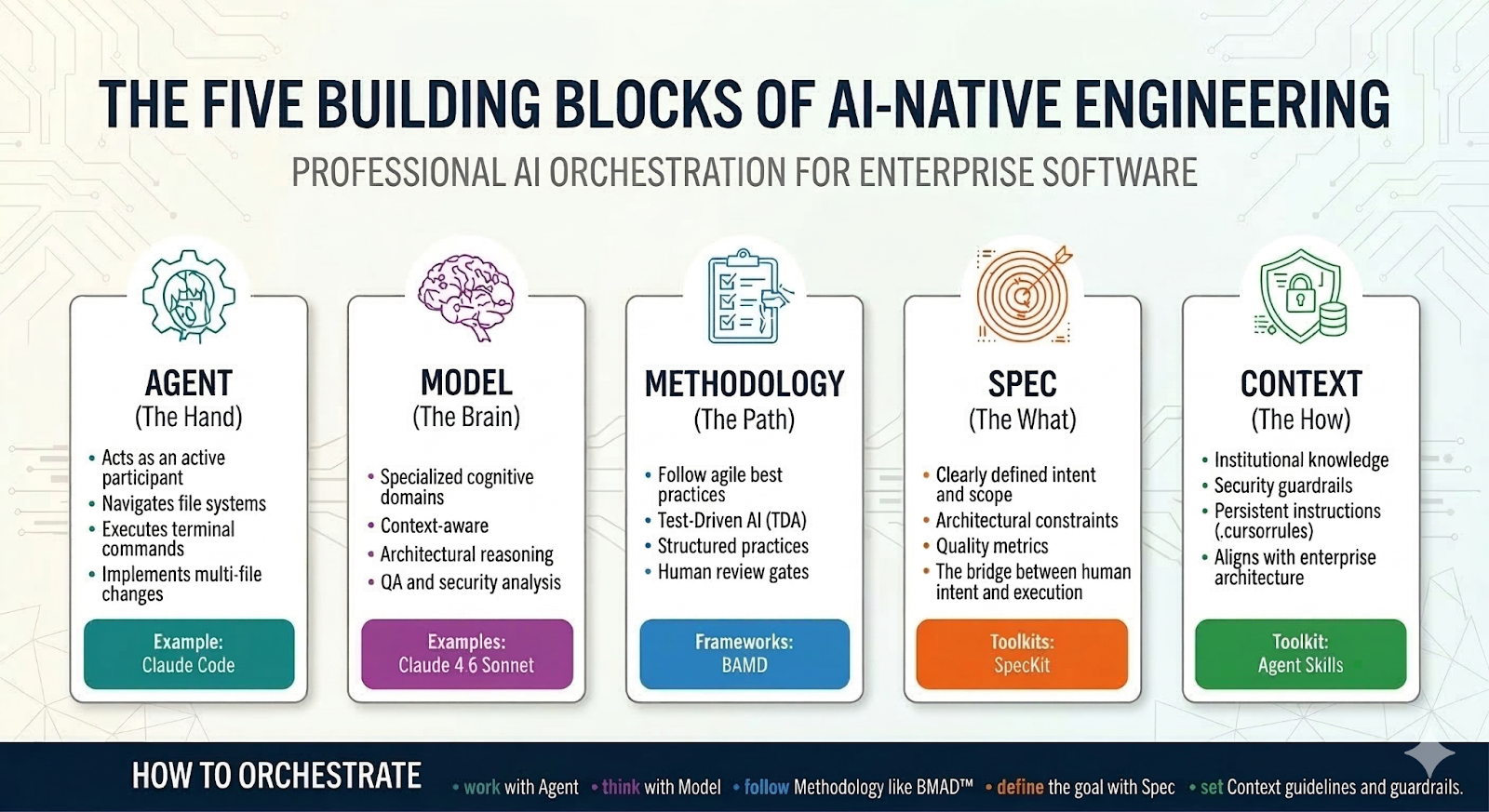

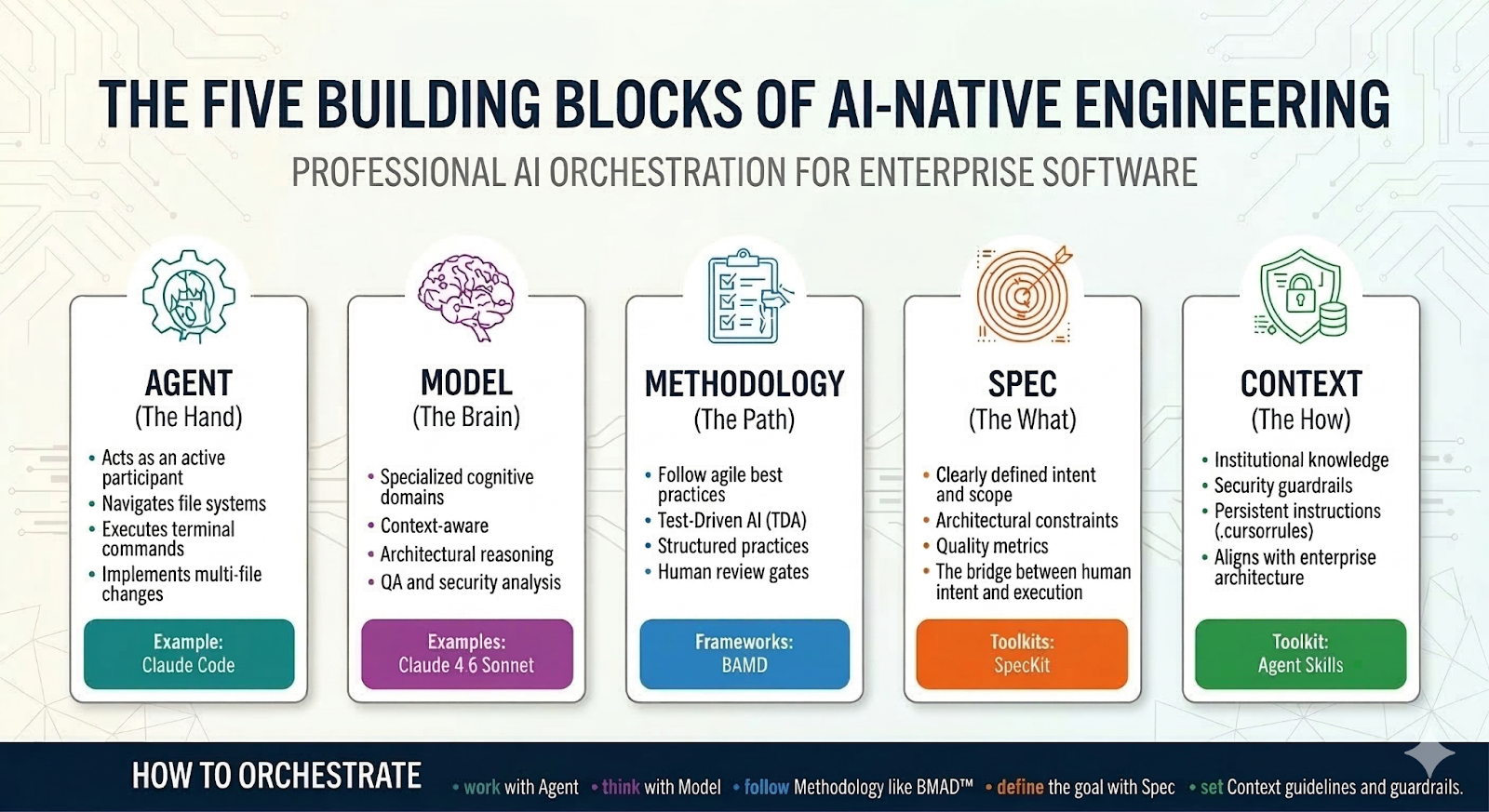

To build software effectively, you should be orchestrating. You pick an agent to do the work, a model to ‘think’, a methodology like BMAD™ to follow, a spec to define the goal, and context to set the guidelines and guardrails.

Whether you’re modernizing a legacy mainframe or building a greenfield cloud-native application, mastering these five core building blocks of the new engineering stack is essential to doing professional-grade work.

Choose your agent

The "agent" is the autonomous execution layer. It acts as an active participant in the development workflow, significantly surpassing basic reactive assistants.

Core competencies and functionality include:

Navigating and analyzing the file system. The agent interrogates the project's directory, analyzes architecture and understands component interdependencies.

Executing terminal commands. The agent installs dependencies (npm, pip), runs build scripts, manages source control (git) and performs diagnostics, so it controls the development environment directly.

Automated testing and verification. It initiates test suites (such as unit and integration) to validate code changes and uses the resulting data as iterative feedback.

Autonomous multi-file editing and refactoring. The agent implements complex changes across multiple files cohesively (e.g., refactoring class identifiers or updating API signatures) without direct human intervention.

Supervised autonomy. All operations are under human supervision; the agent works autonomously (on things such as bug resolution or implementing minor features), but its actions are submitted to the developer for formal review and final authorization (e.g., via a pull request).
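The competencies above can be pictured as a small feedback loop. The following is a minimal sketch, not how any particular agent is implemented: `propose_edit`, `run_tests`, `CheckResult` and `ProposedChange` are hypothetical names introduced for illustration, and the stub functions stand in for a real model call and a real test runner.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class CheckResult:
    passed: bool
    log: str


@dataclass
class ProposedChange:
    """A change the agent submits for review instead of merging it itself."""
    diff: str
    attempts: int
    test_log: str
    approved: bool = False  # only a human reviewer should flip this


def run_agent_task(
    propose_edit: Callable[[str], str],
    run_tests: Callable[[str], CheckResult],
    task: str,
    max_attempts: int = 3,
) -> Optional[ProposedChange]:
    """Edit, test, and feed failures back until tests pass or the budget runs out."""
    feedback = task
    for attempt in range(1, max_attempts + 1):
        diff = propose_edit(feedback)
        result = run_tests(diff)
        if result.passed:
            # Supervised autonomy: return a proposal, never an auto-merge.
            return ProposedChange(diff=diff, attempts=attempt, test_log=result.log)
        # The test output becomes the input for the next attempt.
        feedback = f"{task}\nPrevious attempt failed:\n{result.log}"
    return None  # budget exhausted: escalate to a human


# Stubs standing in for a model and a test suite (assumptions for the demo).
def fake_propose(feedback: str) -> str:
    return "fix-v2" if "Previous attempt failed" in feedback else "fix-v1"


def fake_tests(diff: str) -> CheckResult:
    if diff == "fix-v2":
        return CheckResult(True, "all tests passed")
    return CheckResult(False, "1 test failing")


change = run_agent_task(fake_propose, fake_tests, "Fix the login bug")
```

In this sketch the loop ends in one of two ways: a `ProposedChange` that still needs human approval, or `None` when the attempt budget is spent, which is the point at which a developer would step in.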

There are a number of popular agents available for software development, including:

Claude Code: Anthropic's heavy-hitter. It works only with Claude models.

OpenCode: An open-source, privacy-first, terminal-native agent ideal for local models and sensitive codebases. OpenCode works with a wide range of models, including self-hosted ones.

Cline: An open-source favorite for VS Code that offers granular control over tool-calling and file permissions.

Antigravity/Cursor/Windsurf: Specialized IDEs that treat the agent as a first-class citizen rather than a plugin.

Choosing the model

The foundational architecture of AI-driven systems in software development relies on a critical division of labor: the agent manages the execution of tasks and actions, while the model serves as the repository and processor of knowledge.

By 2026, the market has fragmented significantly. It has moved away from a 'one-size-fits-all' large general-purpose model. Instead, the industry is now characterized by highly specialized models, each optimized for a distinct set of cognitive tasks in the software development lifecycle. This specialization leads to better performance, efficiency and context-awareness in each model's domain.

This landscape includes, but isn't limited to:

Code generation models, optimized for syntactical correctness, idiomatic adherence to specific programming languages and complex logical structure generation, moving beyond mere boilerplate.

Architectural reasoning models, focused on evaluating high-level design patterns, microservice communication, scalability and security. They serve as a 'digital architect' assistant.

Test and quality assurance models, which specialize in generating comprehensive test cases (unit, integration, end-to-end), identifying potential edge cases and predicting failure points based on code changes.

Documentation and knowledge synthesis models, which are excellent at ingesting existing codebases, technical specifications and historical tickets to automatically generate up-to-date documentation, tutorials and context-aware summaries for onboarding new developers.

Security and vulnerability analysis models, trained specifically to recognise common and novel security flaws (e.g., the OWASP Top 10) during the coding and review process. These often operate as a mandatory pre-commit hook.

The successful software agent of the future must, then, be adept at orchestrating these specialized models, calling upon the most appropriate model (the knowledge source) for the specific action it needs to execute.

| Model | Strength | Best use case |
| --- | --- | --- |
| Claude 4.6 Sonnet | Adaptive thinking. | The current state of the art for complex agentic planning and large-scale code migration. |
| Gemini 3.1 Pro | Context window and code reasoning. | Large-scale codebase analysis, architectural thinking (2M+ tokens) and refactoring. |
| GPT 5.3 Codex | Raw reasoning and multi-modal understanding. | Solving hard algorithmic problems, "one-shot" bug fixes and interpreting UI/UX mockups for implementation. |
| GLM 5 | Cost-efficiency. | An open source model for high-volume boilerplate generation and unit testing. |
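The orchestration idea can be illustrated with a simple routing table. This is a sketch under stated assumptions: the model names come from the table above, while the task categories, the `pick_model` helper and the choice of fallback are hypothetical and are not a benchmark-backed recommendation.

```python
# Hypothetical task categories mapped to the models listed in the table above.
ROUTES = {
    "agentic_planning": "Claude 4.6 Sonnet",
    "codebase_analysis": "Gemini 3.1 Pro",
    "algorithmic_fix": "GPT 5.3 Codex",
    "boilerplate": "GLM 5",
}

# Assumption: unknown task types fall back to the cost-efficient option.
DEFAULT_MODEL = "GLM 5"


def pick_model(task_type: str) -> str:
    """Return the model an agent would call for a given kind of task."""
    return ROUTES.get(task_type, DEFAULT_MODEL)


print(pick_model("codebase_analysis"))
print(pick_model("write_changelog"))
```

Keeping the mapping in data rather than in code means a team could swap models as the market moves without touching the agent's logic.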

Choosing a methodology

To successfully integrate AI into the software development lifecycle, it's crucial to adopt a disciplined methodology that counters the inherent risks of autonomous agents. A major challenge is "agent thrashing," a phenomenon where an AI becomes trapped in an infinite or lengthy loop of self-correction, often fixing one generated error only to introduce a new one, leading to wasted compute resources and time.

To prevent this in professional, enterprise-grade development, we must move away from informal, open-ended generative interactions, often dubbed "chat-oriented programming" or "vibe coding." This unsystematic approach relies too heavily on conversational prompts and lacks the rigor needed for production-ready code.

Instead, the focus must be on establishing a continuous, high-integrity flow where AI-driven development is firmly anchored in established engineering discipline and best practices.

This involves:

Structured prompts and context (AI as the engineer). This requires detailed, structured inputs that define the scope, expected output, architectural constraints and quality metrics, rather than vague requests. The AI is assigned the specific role of a software engineer responsible for delivering code against clear specifications.

Integration with CI/CD (AI as the committer). This involves embedding the AI within the continuous integration and continuous delivery pipeline, where its outputs are immediately subjected to automated testing, linting, security scans and code reviews, ensuring rapid feedback and adherence to standards. The AI acts as a committer; its work is instantly validated by the system.

Test-driven AI (TDA) (AI as quality assurance). This means requiring AI agents to generate code alongside, or even based on, comprehensive unit and integration tests, making test coverage a prerequisite for successful code generation. The AI takes on the role of a QA specialist, ensuring functional correctness before delivery.

Version control and audit trails (AI as the documentarian). This means ensuring every AI-generated contribution is committed to a version control system with clear commit messages and traceability, so that human developers can audit and roll back changes. The AI serves as the documentarian, providing clear, auditable logs of its work.

Human oversight and vetting (human as the architect/reviewer). This means putting mandatory human review gates in front of AI-generated code, especially for critical sections, to ensure non-functional requirements (like performance, security and maintainability) are met. Human developers take the crucial role of lead architect and code reviewer, maintaining overall system integrity and alignment with strategic goals.

By systematically applying these engineering best practices, organizations can harness AI to accelerate development while maintaining the quality, stability and control essential for enterprise applications.
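Two of these ideas lend themselves to a short sketch: automated gates that every AI-generated change must pass, and a guard against agent thrashing. The code below is illustrative only. The gate names, the `run_gates` and `is_thrashing` helpers, and the heuristic itself (treating the reappearance of an earlier failure set as thrashing) are assumptions made for this example, and the lambda checks are toy stand-ins for a real linter and test runner.

```python
from typing import Callable, Dict, List, Tuple

# A gate takes a diff and returns (passed, message).
Check = Callable[[str], Tuple[bool, str]]


def run_gates(diff: str, gates: Dict[str, Check]) -> List[str]:
    """Run every gate and return the names of the ones that failed."""
    failures = []
    for name, check in gates.items():
        passed, _message = check(diff)
        if not passed:
            failures.append(name)
    return failures


def is_thrashing(failure_history: List[Tuple[str, ...]]) -> bool:
    """Heuristic: the latest set of failures has already been seen before.

    This catches both "no progress" (A, A) and oscillation (A, B, A), where
    fixing one error reintroduces an earlier one.
    """
    if not failure_history:
        return False
    return failure_history[-1] in failure_history[:-1]


# Toy gates standing in for a linter and a test suite.
gates: Dict[str, Check] = {
    "lint": lambda diff: ("TODO" not in diff, "no TODO markers allowed"),
    "tests": lambda diff: (diff.endswith("\n"), "file must end with a newline"),
}

clean = run_gates("x = 1\n", gates)
dirty = run_gates("x = 1  # TODO", gates)
```

A pipeline built this way would send a change to human review only when `run_gates` returns an empty list, and would stop the agent and escalate as soon as `is_thrashing` returns `True`.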

One such playbook is the BMAD Method, a methodology for Agile AI-driven development that simulates a multi-role software team through role-based agent orchestration. It uses a specialized loop of "plan-analysis-design-architect-dev-test" personas to ensure code is not just generated, but validated against architectural constraints and unit tests before human review. It focuses on reducing "hallucination drift" by requiring cross-agent consensus on system design before implementation begins.

Similarly, Thoughtworks AI/works™ supports legacy modernization, from reverse engineering and requirements enhancement through to spec-to-code generation, using the 3-3-3 delivery model: a concept in three days, a functional prototype in three weeks and a production-ready MVP in three months.

Prompt using specs

In the rapidly evolving landscape of agentic software development, the "spec to code" pipeline represents the critical bridge between human intent and autonomous execution. As AI agents become increasingly capable of writing, testing and deploying software, the bottleneck of development has shifted from raw coding to the precise articulation of requirements.

Ultimately, the effectiveness of an autonomous coding agent is directly proportional to the quality of its input specification. Therefore, mastering the translation of "spec to code" is no longer just an efficiency hack, but the foundational skill required to successfully navigate the future of AI-driven engineering.

Toolkits that support this spec-to-code workflow include:

SpecKit: Developed by GitHub, SpecKit is an open-source toolkit that brings structure to AI-assisted coding through spec-driven development. Using a simple CLI, it guides developers and AI agents through a rigorous five-step pipeline of writing specifications — constitution, specify, plan, tasks and implement — turning high-level requirements into production-ready code and eliminating chaotic "vibe coding." (See SpecKit: Master the Art of Spec-Driven Prompting.)

OpenSpec: Developed by Fission-AI, OpenSpec is a lightweight, open-source toolkit that brings spec-driven discipline to AI coding without heavy bureaucracy. Using plain markdown and native slash commands, it guides AI agents through a fast three-step workflow: proposal, apply and archive. It's especially powerful for safely modifying existing "brownfield" projects.

BMAD Quick Flow: The BMAD Quick Flow is a streamlined, three-step AI development framework optimized for rapid feature delivery. It transitions from raw requirements to a technical specification (quick-spec), immediate coding (quick-dev), and optional validation (code-review). It’s perfect for fast prototyping.

Read more about spec-driven development here.
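To make "quality of the input specification" concrete, here is a minimal sketch of a spec treated as structured data that is checked before an agent ever sees it. The `Spec` class and its field names are hypothetical; they are not the schema used by SpecKit, OpenSpec or BMAD, each of which defines its own format.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Spec:
    goal: str
    acceptance_criteria: List[str] = field(default_factory=list)
    constraints: List[str] = field(default_factory=list)

    def problems(self) -> List[str]:
        """List the gaps that would leave the agent guessing."""
        issues = []
        if not self.goal.strip():
            issues.append("goal is empty")
        if not self.acceptance_criteria:
            issues.append("no acceptance criteria")
        return issues

    def to_prompt(self) -> str:
        """Render the spec as markdown text for the agent."""
        lines = ["## Goal", self.goal.strip(), "", "## Acceptance criteria"]
        lines += [f"- {item}" for item in self.acceptance_criteria]
        if self.constraints:
            lines += ["", "## Constraints"]
            lines += [f"- {item}" for item in self.constraints]
        return "\n".join(lines)


vague = Spec(goal="")
precise = Spec(
    goal="Add password reset via email",
    acceptance_criteria=["Reset link expires after 30 minutes"],
    constraints=["Reuse the existing mailer module"],
)
```

Here `vague.problems()` reports both gaps, while `precise.problems()` is empty and `precise.to_prompt()` yields a prompt with explicit goal, criteria and constraints sections.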

Providing context

The final layer is providing the how through what's today called context engineering. This is the strategic curation of institutional knowledge and design principles provided to AI assistants to enforce enterprise standards. Rather than accepting generic code, developers inject a rich context containing specific design patterns, architectural blueprints and strict security guardrails.

By embedding structural guidelines and security protocols — such as OWASP mandates, authentication requirements and specific microservice architectures — directly into the AI's workspace, context engineering acts as a foundational constraint. It guides the AI on how to build software that isn't just functional, but inherently secure, scalable and aligned with organizational architecture.

Context engineering and Agent Skills equip the agent with domain-specific and architectural knowledge. (See Agent Skills with Anthropic (DeepLearning.AI).) Typical mechanisms include:

Rules and instructions. Using AGENTS.md or .cursorrules files to provide persistent instructions like "Always use Tailwind CSS" or "Follow the Hexagonal Architecture pattern."

Security guardrails. Integrating automated security policies and "never-allow" rules (such as security_auditor.skill) can help prevent the introduction of common vulnerabilities, secret leakage or insecure dependency patterns.

Design systems and architecture. High-level architecture guidelines can help ensure the AI-generated output adheres to your brand and system design.

Thoughtworks AI/works™ Context Integration. These are advanced capabilities for automated context harvesting from enterprise codebases, ensuring models understand intricate system dependencies and domain-specific logic. (See the AI/works™ Technical Guide.)
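As a rough illustration of rules and guardrails working together, the sketch below prepends persistent project rules to a task and scans generated code against a few "never-allow" patterns. Everything here is an assumption made for the example: the `build_context` and `violations` helpers are hypothetical, the rules text stands in for the contents of a file such as AGENTS.md, and the three regular expressions are a small illustrative set, not a complete secret-detection policy.

```python
import re
from typing import List

# Illustrative "never-allow" rules; a real policy would be far broader.
NEVER_ALLOW = {
    "aws-access-key": re.compile(r"AKIA[0-9A-Z]{16}"),
    "hardcoded-password": re.compile(
        r"password\s*=\s*['\"][^'\"]+['\"]", re.IGNORECASE
    ),
    "private-key": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}


def build_context(rules_text: str, task: str) -> str:
    """Put the persistent project rules ahead of the task in every prompt."""
    return f"# Project rules\n{rules_text.strip()}\n\n# Task\n{task.strip()}\n"


def violations(generated_code: str) -> List[str]:
    """Return the names of the never-allow rules the generated code breaks."""
    return [
        name
        for name, pattern in NEVER_ALLOW.items()
        if pattern.search(generated_code)
    ]


rules = "Always use Tailwind CSS\nFollow the Hexagonal Architecture pattern\n"
context = build_context(rules, "Add a login form")
found = violations('db_password = "hunter2"')
```

In this sketch a non-empty result from `violations` would block the change before it reaches review, while `build_context` ensures the same standing instructions accompany every task.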

The new engineering stack

In essence, software development with AI shifts from vibe coding to thoughtful orchestration. Success lies in the deliberate combination of the right agent, the most suitable model, a proven methodology like BMAD™, a precise spec, and well-defined context. This deliberate composition is the key to building effective software in the age of AI.

Disclaimer: The statements and opinions expressed in this article are those of the author(s) and do not necessarily reflect the positions of Thoughtworks.