Quality strategy is integral to product success

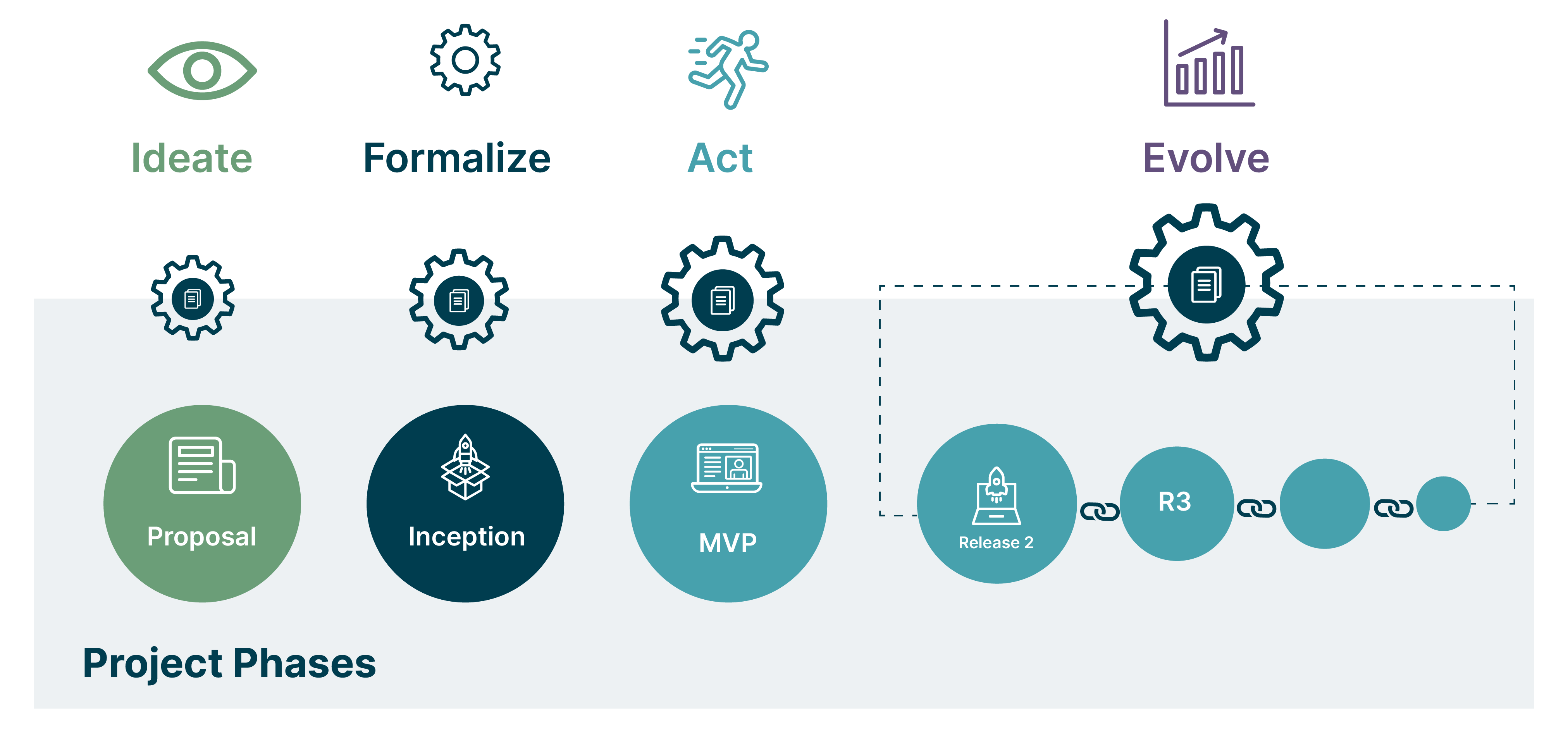

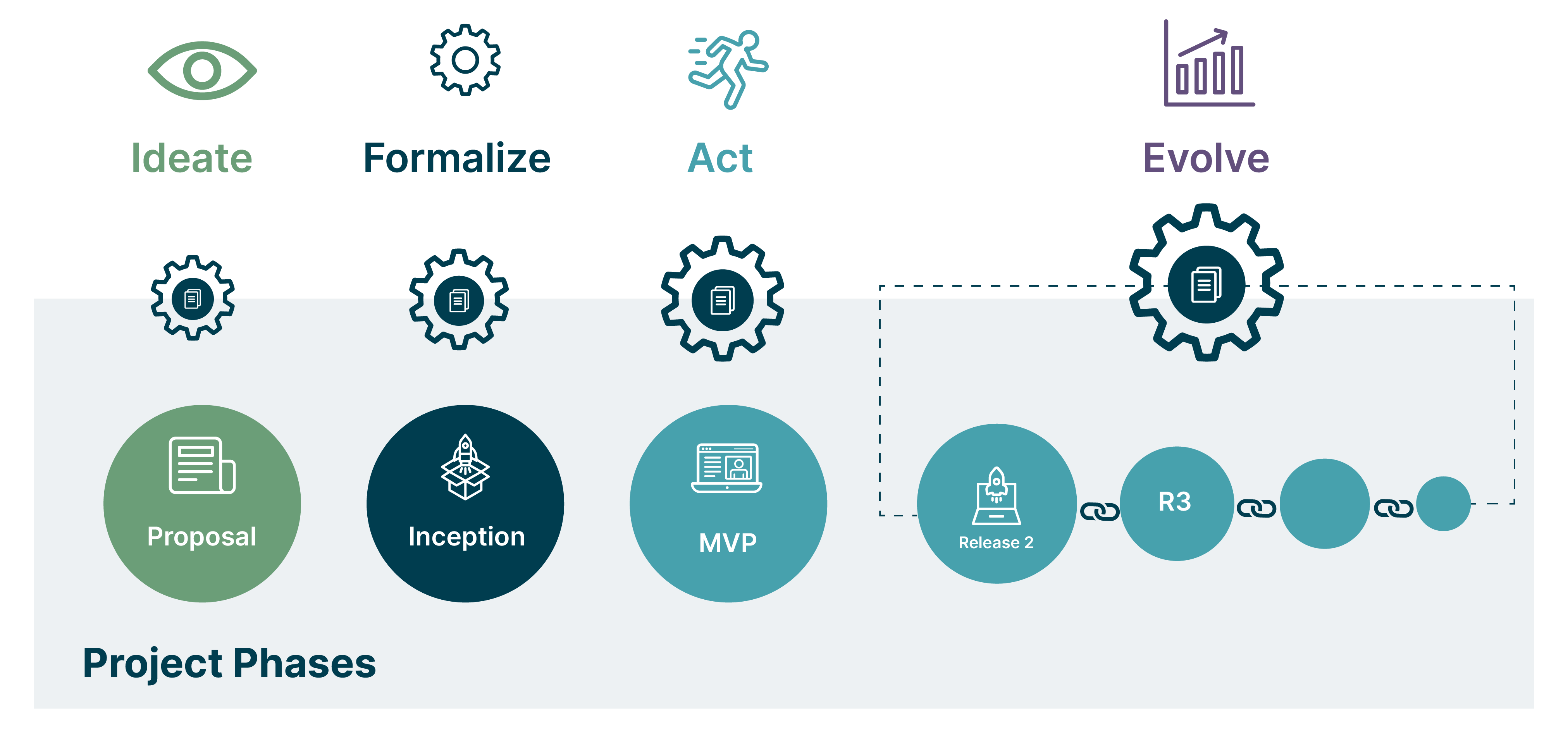

As a consultancy, most of our engagements involve delivering a bespoke product or transforming software practices and processes. To consistently exceed our customers’ expectations, we believe quality must be an integral part of those changes. That means we need a quality strategy that details our test approach for developing and delivering a high-quality product to our customers.

And therein lies the challenge: the times we start a project where the complete test approach is crystal clear are rarer than hens’ teeth. The strategy evolves as we get deeper into the context and learn about the business and the product.

The principles of a robust quality strategy

Given our quality strategy cannot be set in stone at the outset — but rather has to evolve with the project — we need some guiding principles that increase our chances of success. We define these as follows:

The quality strategy artefact should reflect the approach to quality that the team is actually following. Hence, it is important that everyone buys into these decisions. This helps avoid clashes and siloed ways of working.

The strategy should explain “why we are doing what we are doing”, which helps drive meaningful conversations towards an outcome. When someone joins the team, it is vital for them to go through the artefact and understand the value behind our approaches.

Usually, whoever wears the quality hat in the team is responsible for updating the strategy document, but everyone in the team has to contribute to the decisions.

Just like an Architecture Decision Record, the quality strategy should record decisions whenever they are made.

Evolution is key here; drafting the strategy gets the engagement off to a good start, but unless it keeps evolving, the document goes stale over time and people may forget it even exists.

The document needs to be visible, not just to the team but also to the stakeholders and the business, to build confidence in our testing approach.

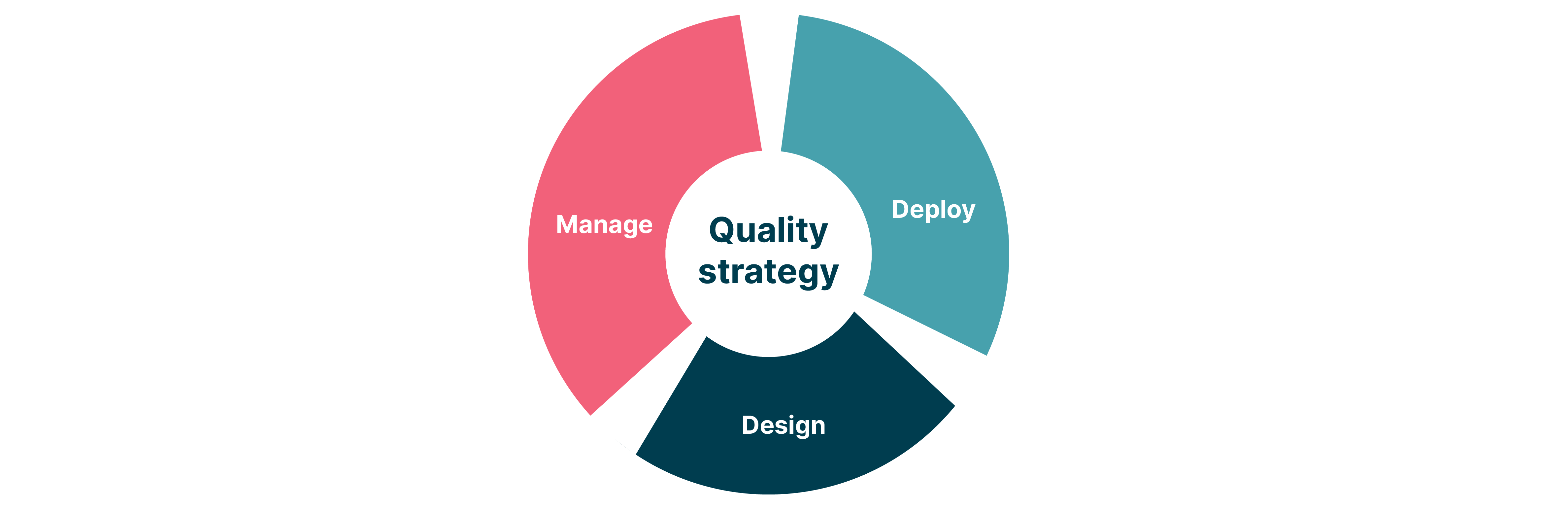

Quality strategy: the essential heuristics

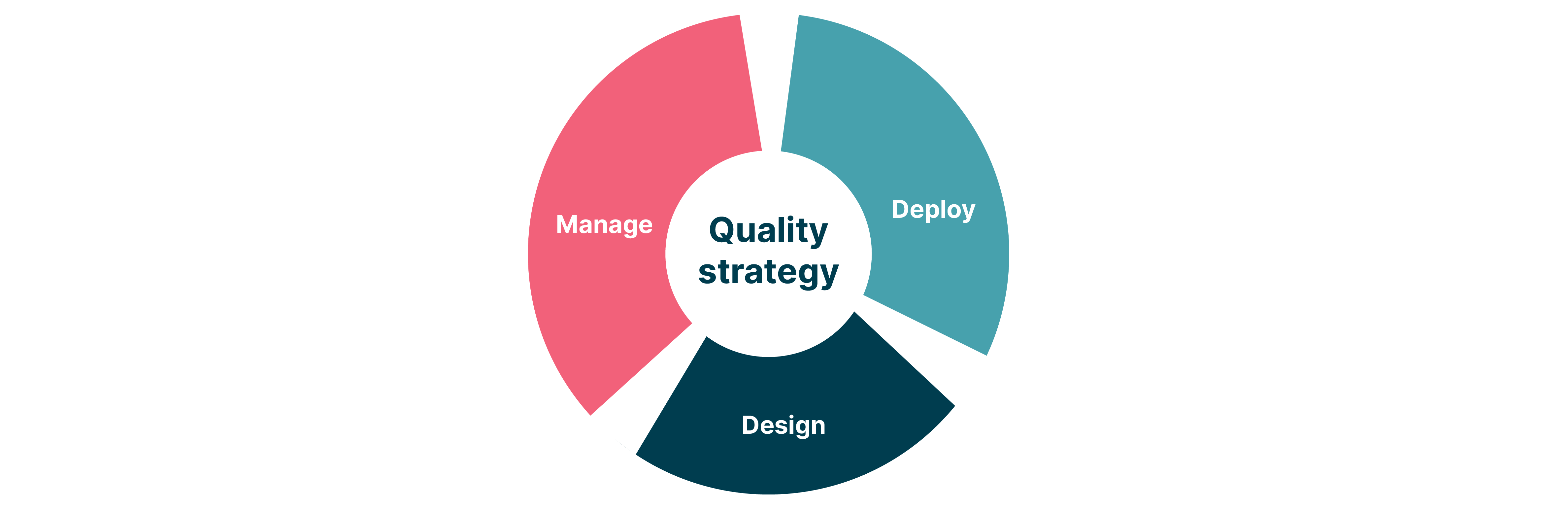

A quality strategy can cover a wide range of areas. Here, I classify them into three broad areas, in no particular order.

Manage

Vision

Capture the team’s vision statement for the product. It is important that the business vision and the testing vision are aligned.

Test coverage

It is important to set expectations of what’s in scope and out of scope for testing from both a program and a task perspective. Make these visible to the various stakeholders. This helps in clearly defining the risks, gaps and responsibilities between different teams and associated systems.

Dependencies

Through various architectural and business conversations, identify and document dependencies that will directly or indirectly impact your team. These could be technical, system, process or any other dependencies which may impact development and testing.

Number of people required for testing

In large enterprises where complex systems are being developed, there may be a need for specific people to focus on different testing activities. The strategy may need to capture the number of people required so that cost and investment can be estimated.

Metrics and reporting

The business vision is vital to identifying the metrics we would like to report. Some of the metrics can be empirical (e.g. number of registrations) and some non-empirical (e.g. customer satisfaction). There could also be internal team metrics such as the four key metrics. These metrics can serve as a baseline for understanding where to focus testing to improve the quality of the application.

Definition of done

To most organizations, the definition of done could be a working feature deployed and delivered to end users. For others, it could also be the exit criteria for moving tasks from one stage to another before production deployment. Document what definition of done means for your team and set those expectations.

Responsibility for writing and maintaining tests

Though we like to work as a team, once we identify the types of tests to be written, it can help if we make the accountability and responsibility explicit in the document, even if we later adapt as required.

Defect management process and lifecycle

This is about the team’s understanding of handling defects, including raising, prioritising and fixing them. Also decide on the stages and ceremonies a defect would go through for completion.

Assumptions

Together with the stakeholders, capture all the assumptions on which the testing is based. Validate the assumptions periodically.

Glossary

Capture all the terminology, from both a technical and a business perspective, for easy reference. This is useful for any new joiners on the team.
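To make the “four key metrics” mentioned under Metrics and reporting a little more concrete, here is a minimal sketch of how a team might summarise them for a reporting period: deployment frequency, lead time for changes, change failure rate and time to restore service. The `Deployment` record shape and the `four_key_metrics` function are hypothetical names invented for this illustration; a real team would more likely pull this data from its own pipeline and incident tooling.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean
from typing import Optional


@dataclass
class Deployment:
    """One production deployment (hypothetical record shape, for illustration)."""
    committed_at: datetime                  # when the change was committed
    deployed_at: datetime                   # when it reached production
    failed: bool = False                    # did it cause a failure in production?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def _hours(delta):
    """Convert a timedelta to hours as a float."""
    return delta.total_seconds() / 3600


def four_key_metrics(deployments, period_days):
    """Summarise the four key metrics over a reporting period of `period_days`."""
    if not deployments or period_days <= 0:
        raise ValueError("need at least one deployment and a positive period")

    failures = [d for d in deployments if d.failed]
    lead_times = [_hours(d.deployed_at - d.committed_at) for d in deployments]
    # Only failures that have already been resolved contribute to restore time.
    restore_times = [
        _hours(d.restored_at - d.deployed_at)
        for d in failures
        if d.restored_at is not None
    ]

    return {
        "deployments_per_day": len(deployments) / period_days,
        "mean_lead_time_hours": mean(lead_times),
        "change_failure_rate": len(failures) / len(deployments),
        "mean_time_to_restore_hours": mean(restore_times) if restore_times else None,
    }
```

Averages are used here only to keep the sketch short; a team may prefer medians or percentiles, and that choice is exactly the kind of decision worth recording in the strategy.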

Design

Types of testing

Ideally, an architecture diagram would help identify the different types of functional tests required, such as unit, service / component, contract, integration, system (end to end) and exploratory tests.

Have a discussion with representatives from various functions to understand and prioritize cross-functional requirements such as security, performance, accessibility, usability and 30+ other ‘ilities’.

Test data

Document the way test data will be processed and used across various environments. Identify whether data protection policies allow the use of production data or require synthetic data. Data flow between different services may affect upstream and downstream systems. In large programs with external dependencies, it’s also important to understand who will provide what sort of data, and when.

Tools selection

Choose your tools for test automation, test management and defect management, and document the decisions behind them in the strategy artefact. It is advisable to have the test tools and developer tools use the same language for seamless working and skills transfer.
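Where data protection policies rule out production data, the synthetic option from the Test data item can start very small. The following is a sketch only: the `Customer` shape, the name lists and the `synthetic_customers` function are all assumptions made for this example. It uses a seeded generator so every environment gets the same repeatable records, and the reserved `.test` domain so no real email address is produced.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Customer:
    """Hypothetical customer record used only for this illustration."""
    customer_id: int
    name: str
    email: str


# Small, obviously fake name pools; a real project would agree its own.
FIRST_NAMES = ["Ada", "Grace", "Alan", "Edsger"]
LAST_NAMES = ["Lovelace", "Hopper", "Turing", "Dijkstra"]


def synthetic_customers(count, seed=42):
    """Generate `count` repeatable fake customers from a fixed seed."""
    rng = random.Random(seed)  # local generator, so global random state is untouched
    customers = []
    for customer_id in range(1, count + 1):
        first = rng.choice(FIRST_NAMES)
        last = rng.choice(LAST_NAMES)
        # The id in the address keeps emails unique even when names repeat.
        email = f"{first}.{last}.{customer_id}@example.test".lower()
        customers.append(Customer(customer_id, f"{first} {last}", email))
    return customers
```

Calling `synthetic_customers(100)` on a laptop and in a shared test environment yields the same hundred records, which makes failures easier to reproduce across the path to production.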

Deploy

Tests to be run on environments

Sketch out a path to production and map the types of tests you would run in each of these environments, right from your local machines to production.

Infra configurations on different environments

Often, functionality fails in one environment because its configuration differs from the others, for example when it does not point to the correct database or fetches a different version of style objects. It is therefore essential to document how this risk will be mitigated.

Testing migrations

If your product development requires data migrations, think of ways to test the migration and its impact on other dependent services. Migration testing may also include testing for backward and forward compatibility.

Testing your deployment process

Have a backup plan ready in case something goes wrong with deployments to downstream environments. Think about ways to bring your system back to a usable state as quickly as possible after a deployment, through rollbacks and roll-forwards.

Monitoring

Monitoring could come in various forms such as monitoring the hardware and services. Security and performance of the system can also be monitored. Identifying the right parameters to be monitored is as important as monitoring them
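One possible mitigation for the configuration discrepancies described under Infra configurations is an automated comparison between environments. The sketch below is an assumption-laden illustration rather than a recommended tool: `config_drift`, the `MISSING` placeholder and the example keys are hypothetical, and configurations are assumed to be available as flat dictionaries with string keys.

```python
MISSING = "<missing>"  # placeholder shown when a key is absent from one environment


def config_drift(reference, candidate, ignore=()):
    """Return {key: (reference_value, candidate_value)} for settings that differ.

    Keys listed in `ignore` are expected to differ between environments
    (for example a database URL) and are skipped.
    """
    drift = {}
    for key in sorted(set(reference) | set(candidate)):
        if key in ignore:
            continue
        ref_value = reference.get(key, MISSING)
        cand_value = candidate.get(key, MISSING)
        absent = key not in reference or key not in candidate
        if absent or ref_value != cand_value:
            drift[key] = (ref_value, cand_value)
    return drift


if __name__ == "__main__":
    # Hypothetical settings, loosely based on the examples in the text above.
    staging = {"db_url": "postgres://staging-db/app", "style_version": "2.3.0", "feature_x": True}
    production = {"db_url": "postgres://prod-db/app", "style_version": "2.1.0"}
    for key, (ref, cand) in config_drift(staging, production, ignore={"db_url"}).items():
        print(f"{key}: staging={ref!r} production={cand!r}")
```

Running a check like this in the pipeline, and listing the intentionally different keys in the strategy, makes the expected differences explicit and the unexpected ones visible.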

This list may not be exhaustive, but it should be a good starting point for thinking about the different areas to cover while writing a quality strategy. Think about which of these would have the biggest impact or make the biggest change, and prioritize them accordingly.

Note: Usually these strategy artefacts are pretty long documents either in a Word document or in a slide deck. There’s nothing wrong with those formats as long as the team is happy. But in case you fancy creating a one page holistic quality strategy, here is another way of looking at it.

Disclaimer: The statements and opinions expressed in this article are those of the author(s) and do not necessarily reflect the positions of Thoughtworks.