I recently joined Thoughtworks' Chief Scientist Martin Fowler and senior engineering leaders from some of the world’s largest technology companies for an unconference in Utah. The setting was deliberate; nearly 25 years ago, another group of technologists gathered in those same mountains to write the Agile Manifesto. We came back to wrestle with the next seismic shift: AI-native software development.

Across all our conversations, a clear pattern emerged: well-established practices that have guided software development are now under strain as AI models and tools become more capable.

This matters to technology leaders because it changes where rigor lives in the development lifecycle. The consequences are wide-ranging: security and software quality, employee morale and burnout, and customer satisfaction.

Engineering rigor does not disappear when AI generates code. It relocates and becomes more important than ever.

I discussed some of my reflections in a recent article, but below you can find what I see as the key takeaways for business and technology leaders.

What is relocating and why it matters

One clear signal from the retreat is that when AI generates code it doesn’t remove the need for rigor; that need simply moves elsewhere.

For instance, addressing quality in the future may be less a matter of line-by-line code review and more one of tiering risk, clarifying specifications and verifying correctness.

This may mean that the work software engineers do changes in kind. During the retreat, the group located this new work in the ‘middle loop’ and described it as ‘supervisory engineering’, in which developers are responsible for orchestrating and governing agentic systems.

What is 'middle loop work'?

Work between writing code and delivering to production that includes:

- Managing agents

- Calibrating trust in generated content

- Task decomposition

- Maintaining architectural coherence

Although much of the discussion at the retreat was future-oriented, there was agreement that existing and well-established practices will become even more important. In fact, there appears to be a trend toward rediscovering practices like test-driven development, pair programming and trunk-based development. There was a feeling across the group that such practices can help solve some of the challenges of handling code written by agents, such as nondeterminism and unpredictability.

For leadership, then, the lesson should be clear: ensure AI adoption is done with rigor and attention to issues of quality and security. This is borne out in real-world research and data: the 2025 DORA report highlights that AI is an amplifier of both what’s good and bad; success, the report tells us, requires robust foundational engineering practices.

The bottleneck in delivery is changing

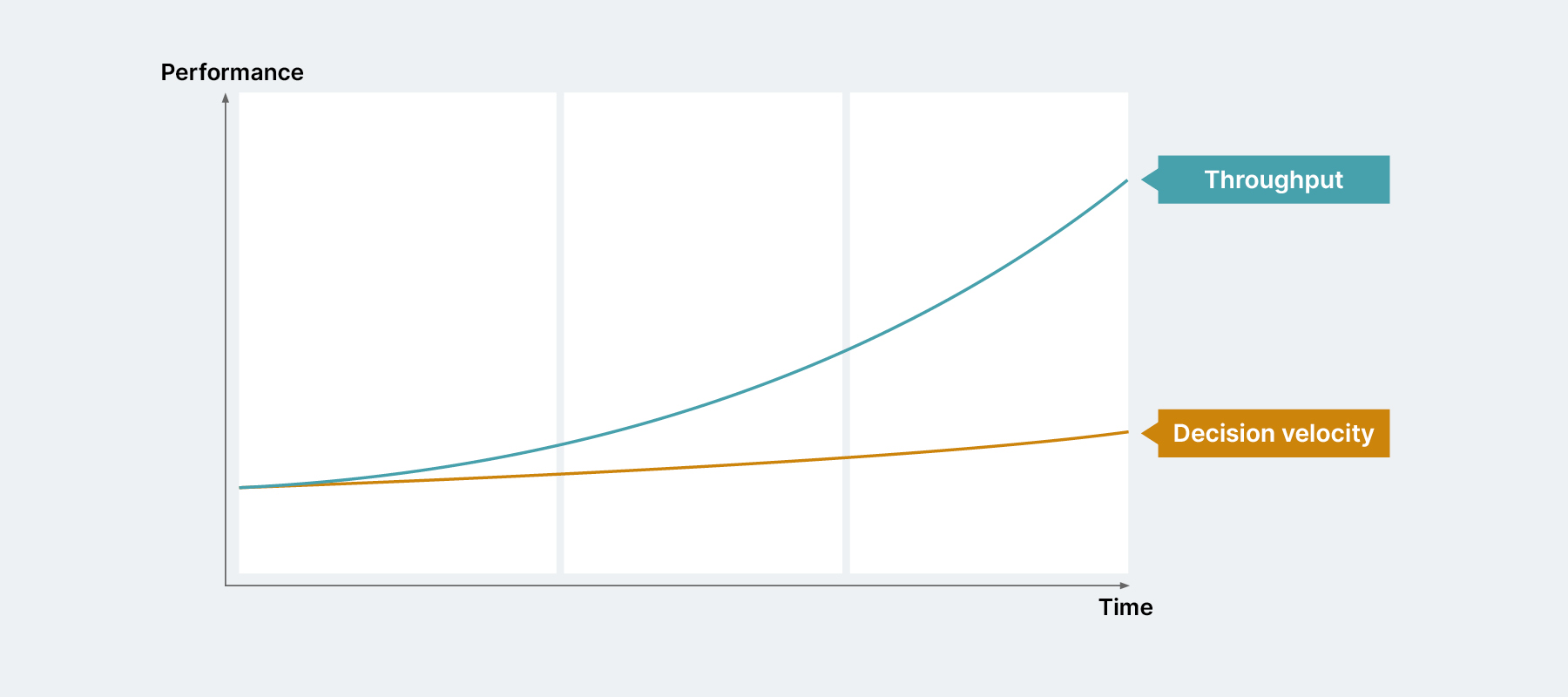

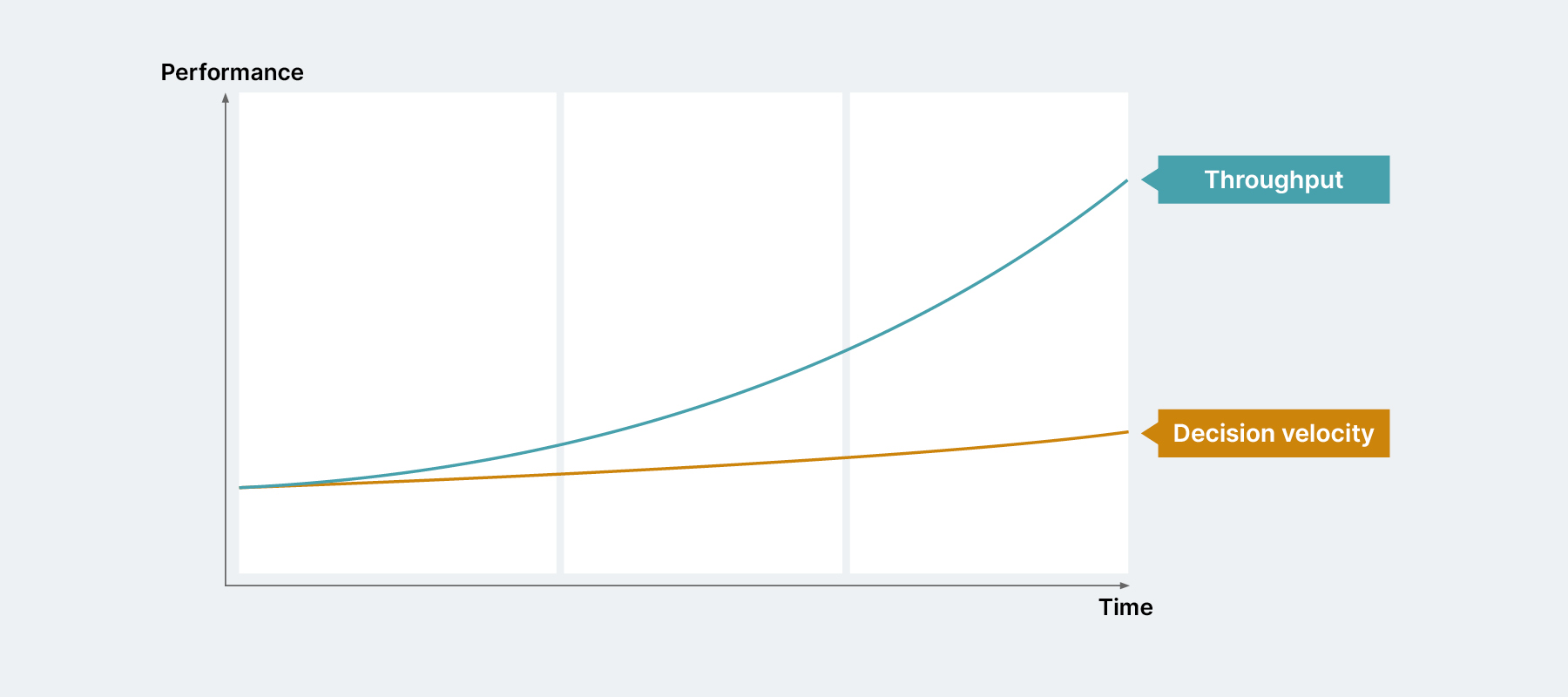

Traditional bottlenecks were about engineering capacity. That is shifting. Teams with AI tools are often able to clear backlogs quickly only to encounter bottlenecks in decision making, governance and architectural alignment.

Throughput increases; decision velocity does not. That creates frustration rather than acceleration. This shift means leaders need to look at governance processes and operating rhythms with fresh eyes.
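One way to picture this is to treat delivery as a chain of stages whose overall throughput is capped by the slowest one. The sketch below is a deliberately simple illustration of that idea; the stage names and weekly capacities are invented for the example and are not data from the retreat or from any client.

```python
# Hypothetical weekly capacities (work items per week) for each delivery stage.
# Stage names and numbers are illustrative assumptions, not measured data.

def system_throughput(capacities: dict[str, float]) -> tuple[str, float]:
    """Return the bottleneck stage and the throughput it allows.

    A simple serial pipeline can deliver no faster than its slowest stage.
    """
    bottleneck = min(capacities, key=capacities.get)
    return bottleneck, capacities[bottleneck]


before_ai = {"decide": 12, "code": 10, "review_and_govern": 14, "release": 15}

# Assume AI tools quadruple coding capacity and change nothing else.
with_ai = {**before_ai, "code": 40}

print(system_throughput(before_ai))  # ('code', 10)
print(system_throughput(with_ai))    # ('decide', 12)
```

In this toy model, a fourfold increase in coding capacity lifts delivery from 10 to 12 items a week, because the constraint has moved to decision making.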

Could productivity and developer experience diverge?

One of the more uncomfortable observations from our discussions was that developer productivity and developer experience may no longer move in the same direction. For a long time, we assumed they were tightly linked. Give engineers better tools, less friction, more autonomy and you get better outcomes; that assumption is now under pressure.

Writing code used to be a primary bottleneck; now, thanks to AI, constraints have moved in two directions:

- Upstream in decision-making about what we should build and how.

- Downstream in delivery and operations.

Why this creates a worse experience for developers

From a developer’s perspective, the job starts to feel more fragmented and constrained:

- Faster creation, slower decisions.

- More output, more queuing.

- Increased cognitive load.

- System complexity becomes more visible.

- More governance, poorly integrated.

In turn, this raises important questions for leaders.

Ask yourself:

What needs to change

The response is not to optimize coding further; we need to rebalance the entire system.

- Optimize for system throughput, not coding speed.

- Treat decision-making as a first-class engineering problem.

- Strengthen both upstream and downstream systems.

These three things should inform how we approach developer experience in an increasingly agentic world.

An opportunity to embed better governance and security

This is also where we have the opportunity to implement governance and security effectively, by embedding them properly in engineering context and AI guardrails.

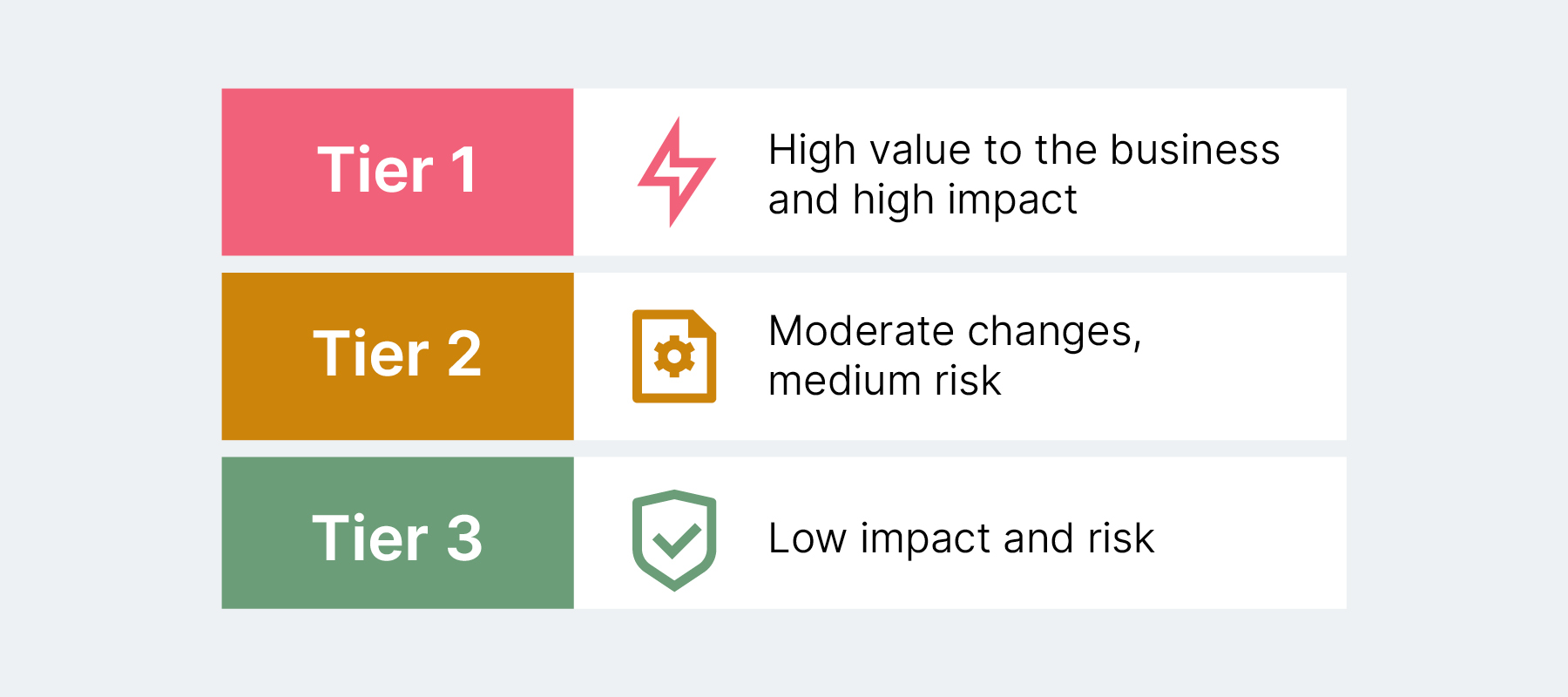

This goes hand-in-hand with treating decision making as a first-class engineering concern. Together they demand:

- Clear policies, not ambiguous guidelines.

- Role-based guardrails, not one-size-fits-all controls.

- Automated approvals where risk is low.

- Transparent escalation paths where it is not.
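To make these four demands concrete, here is a minimal sketch of what a guardrail could look like when written as code rather than as a guideline. Everything in it is an assumption made for illustration: the risk tiers, the role names, the `touches_secrets` flag and the `route` function are hypothetical, not part of any Thoughtworks platform or existing tool.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


class Decision(Enum):
    AUTO_APPROVE = "auto_approve"
    HUMAN_REVIEW = "human_review"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class Change:
    author_role: str               # hypothetical roles: "agent", "engineer"
    risk: Risk                     # assigned by an upstream risk-tiering step
    touches_secrets: bool = False  # hypothetical hard-stop condition


# Role-based guardrail (assumed values): the highest risk tier each role
# may ship without a human reviewer.
AUTO_APPROVE_CEILING = {"agent": Risk.LOW, "engineer": Risk.MEDIUM}


def route(change: Change) -> Decision:
    """Apply an explicit policy: auto-approve low risk, escalate high risk."""
    # Transparent escalation path: high-risk or secret-touching changes
    # always go to a named escalation route.
    if change.touches_secrets or change.risk is Risk.HIGH:
        return Decision.ESCALATE
    # Automated approval where risk is within the role's ceiling.
    ceiling = AUTO_APPROVE_CEILING.get(change.author_role)
    if ceiling is not None and change.risk.value <= ceiling.value:
        return Decision.AUTO_APPROVE
    # Everything else, including unknown roles, gets a human review.
    return Decision.HUMAN_REVIEW


print(route(Change("agent", Risk.LOW)))     # Decision.AUTO_APPROVE
print(route(Change("agent", Risk.MEDIUM)))  # Decision.HUMAN_REVIEW
print(route(Change("engineer", Risk.HIGH))) # Decision.ESCALATE
```

The point of the sketch is that the policy is unambiguous and testable: anyone can read the ceiling table, and unknown roles fall back to human review rather than slipping through.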

Despite the change we’re currently experiencing, we must not forget the foundations of building safe, secure and resilient systems. The way we think about developer experience should allow developers to take advantage of AI while preserving the long-standing testing practices throughout the SDLC that we know underpin good-quality software systems.

Staying in touch with our thinking

Our thinking on these shifts continues to evolve and we share it in several places that may be of interest:

Every fortnight our Thoughtworks Technology Podcast explores technology trends with senior practitioners and leaders. A recent episode explores our new Agentic Development Platform AI/works™.

Lots of people have been asking questions about the thinking and technology behind it. My colleagues Bharani Subramaniam and Shodhan Sheth do a great job of digging into the details. We feature the podcast on the website but you can get it wherever you usually listen to podcasts including Spotify and Apple Podcasts.

In January we published Looking Glass 2026, a broader perspective on emerging technology patterns and what they mean for business and technology leaders. That report expands on themes of structural change in software delivery and organizational design.

On the subject of sharing knowledge, we’re preparing to publish volume 34 of the Thoughtworks Technology Radar.

We publish the Radar every six months. That might seem like a lot, but it's worth it to gain a snapshot of the technologies and practices we're seeing on the ground in our work with clients. Because it draws on that work, the Radar provides a considerable level of detail and granularity. It's not a trend report and it's not even about predicting the future; it's a snapshot of what we are seeing now.

Finally, I’d like to flag a new article by my colleagues Danilo Sato and Tiankai Feng. Danilo and Tiankai are both leaders in data and AI. In the piece, they explore how human and machine collaboration has evolved over the last year as organizations have adopted agents. The first in a planned series, it’s a useful guide for leaders currently thinking through the opportunities of agentic systems.

All these resources can guide and inform conversations taking place across your organization. Given the rapid pace of change, anticipating the questions likely to matter in the months ahead is valuable: that’s precisely why we share our thinking and experiences with others in the industry.

If you would like to dive deeper into any of the topics discussed please reach out to us. We would be happy to arrange a workshop or session for you and your team with one of our senior technologists.

Why I am writing this

The pace of change in software delivery is intense; no single organization has all the answers. What I’ve found valuable in this moment are grounded conversations about where systems are breaking and where new ones are forming.

This newsletter will share firsthand patterns, leadership questions and the implications that need consideration. It will not offer hasty answers; it will, however, help leaders see what is emerging in the field.

I hope these reflections are useful to you as we, as leaders, adapt to a constantly changing environment.

Rachel Laycock, Chief Technology Officer

Thoughtworks