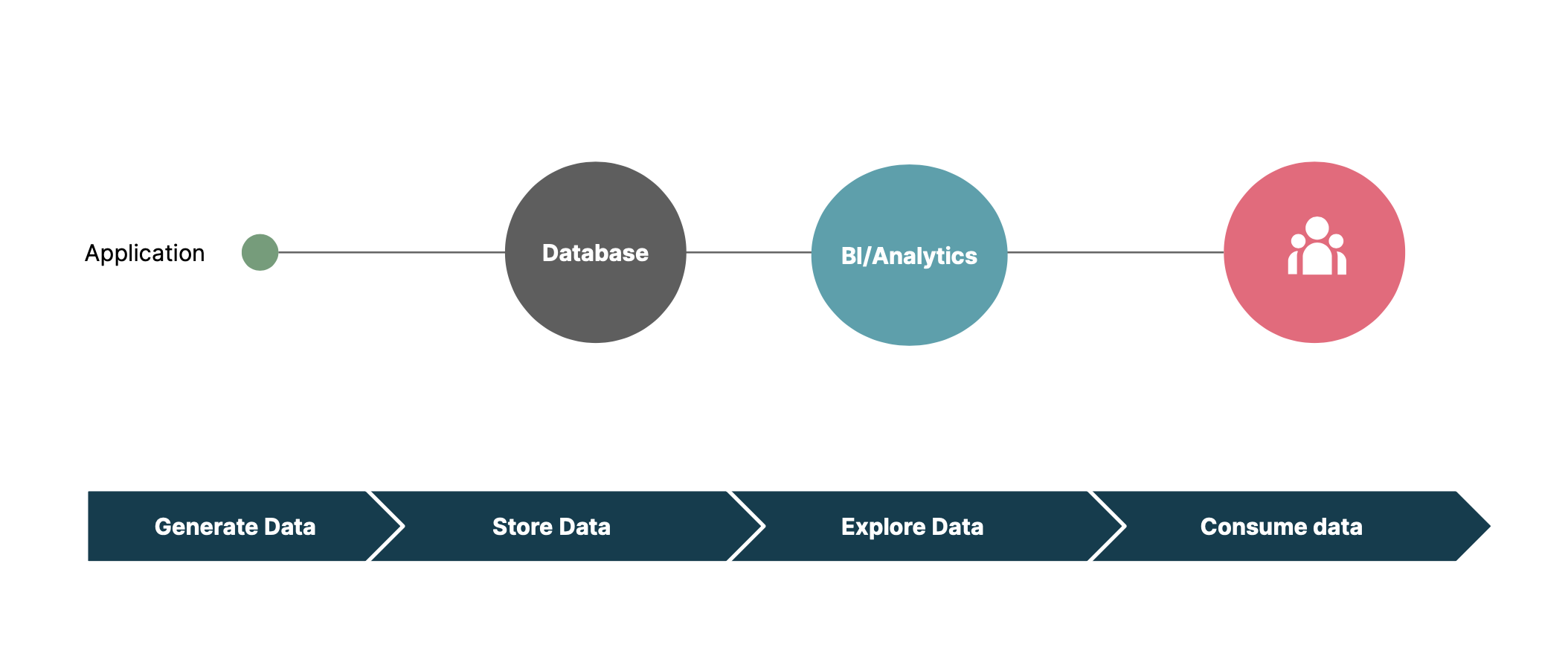

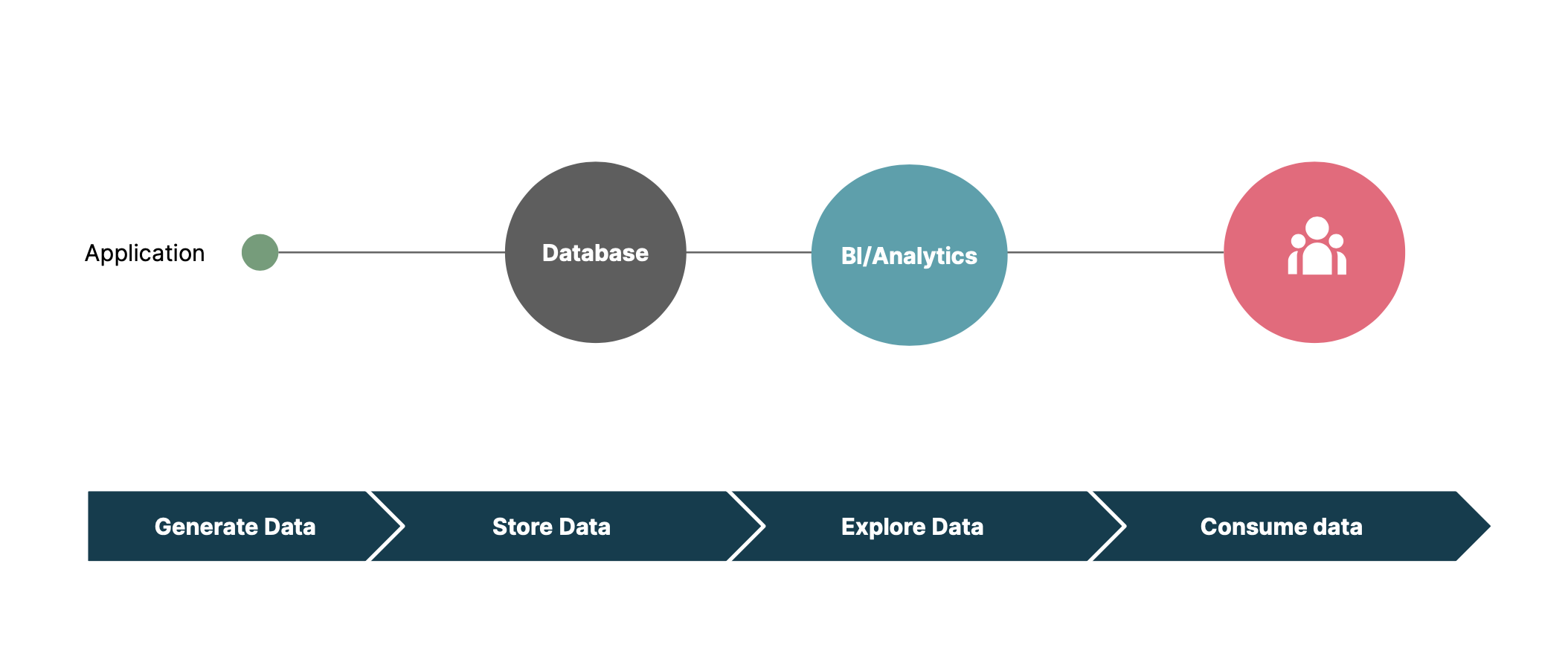

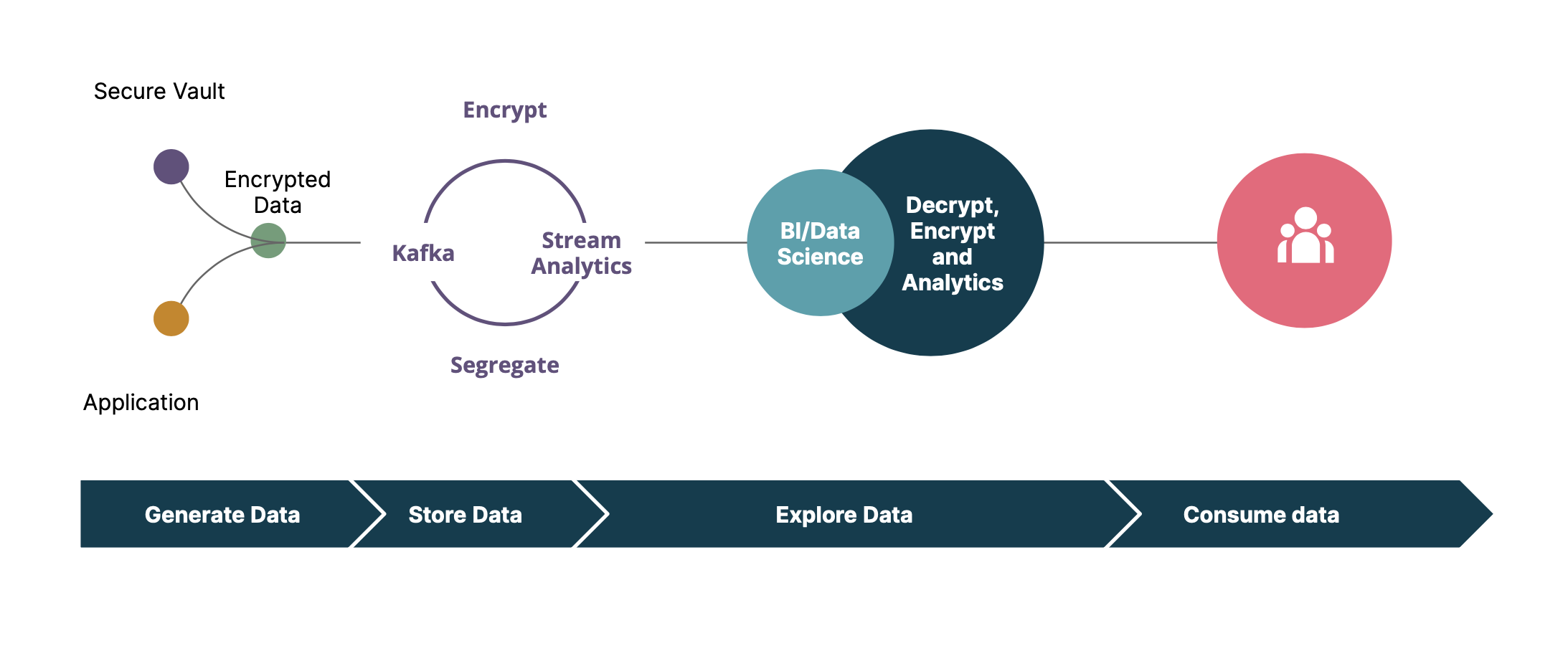

At Thoughtworks, we're seeing a growing shift toward event-driven architecture (EDA). When organizations first start migrating to EDA, many analytics implementations may look like the following:

At this stage, the focus is generally on ease of access and implementation, to save time and effort. Most of the time, sensitive data is not encrypted, or it is accessible to personas with full access permissions: for example, DBAs, system/application admins, data-agnostic business users and long-time employees. Because data is accessed by a known group of users in a controlled environment, it is not uncommon to rely on trust-based data access and protection. However, this approach may breach legal and compliance requirements.

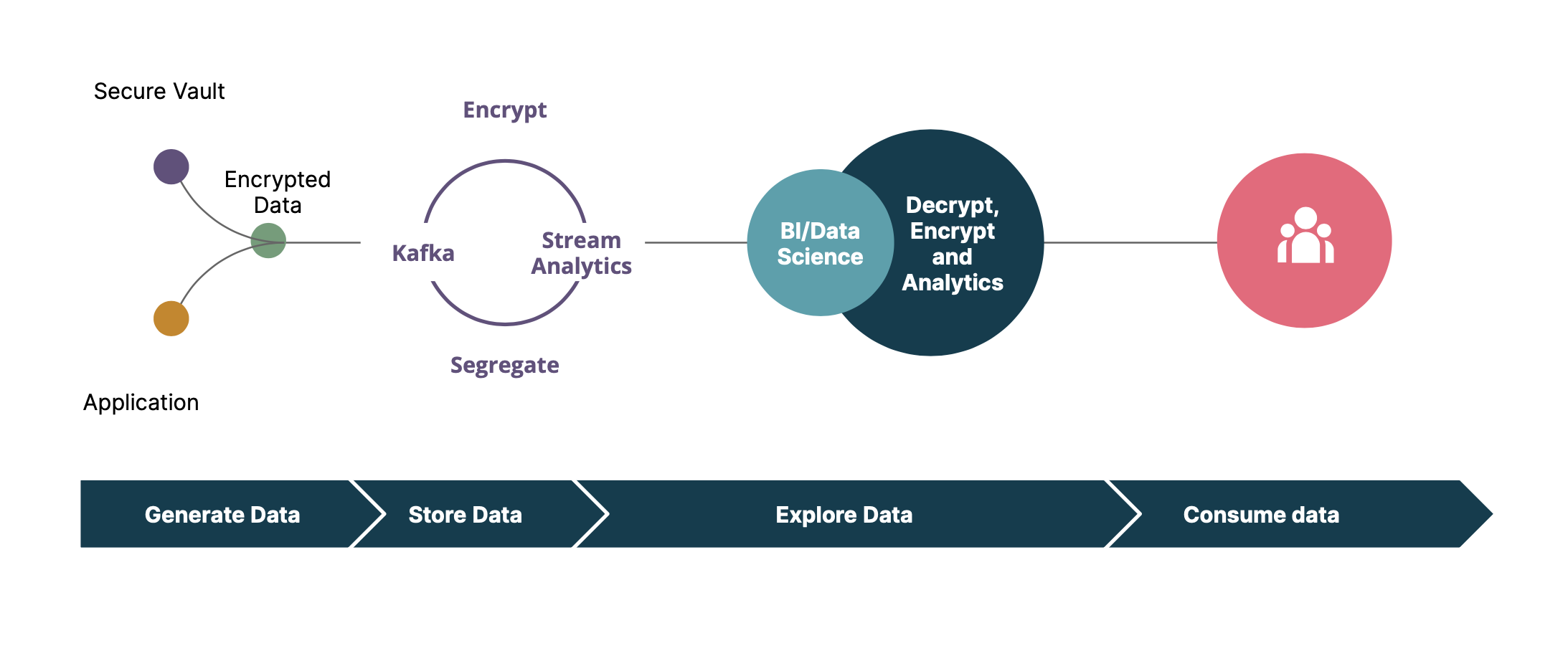

On the other hand, the desired state of an event-driven platform could be similar to the following:

Most importantly, encryption and decryption are implemented outside the data sources, using a secure vault that can encrypt and decrypt data, manage the keys and enforce role-based access control (RBAC). This ensures that every component involved performs encryption and decryption in the same way. Data can only be decrypted by roles that have access to the decryption key. Furthermore, segregating sensitive and non-sensitive data results in simpler, more straightforward access controls (ACLs).
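To make the vault idea concrete, here is a minimal sketch in Java, assuming an in-memory stand-in for what would in practice be an external vault service. The class name `SketchVault`, its methods and the role names are hypothetical and are not taken from any specific product. It uses AES-GCM from the JDK's `javax.crypto` package; the key stays inside the vault object, and only roles on the allow list get plaintext back.

```java
import javax.crypto.Cipher;
import javax.crypto.KeyGenerator;
import javax.crypto.SecretKey;
import javax.crypto.spec.GCMParameterSpec;
import java.nio.charset.StandardCharsets;
import java.security.GeneralSecurityException;
import java.security.SecureRandom;
import java.util.Arrays;
import java.util.Base64;
import java.util.Set;

/** In-memory stand-in for an external vault; all names here are hypothetical. */
final class SketchVault {
    private static final int IV_BYTES = 12;   // commonly recommended nonce size for GCM
    private static final int TAG_BITS = 128;  // authentication tag length

    private final SecretKey key;              // the key never leaves the vault
    private final Set<String> decryptRoles;   // roles allowed to decrypt
    private final SecureRandom random = new SecureRandom();

    SketchVault(Set<String> decryptRoles) {
        try {
            KeyGenerator generator = KeyGenerator.getInstance("AES");
            generator.init(256);
            this.key = generator.generateKey();
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException("AES key generation failed", e);
        }
        this.decryptRoles = Set.copyOf(decryptRoles);
    }

    /** Any component may encrypt; the result is Base64(iv || ciphertext || tag). */
    String encrypt(String plaintext) {
        try {
            byte[] iv = new byte[IV_BYTES];
            random.nextBytes(iv); // fresh nonce for every message
            Cipher cipher = Cipher.getInstance("AES/GCM/NoPadding");
            cipher.init(Cipher.ENCRYPT_MODE, key, new GCMParameterSpec(TAG_BITS, iv));
            byte[] sealed = cipher.doFinal(plaintext.getBytes(StandardCharsets.UTF_8));
            byte[] out = Arrays.copyOf(iv, iv.length + sealed.length);
            System.arraycopy(sealed, 0, out, iv.length, sealed.length);
            return Base64.getEncoder().encodeToString(out);
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException("encryption failed", e);
        }
    }

    /** Only roles that were granted decrypt access get plaintext back. */
    String decrypt(String role, String token) {
        if (!decryptRoles.contains(role)) {
            throw new SecurityException("role '" + role + "' may not decrypt");
        }
        try {
            byte[] in = Base64.getDecoder().decode(token);
            Cipher cipher = Cipher.getInstance("AES/GCM/NoPadding");
            cipher.init(Cipher.DECRYPT_MODE, key,
                    new GCMParameterSpec(TAG_BITS, in, 0, IV_BYTES));
            byte[] plain = cipher.doFinal(in, IV_BYTES, in.length - IV_BYTES);
            return new String(plain, StandardCharsets.UTF_8);
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException("decryption failed", e);
        }
    }
}
```

In this sketch, a caller holding the hypothetical `fraud-analyst` role could decrypt a token, while a DBA reading the same stored value would only ever see ciphertext. A real deployment would also need key rotation, auditing and authenticated callers, none of which are shown here.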

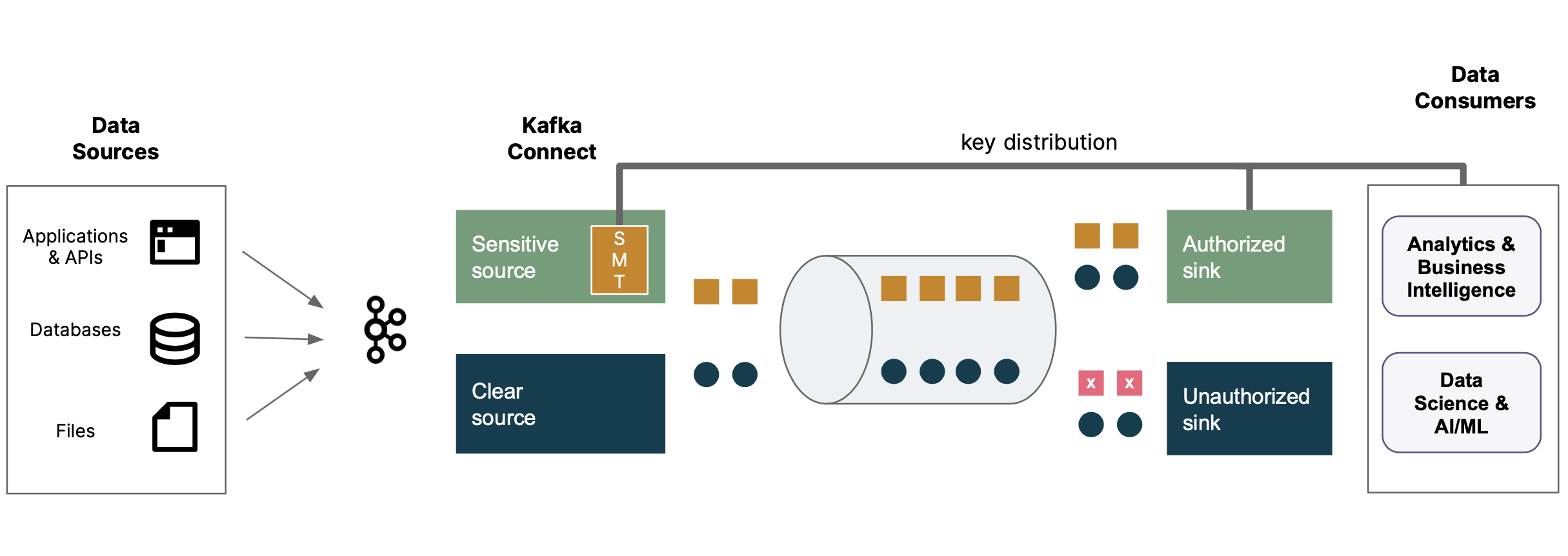

With this desired state in mind, it is common to see “intermediate” phases where data is pushed to the streaming platform from a data store. These approaches can greatly improve security in the short term without major architectural changes.

Here is one example of an intermediate approach that could overcome these challenges. Encryption and decryption are implemented in a common layer of the platform, which keeps the solution flexible. Sensitive data is encrypted using a custom Kafka Connect Single Message Transform (SMT). Sensitive and non-sensitive data are separated using the ReplaceField SMT, which simplifies access control.
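The field-level logic behind these two transforms can be sketched in plain Java without any Kafka dependency. This is an illustration under stated assumptions, not the actual SMT: the class `SensitiveFieldSketch`, its method names and the field names are hypothetical, the record value is modelled as a simple `Map`, and the encryptor is injected so that it could delegate to a shared vault. In a real custom SMT, logic like `encryptFields` would sit inside the `apply` method of a class implementing Kafka Connect's `Transformation` interface.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.Set;
import java.util.function.UnaryOperator;

/** Field-level logic only; class, method and field names are hypothetical. */
final class SensitiveFieldSketch {

    /** Roughly what a custom encrypting SMT would do to a record value. */
    static Map<String, Object> encryptFields(Map<String, Object> value,
                                             Set<String> sensitive,
                                             UnaryOperator<String> encryptor) {
        Map<String, Object> out = new LinkedHashMap<>(value); // leave the input untouched
        for (String field : sensitive) {
            Object raw = out.get(field);
            if (raw != null) {
                // Replace the plaintext with whatever the encryptor returns.
                out.put(field, encryptor.apply(raw.toString()));
            }
        }
        return out;
    }

    /** Mirrors the effect of ReplaceField when it excludes the sensitive fields. */
    static Map<String, Object> dropFields(Map<String, Object> value,
                                          Set<String> sensitive) {
        Map<String, Object> out = new LinkedHashMap<>(value);
        out.keySet().removeAll(sensitive); // non-sensitive view of the record
        return out;
    }
}
```

With a record containing `orderId` and `cardNumber`, `dropFields` would yield a copy holding only `orderId` for a broadly readable topic, while `encryptFields` would yield a copy whose `cardNumber` is ciphertext for a restricted topic. For the built-in transform, the class is `org.apache.kafka.connect.transforms.ReplaceField$Value`, and recent Kafka versions name the relevant property `exclude`; check the documentation for the version in use.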

Even though custom SMTs are flexible, they should be replaced with application-based encryption once the migration to the desired state is complete.

If you’re interested in discussing how we could help you with these challenges, or want to chat about how you’re tackling them, we’d love to hear from you!

Disclaimer: The statements and opinions expressed in this article are those of the author(s) and do not necessarily reflect the positions of Thoughtworks.