For anyone deeply involved in technology and excited about its possibilities, it can be easy to forget that technology can have unintended consequences even when it is built with the best intentions. Technologists themselves sometimes struggle to predict these outcomes, and, for the vast majority of people less familiar with the dynamics involved, technology remains a tightly sealed ‘black box.’ Most people have no say in how technology is created, no means to shape its development and little visibility into its impacts – even when they’re among those most affected.

Responsible tech as an approach recognizes this fundamental imbalance, and strives to make the design and development process more transparent and inclusive. Our determination to foster a more diverse workforce internally and to build bridges with social movements externally is rooted, in part, in trying to open the black box, and to integrate the points of view of those typically excluded from the development process. This is the only way both to minimize harm and to ensure these groups can leverage technology to meet their needs.

Engagements with excluded voices can shed new light on the implications of technology for culture and society, as well as business. We’ve witnessed this in ongoing initiatives like Migracode and Thoughtworks Arts and in an evolving collaboration with the Mozilla Foundation, with the latter creating an ecosystem and avenues for learning about how marginalized populations view and interact with technology. Our intent is not simply to change our own practices but to prompt an industry-wide reckoning about the power structures implicit in technology, and where tech takes us – for better and for worse. We put our purpose into action by encouraging our clients to consider the ethical dimensions of their tech-driven transformations, and have, with like-minded technologists, developed the Responsible tech playbook to share and instill best practices.

We’re also trying to tackle one of the most pernicious legacies of technology, the rapid proliferation of dis/misinformation, by teaming up with organizations confronting it head on. Our partners include Full Fact, a fact-checking charity that organizes a network of volunteers to fight the spread of bad information online, and Tattle. We supported Tattle in improving the efficiency of a searchable archive of fact-checked content for the use of researchers and media organizations. Our approach to Responsible tech will continue to evolve, but the goals will remain the same: to create a more equitable tech future and explore how Responsible tech principles can be extended to create more responsible societies.

"We’re aiming to integrate the Responsible tech perspective not just into technology development standards, but business strategy and culture, because all businesses are becoming tech at core. Our increasingly digital world demands that businesses themselves become Responsible tech practitioners."

Dr Rebecca Parsons

Chief Technology Officer, Thoughtworks

Questioning and addressing tech power dynamics

The creation of technology requires specialized knowledge and resources. This means that technology is often built by those with power, influence and means – and designed to suit that same group. At the same time, tech has clear potential to connect and empower traditionally marginalized or excluded groups, and to help people identify and better understand entrenched power structures.

We’re deeply involved in several initiatives at the intersection of innovation, power and ethics aimed at ensuring tech contributes to equality rather than concentrating power in the hands of the few. One way to achieve this is to open the development and innovation process to different viewpoints and areas of expertise. We’re pursuing this through Thoughtworks Arts, a decade-long initiative that incubates collaborations between our employees and artists to investigate the impacts of emerging technologies on society.

Over the past year these collaborations produced a series of interactive works underlining the urgency of the global climate emergency; exploring how Artificial Intelligence (AI) systems shape and sometimes undermine efforts to understand identity and language; and encouraging people to rethink their relationships with privacy and surveillance. These collaborations will continue to develop, surprise and enhance understanding of the ways in which technology will shape society and culture in future.

(In Thoughtworks Arts) "Developers are learning about creative expression but they’re also experiencing the ethical implications of these technologies, which they don’t necessarily learn through their work."

Nouf Aljowaysir

Thoughtworks Arts Resident

Nouf Aljowaysir’s project سلف (Salaf, ancestor) explores the transmission of colonial worldviews through human generations and into the contemporary datasets powering AI. The development team illustrated how Computer Vision systems mislabel historic portraits photographed in Middle Eastern deserts as likely to contain militaristic content. Aljowaysir’s artwork responds by using an image segmentation AI to erase the stereotypically “oriental” figures in historic portraits, leaving only the surrounding context, signifying the eradication of her ancestors’ collective memory.

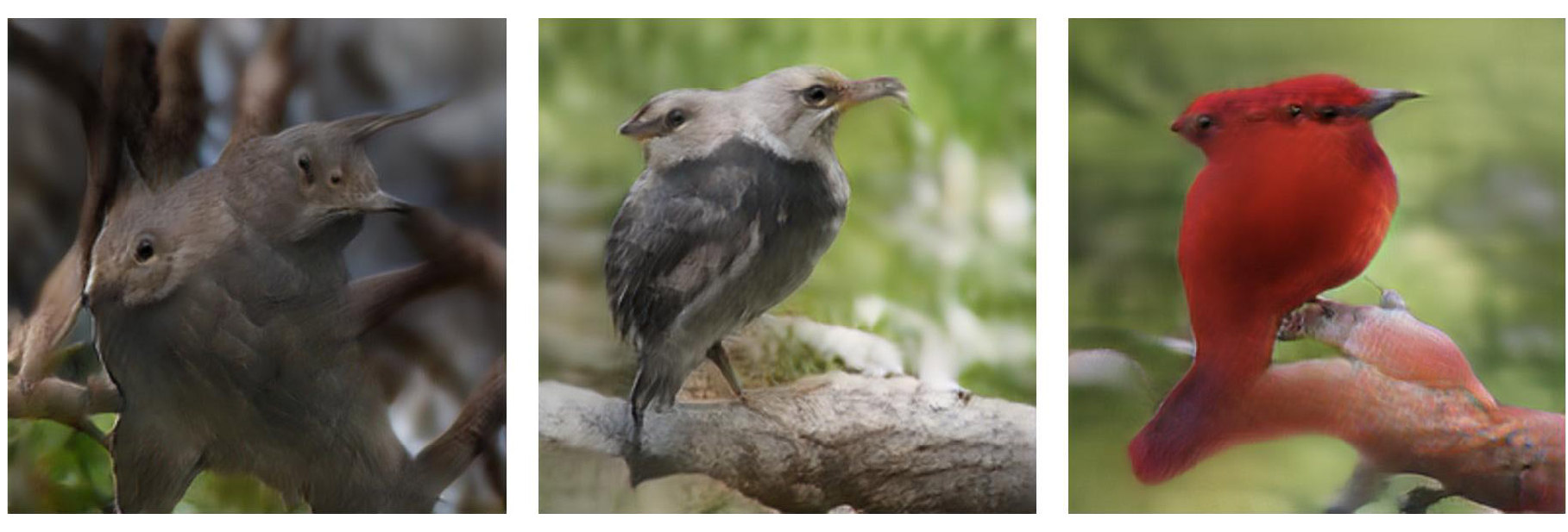

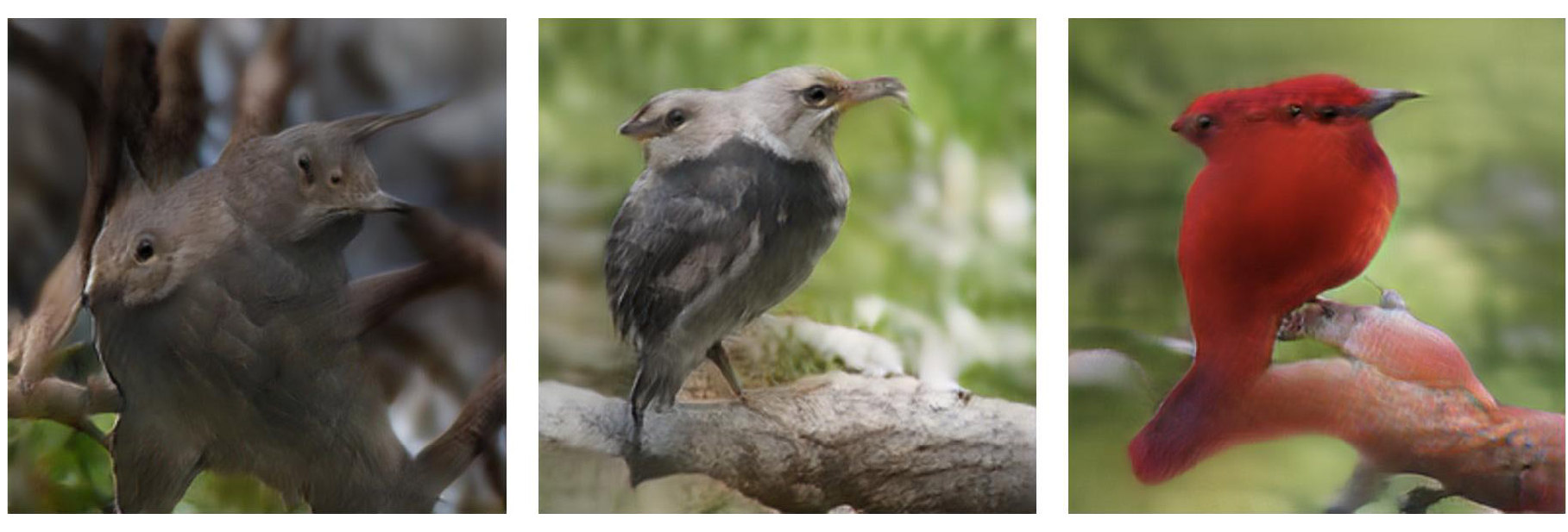

James Coupe’s work examines the role archives play in indexing colonial worldviews. The project ‘Birds of the British Empire’ focuses on an aviary in the UK which historically showcased birds from British colonies, grouping and describing them based on their perceived geopolitical status. The team worked with James to create a multi-layered AI system which examines historical literature to extract descriptions of birds, from which a new synthetic aviary of imaginary birds is generated. The project highlights the role colonialism plays in how representational technologies generate meaning and explores the potential for machine learning systems to dissolve seemingly fixed cultural hegemonies into new configurations and new possibilities.

The vast majority of the technology used in the Global South is planned and designed based on the context, needs and norms of end-users in the Global North, where it’s created. This can lead to misalignment and negative impacts that we’re trying to address with organizations such as Cuadrante Sur. Together we are working on a collective digital rights initiative, the Conectadxs project. Conectadxs brings informal and platform workers into the development process for applications that these communities will put to use. The experience has underlined how co-design and co-creation are critical to ensuring innovation serves a broader segment of society globally.

Data can be one of the most precise and insightful means to highlight how power is distributed and applied. In Australia we’re working with researchers and activists to map and visualize the complex relationships and interconnections that underpin industries like energy, providing more context on how they function and the parties ultimately in control.

Similarly, with a Swiss-based organization, Women at the Table, we’ve built a tool, underpinned by an AI model, that analyzes data to show how international events perform in real terms against their surface-level diversity numbers. The model allows organizers to see at a glance who is speaking, influencing and leading on which topics at key international forums. By deriving meaning from complex datasets, these projects provide campaigners, business leaders and other concerned parties with intelligence that can inform future action, improve the inclusion of women and other underrepresented groups at the table and go beyond notions of representation to participation and influence.

Making Responsible technology tangible

To operationalize a Responsible tech culture, an organization should have a set of defined principles and governance models. To this end we published the Responsible tech playbook in August 2021. This freely available resource collects and summarizes some of the leading tools, methods and frameworks, successfully trialed by us and others, which enable organizations to comprehensively assess the ethical values of technology projects and to predict and mitigate their risks. An organizational culture built on Responsible tech can ensure that unintended consequences of digital products are minimized, and that informed strategic decisions can be made concerning adversarial components.

Rather than rigid rules or codes of practice, the playbook’s emphasis is on interactive exercises and workshops that stimulate open conversations, and draw in diverse perspectives to flag issues like bias and negative consequences early in the development process. Together with our industry peers, we intend to refine and expand the playbook through successive editions, so it serves as a comprehensive reference for enterprises seeking to ensure their digital endeavors proceed along ethical lines.

While we are deeply committed to these principles, we also acknowledge the complexity of the questions they raise. We understand that every organization will need to define its own journey and way of approaching the ethical, social and environmental implications of the innovations it adopts. This is why, alongside taking further steps to embed Responsible tech principles and practices in our own process of building technological solutions, we are also creating specific tools, frameworks and offerings to help our clients navigate this complexity.

While we’re not expecting to move the needle overnight, we are moving forward via a combination of reflection and targeted advocacy to develop what we’ve come to see as sensible defaults — standards around technology that we hope will eventually be integrated as best practice.

These defaults include accessibility for people with disabilities and neurodiversities; data privacy and security; and environmental impact. By helping our clients understand, visualize and factor these considerations into their technology plans and strategies, we’ve been able to build the business case for each, and to articulate the value of a more Responsible technology approach. We hope to take this work further by both solidifying these standards and creating tools and techniques that make them easier to adopt.
