The Case for Continuous Delivery

By now, many of us are aware of the wide adoption of continuous delivery (CD) within companies that treat software development as a strategic capability that provides competitive advantage. Amazon is on record as making changes to production every 11.6 seconds on average in May of 2011. Facebook releases to production twice a day. Many Google services see releases multiple times a week, and almost everything in Google is developed on mainline. Still, many managers and executives remain unconvinced of the benefits, and would like to know more about the economic drivers behind CD.

First, let’s define continuous delivery. Martin Fowler provides a comprehensive definition on his website, but here’s my one-sentence version: Continuous delivery is a set of principles and practices to reduce the cost, time, and risk of delivering incremental changes to users.

Continuous Delivery reduces waste and makes releases boring

One of the main uses of continuous delivery is to ensure we are building functionality that really delivers the expected customer value. Take a look at Microsoft Partner Architect Dr Ronny Kohavi's presentation on the pioneering work he did on online controlled experiments (A/B tests) at Amazon. This approach created hundreds of millions of dollars of value for Amazon, and saved similar amounts through reducing the opportunity cost of building features that were not in fact valuable (even if delivered on time, on budget, and at high quality). This approach depends on the ability to perform extremely frequent releases. The same practices are being used at many Microsoft properties, including Bing, as well as pioneering companies such as Etsy, Netflix, and many others.

Second, continuous delivery reduces the risk of release and leads to more reliable and resilient systems. Last year, Puppet Labs, in collaboration with Gene Kim and me, did some research on the effect of using DevOps practices (in particular automated deployment processes and the use of version control for infrastructure management). When we analyzed the data we found that high-performing organizations ship code 30 times faster (and complete these deployments 8,000 times faster), have 50% fewer failed deployments, and restore service 12 times faster than their peers. The longer they had been employing these practices, the better they performed. John Allspaw, VP of ops at Etsy, has a great presentation which describes how deploying more frequently improves the stability of web services.

Implementing CD has second-order effects that reduce the costs of software development

For me, perhaps the most interesting effect of continuous delivery is the cost reduction it brings about by cutting the time spent on non-value-add activities such as integration and deployment. In continuous delivery, we perform the activities that usually follow “dev complete”, such as integration, testing and deployment (at least to test environments), continuously throughout the development process.

By doing this, we completely remove the integration and testing phases that typically follow development. This is achieved through automation of the build, deploy, test and release process, which reduces the cost of performing these activities, allowing us to perform them on demand rather than on a scheduled interval. This, in turn, enables effective collaboration between developers, testers, and systems administrators.
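To make the idea of an automated, on-demand pipeline concrete, here is a minimal sketch. It assumes a pipeline is just an ordered list of stages that stops at the first failure; the stage names and the `run_pipeline` helper are hypothetical, and real stages would call build tools, deployment scripts and test runners rather than the placeholder lambdas used here.

```python
from typing import Callable, List, Tuple

# A stage is a name plus a callable that returns True on success.
Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage]) -> List[str]:
    """Run stages in order, stopping at the first failure.

    Returns the names of the stages that passed.
    """
    passed = []
    for name, step in stages:
        if not step():
            print(f"{name}: FAILED, stopping pipeline")
            break
        print(f"{name}: ok")
        passed.append(name)
    return passed


# Hypothetical stages mirroring "build, deploy, test and release".
# Each lambda stands in for a real automated step.
stages = [
    ("build", lambda: True),
    ("deploy-to-test", lambda: True),
    ("acceptance-test", lambda: True),
    ("release", lambda: True),
]

passed = run_pipeline(stages)
```

Because every stage is automated, running the whole sequence costs little, which is what makes it practical to run on every change rather than on a schedule.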

This change in process has extremely powerful second-order effects on the economics of the software development process.

The HP LaserJet Firmware team re-architected their software so they could apply these practices (even though they were not releasing the firmware any more frequently). In the excellent book they published about their journey (from 2008 to 2011), they record the outcomes they achieved:

- Overall development costs reduced by ~40%

- Programs under development increased by ~140%

- Development costs per program reduced by 78%

- Resources driving innovation increased by 5x
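The first two figures roughly imply the third. The sketch below is a back-of-the-envelope check, not a calculation taken from the book: it assumes that cost per program is simply total development cost divided by the number of programs, and it treats the approximate "~40%" and "~140%" figures as exact.

```python
# Approximate relative changes reported for the HP LaserJet firmware team.
cost_change = -0.40     # overall development costs reduced by ~40%
program_change = 1.40   # programs under development increased by ~140%


def cost_per_program_change(cost_change: float, program_change: float) -> float:
    """Relative change in cost per program.

    Assumes cost per program = total cost / number of programs.
    """
    cost_ratio = 1.0 + cost_change        # 0.6x the original cost
    program_ratio = 1.0 + program_change  # 2.4x the original program count
    return cost_ratio / program_ratio - 1.0


change = cost_per_program_change(cost_change, program_change)
print(f"Cost per program changes by {change:.0%}")
```

Under these assumptions the reduction comes out at about 75%, close to the 78% reported. The gap is plausibly due to rounding in the approximate figures, though the source does not say how the 78% was derived.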

Using continuous delivery practices, the LaserJet team (400 people distributed across 3 continents) is able to integrate 100-150 changes (about 75,000-100,000 lines of code) into trunk on their codebase of more than 10 million lines every day. These changes are batched up into 10-14 good builds of the firmware, verified using extensive automated testing that runs continuously on both simulators and emulators. Using these practices they eliminated their integration and release testing phase:

> We know our quality within 24 hours of any fix going into the system... and we can test broadly even for small last-minute fixes to ensure a bug fix doesn’t cause unexpected failures. Or we can afford to bring in new features well after we declare ‘functionality complete’ - or in extreme cases, even after we declare a release candidate. [1]

Of course, this required significant investment. The team built a software simulator for their complex custom ASICs so they could run automated tests in a virtual environment, created 30,000 hours’ worth of automated acceptance tests, and created and evolved a sophisticated deployment pipeline. Check out Gary Gruver’s talk from FlowCon last year on implementing CD at HP, which discusses how they did it.

The Microsoft team building Visual Studio is also employing continuous delivery practices to build their software (personal communication) for the same reasons, plus the ability to respond more rapidly to customer needs. We use these practices for the user-installed products we build within Thoughtworks with similar results.

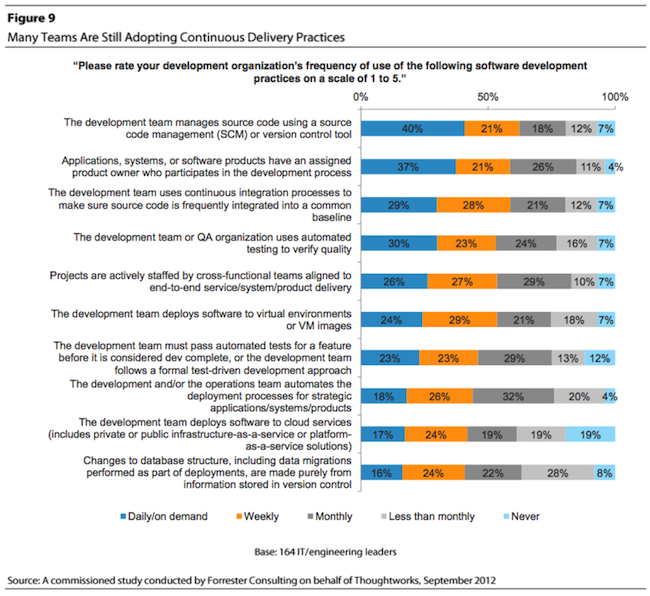

Finally, some numbers on adoption. Last year we collaborated with Forrester on researching continuous delivery in enterprises. It’s a great study that reveals the maturity of enterprises implementing CD and the drivers behind it, and provides a maturity model for people wanting to implement it. But the research reveals that, as an industry, we have some way to go in adopting the practices.

For more on implementing CD at scale in an enterprise context, check out my forthcoming book, Lean Enterprise.

[1] Gruver, G., A Practical Approach to Large-Scale Agile Development, p. 60.
