Since the 1950s, a bottleneck in software development has been the speed of humans typing code into a terminal. Today, that constraint is receding. With the emergence of autonomous AI agents that can generate, test and refactor code, it seems we’re about to overcome this bottleneck. However, this also poses a challenge: code is now produced at a velocity that outstrips our ability to perform traditional line-by-line manual code reviews, which makes review the new bottleneck. Humans remain necessary in shaping software development because a human is accountable for the output; rightfully, we still want to be in charge.

In other words, we need humans with skin in the game to be part of the process and ensure that the intent given to agents is actually fulfilled. But if we insist on being the "human-in-the-loop" for every single code change, we will become more than just a bottleneck; we’ll become a fracture point. This means we need to choose between slowing the agents and "rubber-stamping" code we don't truly understand.

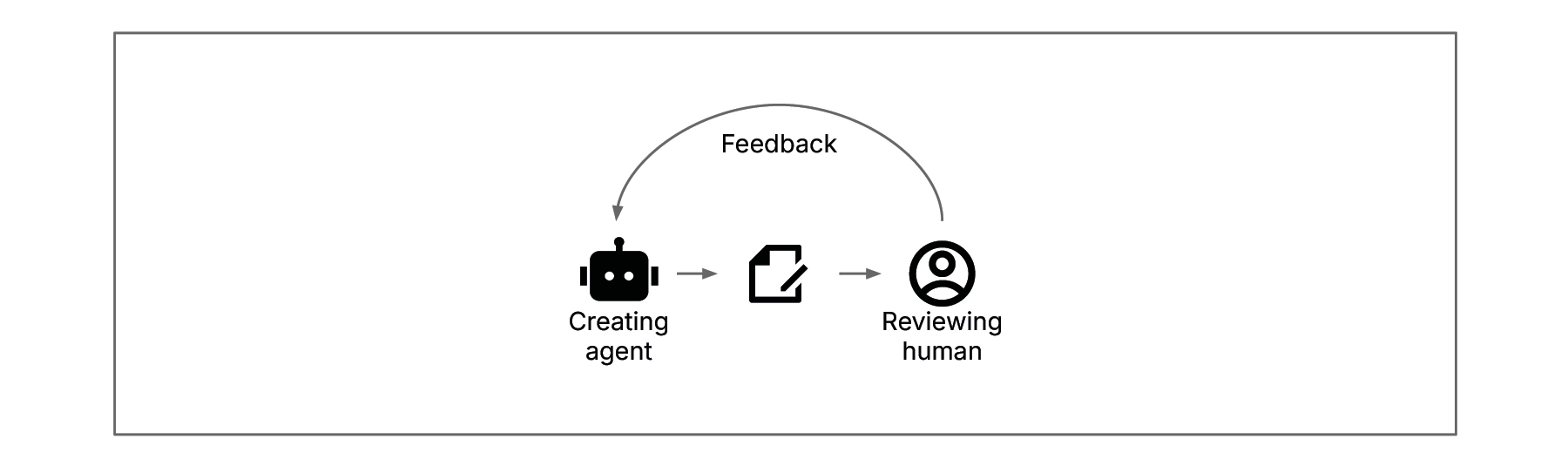

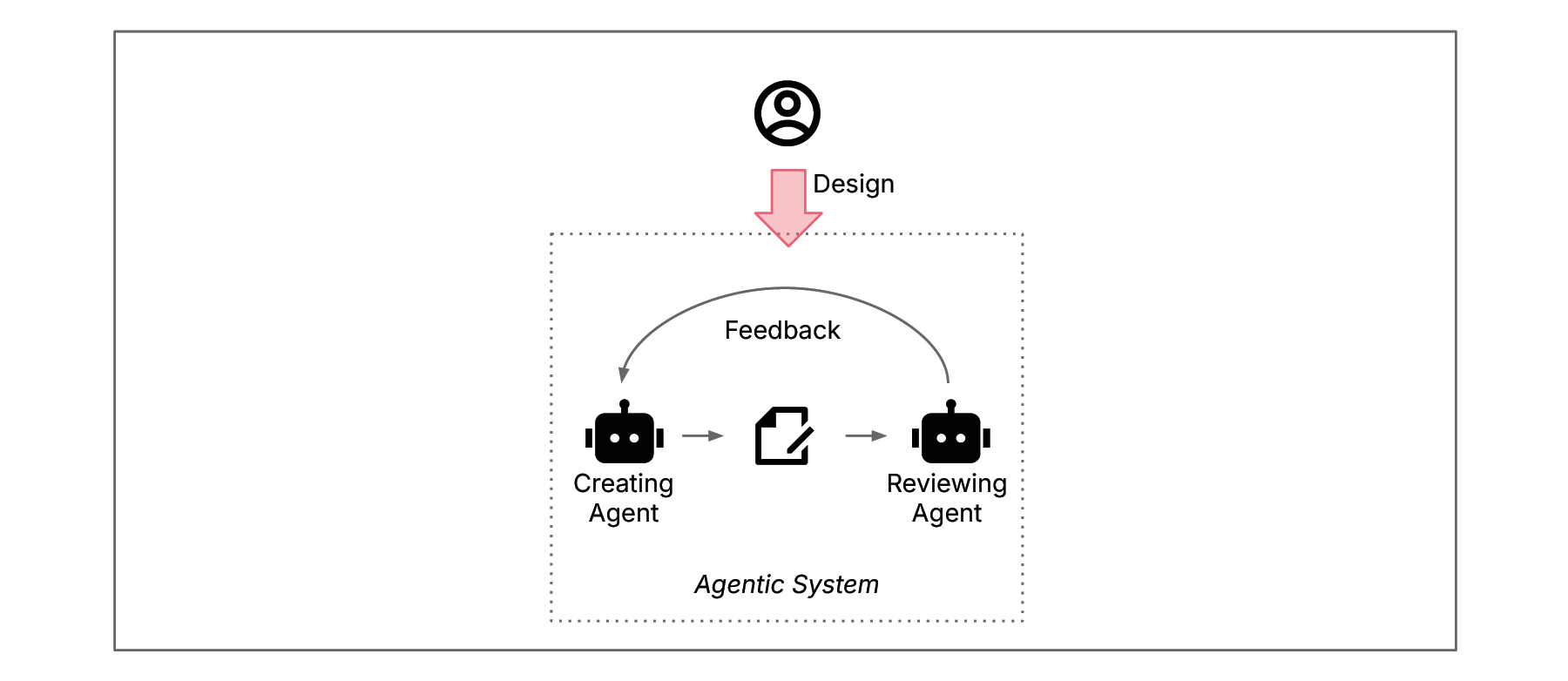

Human-in-the-loop

Lead an agentic SDLC like an organization

Understanding that LLM-based agents are non-deterministic is the key to overcoming this fracture point. This non-determinism implies that we cannot blindly trust AI to get it right, but it also mirrors a fundamental truth about our own workforce: humans are fallible as well. Yet organizations, which are essentially assemblies of fallible humans interacting in complex ways, frequently achieve high performance and consistent results.

How do they do it? Through structures, processes, capabilities and behaviors that leadership establishes and that management implements and guides.

Managers don't review everything their people do, but they are in charge of the system. They design the organization, lead by objectives, intervene when exceptions occur and establish boundaries that allow for productive emergent structures and self-organization. Leading an agentic SDLC presents similar challenges. If we want to step up and lead agents effectively, we must take on a new steering role in the SDLC and stop trying to review every line of code. I believe that successful steering can draw on and adopt the practices of the management craft: orchestrating and steering agents has a lot in common with what a manager does when leading an organization.

Example: A developer acts like a Head of AI Workforce. They define the measures of success, e.g. reduce API latency by 20%, and establish a team structure and process, e.g. one agent writes code and a QA agent independently verifies it. The developer intervenes only when a dashboard flags a violation of the objectives or the agents have a dispute.

Cybernetic science as the bridge

For many engineers, viewing management as a "craft", or even a science, feels alienating, if not entirely suspect. Applying management practices to software engineering may not be obvious. However, cybernetics can provide the necessary bridge. The concept was introduced in the 1940s by Norbert Wiener and taken up by a diverse group of thinkers ranging from mathematicians to neurobiologists. Cybernetics is the study of communication and control in complex systems; it treats a biological organism, a social organization and a machine as governed by the same universal laws of feedback and regulation.

Stafford Beer developed the viable system model (VSM) in the 1970s, connecting cybernetics to the discipline of management. The VSM is a model for wiring an organization so that it remains viable in a complex world. In the 1980s, Fredmund Malik began integrating the VSM into his work Strategy for Managing Complex Systems, which helped form the backbone of the cybernetics-oriented St. Gallen School of Management. This body of knowledge offers a wealth of scientific thinking that can help engineers treat a system of AI agents not as a black box, but as a functioning, steerable cybernetic system. And since cybernetics applies not only to organizations but also to technical systems, it provides the bridge we need to overcome the friction point of “human-in-the-loop” in the agentic SDLC.

Example: Consider a self-healing infrastructure with agents. An engineer designs the mechanism where a monitoring agent detects a memory leak and prompts a coding agent to patch it. The engineer doesn't view this as isolated scripts, but as a cybernetic loop where communication between the agents is regulated by feedback, ensuring the infrastructure remains viable and stable under stress.
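The loop in this example can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `leak_suspected`, `control_step`, the 50 MB growth threshold and the limit of two patch attempts are all hypothetical, and `request_patch` and `escalate` stand in for whatever channel reaches the coding agent and the engineer.

```python
from typing import Callable, Sequence


def leak_suspected(samples_mb: Sequence[float], min_growth_mb: float = 50.0) -> bool:
    """Sensor: flag a leak when memory rose on every sample and grew enough overall."""
    if len(samples_mb) < 3:
        return False  # too few readings to call it a trend
    always_rising = all(later > earlier for earlier, later in zip(samples_mb, samples_mb[1:]))
    return always_rising and (samples_mb[-1] - samples_mb[0]) >= min_growth_mb


def control_step(
    samples_mb: Sequence[float],
    request_patch: Callable[[str], None],
    escalate: Callable[[str], None],
    attempts: int,
    max_attempts: int = 2,
) -> int:
    """Run one pass of the loop and return the updated count of patch attempts."""
    if not leak_suspected(samples_mb):
        return 0  # the system is stable again, so the counter resets
    if attempts >= max_attempts:
        # The inner loop has failed to restore stability: hand over to the human.
        escalate(f"Leak persists after {attempts} patch attempts")
        return attempts
    growth = samples_mb[-1] - samples_mb[0]
    request_patch(f"Memory grew by {growth:.0f} MB over {len(samples_mb)} samples")
    return attempts + 1
```

The point of the sketch is the shape rather than the thresholds: the agents close the loop among themselves, and the engineer only hears about it when the loop fails to stabilize the system.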

Leaping to the meta level with steering

In order to understand how a human can effectively lead agents, we must look to one of the foundations of cybernetics, Ross Ashby’s law of requisite variety, often summarized as "Only variety can absorb variety." Variety is a measure of complexity: the number of distinct states a system can take.

In the context of the SDLC, the variety of an agentic system is the overwhelming volume of code changes, design decisions and bug fixes generated at machine speed. To control this, the human must have requisite variety (the same or more), which usually exceeds their cognitive capacity. If a human tries to match this variety at the operative code level by being “in-the-loop” for every change, they will inevitably fail or slow things down, reducing the variety and, therefore, the speed and capabilities of the agentic system.
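For readers who prefer notation, Ashby's law is often written in a logarithmic form, with variety $V$ measured in bits. The mapping of the symbols onto the agentic SDLC is an interpretation of the paragraph above, not part of Ashby's own formulation:

$$
V_O \;\geq\; V_D - V_R
$$

Here $V_D$ is the variety of disturbances (what the agents can produce), $V_R$ is the variety of the regulator (what the human can distinguish and respond to) and $V_O$ is the variety left in the outcomes, i.e. the part that escapes control. Keeping $V_O$ small requires either a larger $V_R$ or a smaller $V_D$ actually reaching the regulator.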

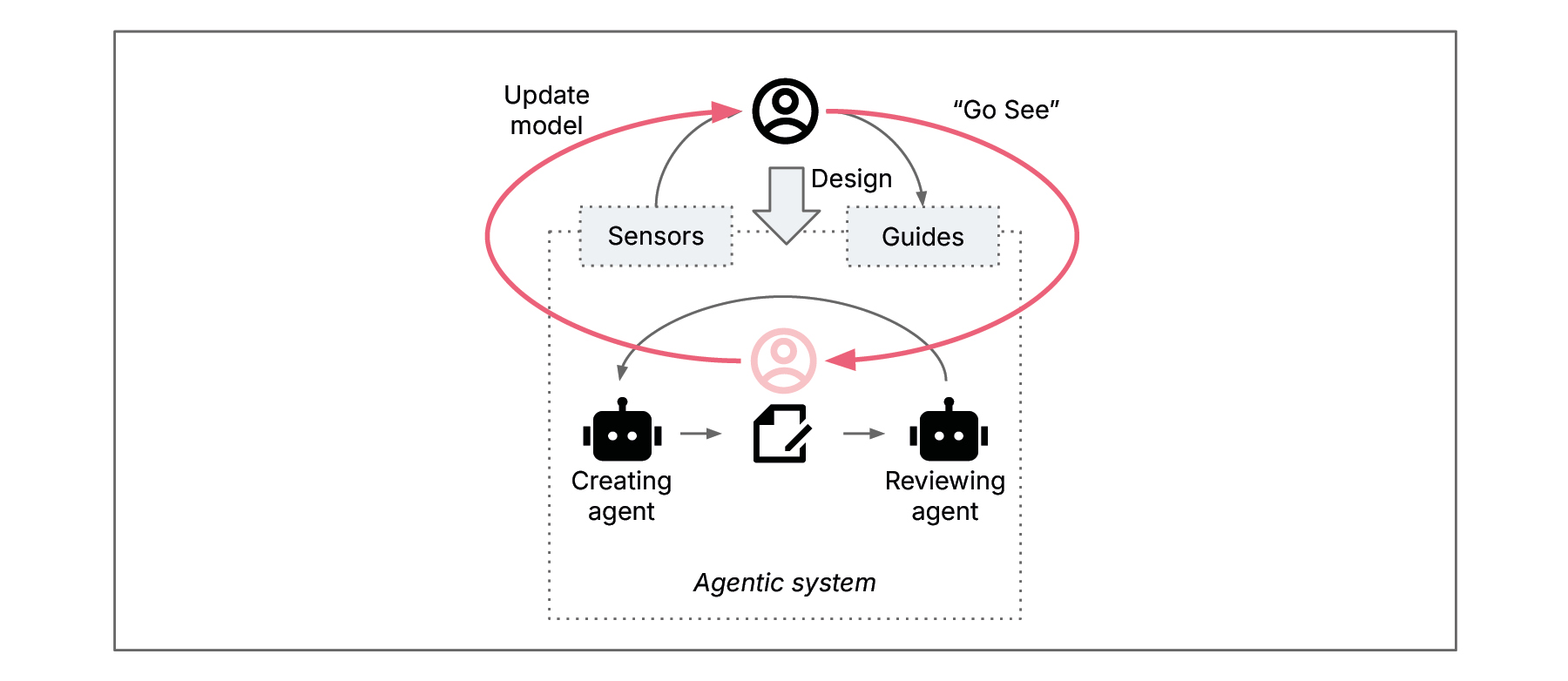

Humans must therefore step out of the problem-solution level and move to a higher level of abstraction: the meta level. Fredmund Malik refers to this as meta-systemic steering. That’s what it means to be “on-the-loop”. This is done by shaping the agentic system: designing and configuring it to achieve the desired objectives and adding proper mechanisms to steer the agents towards them. The following diagram shows how the human steps out of the system.

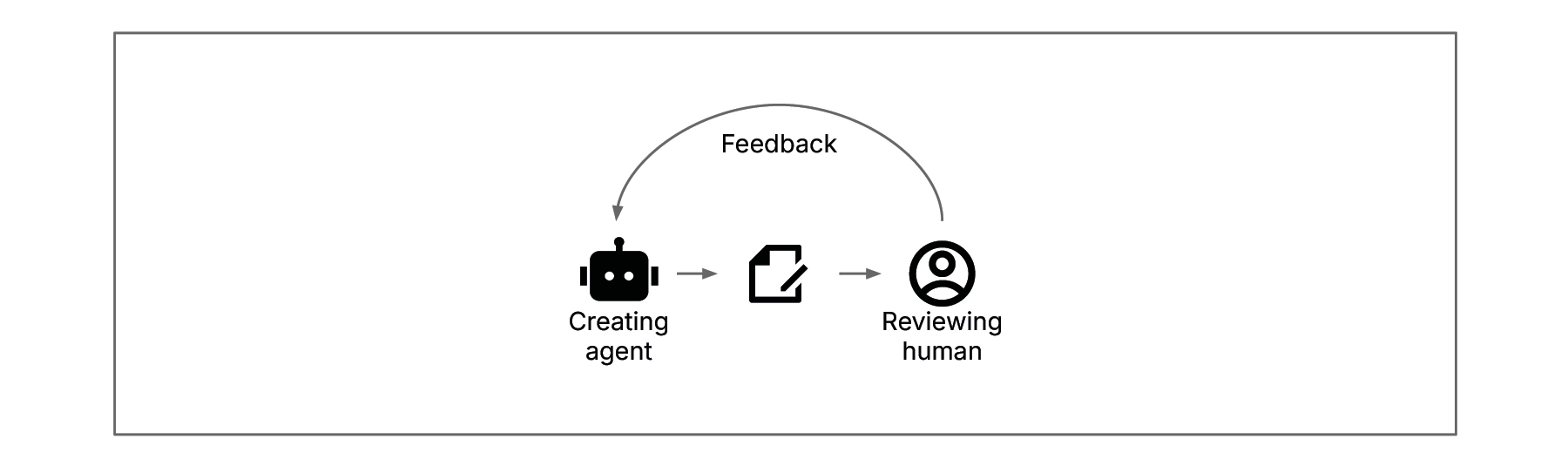

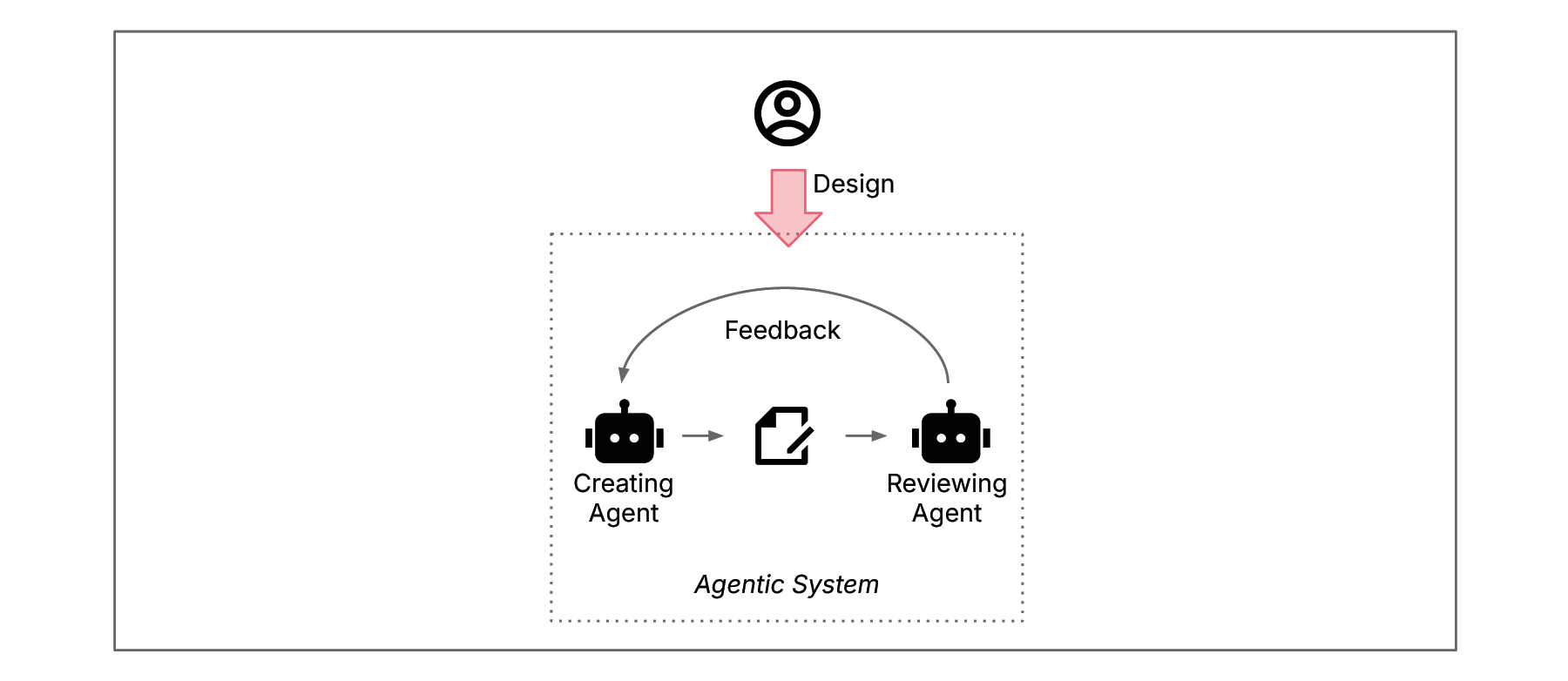

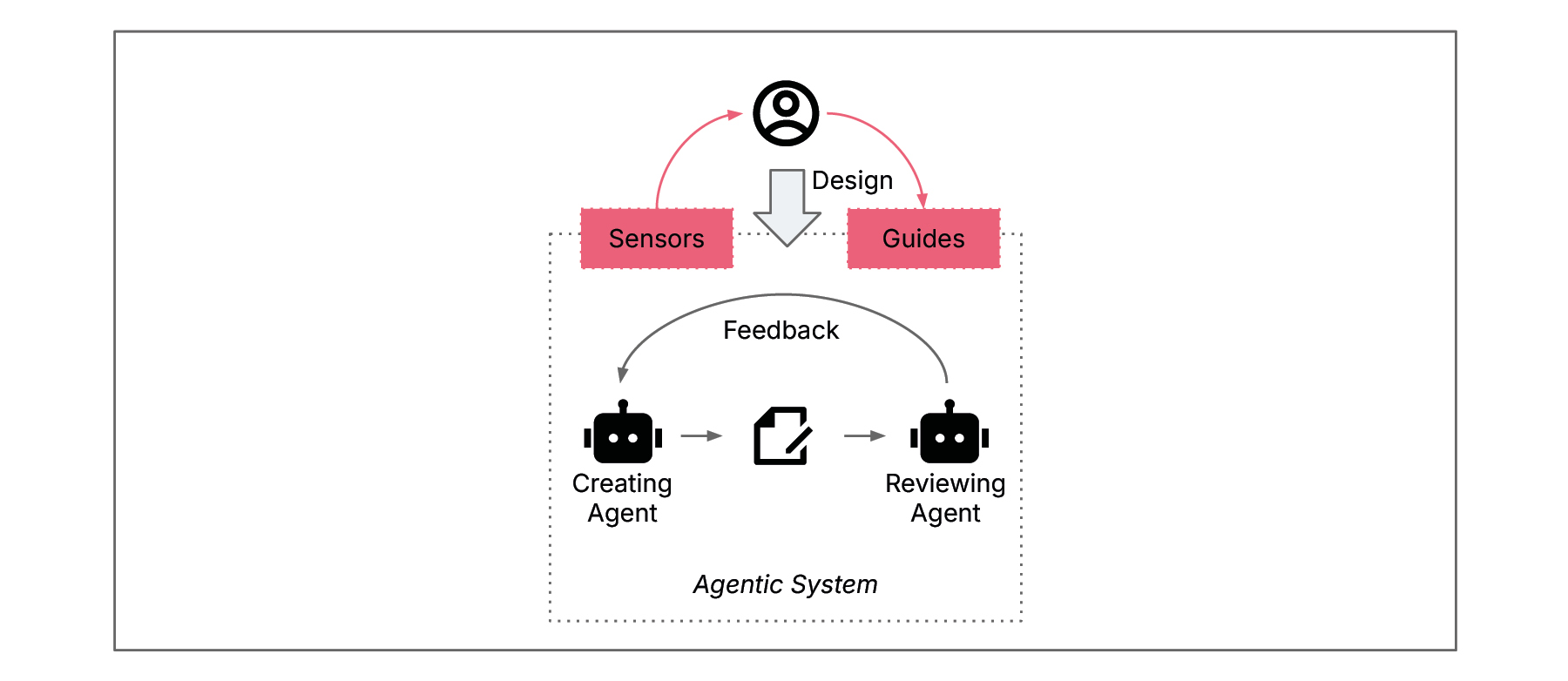

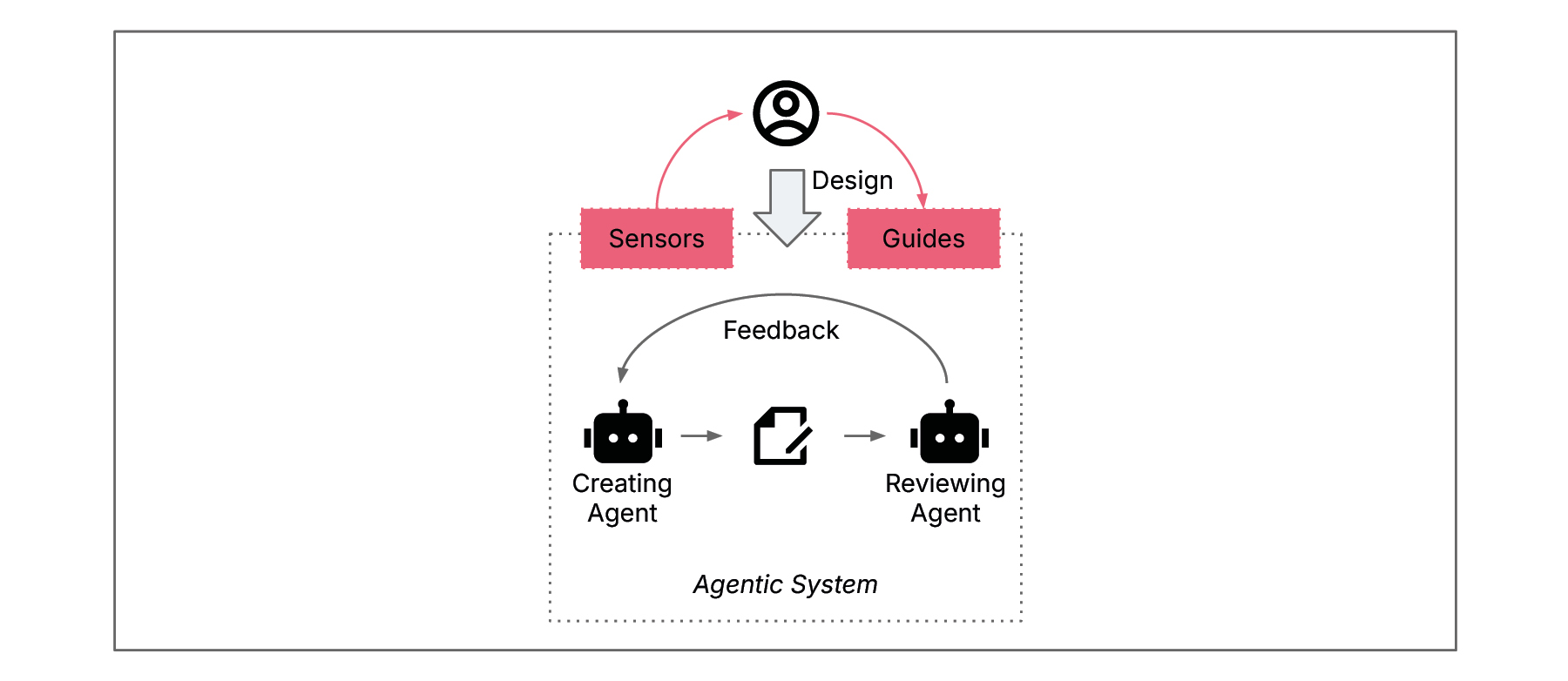

The above-mentioned steering mechanisms bring the variety of the agentic system and the capabilities (variety) of the humans into a state of homeostasis: a dynamic balance where both sides remain stable and aligned. To step out of the problem-solution level and reach this dynamic balance of variety, we must have the right sensors and guides. Stepping out of the problem-solution level and steering on the meta level is what we can call “human-on-the-loop”, as shown in the next diagram.

Human-on-the-loop

This completes our “human-on-the-loop”: The agentic system runs its own inner feedback loop and the human outside steers on a systemic level. Having the right sensors and guides that support the steering of agents is critical.

To design them we should take inspiration from two cybernetic mechanisms:

Attenuation of the variety coming from the agentic system, through filtering or aggregation, so that it does not overwhelm the human side. For example:

Aggregate outputs into condensed standardized reports or dashboards (e.g. code quality and agent success metrics).

Escalate exceptions only, based on built-in thresholds.

Foster self-management within the agentic system (just as an agile team can solve more complex problems without management involvement).

Example: Instead of reading 200 daily logs from a QA agent, a developer configures a dashboard that only alerts them if the success metric drops below 98%. This filters out noise (variety) to make it manageable for the limits of human cognition.
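A minimal sketch of such an attenuator, assuming a hypothetical `QaRun` record and an `attenuate` function (neither belongs to any real agent framework), might look like this. Many run records go in; at most one message comes out.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class QaRun:
    """One QA-agent run. The fields are hypothetical, not a real agent API."""
    run_id: str
    passed: bool


def attenuate(runs: Sequence[QaRun], threshold: float = 0.98) -> Optional[str]:
    """Collapse many run records into at most one alert for the human."""
    if not runs:
        return None  # nothing ran, so there is nothing to report
    rate = sum(run.passed for run in runs) / len(runs)
    if rate >= threshold:
        return None  # within tolerance: the human sees nothing
    failed = [run.run_id for run in runs if not run.passed]
    return f"QA success rate {rate:.1%} is below {threshold:.0%}. Failed runs: {', '.join(failed)}"
```

With 200 runs and three failures the function stays silent; with five failures it returns a single line naming the failed runs. The threshold is the design decision that matters here, since it determines how much variety the sensor hides from the human.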

Amplification of variety from the human side to effectively influence the agents, for example:

Encode decision rules into agent policies (e.g. AGENTS.md).

Provide knowledge bases with domain or technical specifications.

Distribute control across multiple humans or a team.

Deepen human understanding through training and mental models.

Example: A developer writes a comprehensive DESIGN_PRINCIPLES.md file including specific security and coding style standards. By feeding this guide into the agent’s context, they amplify their influence across the entire code base.
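How a guide file reaches the agent depends on the tooling, and many coding agents pick up files such as AGENTS.md on their own. Where the context has to be assembled by hand, the idea can be sketched as follows. `build_agent_context` and the section headings it emits are assumptions made for illustration, not a convention of any particular agent.

```python
from pathlib import Path
from typing import Sequence


def build_agent_context(task: str, guide_paths: Sequence[Path]) -> str:
    """Prepend every readable guide file to the task so each agent run sees the same rules."""
    sections = []
    for path in guide_paths:
        if path.is_file():  # silently skip guides that do not exist
            text = path.read_text(encoding="utf-8").strip()
            sections.append(f"## {path.name}\n{text}")
    guidance = "\n\n".join(sections) if sections else "(no guide files found)"
    return f"# Standing guidance\n{guidance}\n\n# Task\n{task}"
```

The amplification comes from reuse: the developer writes the rules once, and every task handed to an agent carries them along.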

The term harness engineering has recently been coined for this attenuating and amplifying. It means creating a “harness” for the high variety inside an agentic system. If you want to dive deeper, my colleague Birgitta Böckeler wrote an article with practical suggestions on how to shape these harnesses for coding agents.

Finding the right attenuations and amplifications requires the management side to have a good enough model of the agentic system. This is the Conant-Ashby theorem: “Every good regulator of a system must be a model of that system.” So humans who want to steer an agentic system must have a good understanding of how it’s supposed to work. By designing and configuring the agents for the SDLC, the human will already have an initial model. In reality, the system may differ from this model, e.g. drift away from it or fail in certain unforeseen cases. That’s why we need another element to make “human-on-the-loop” successful.

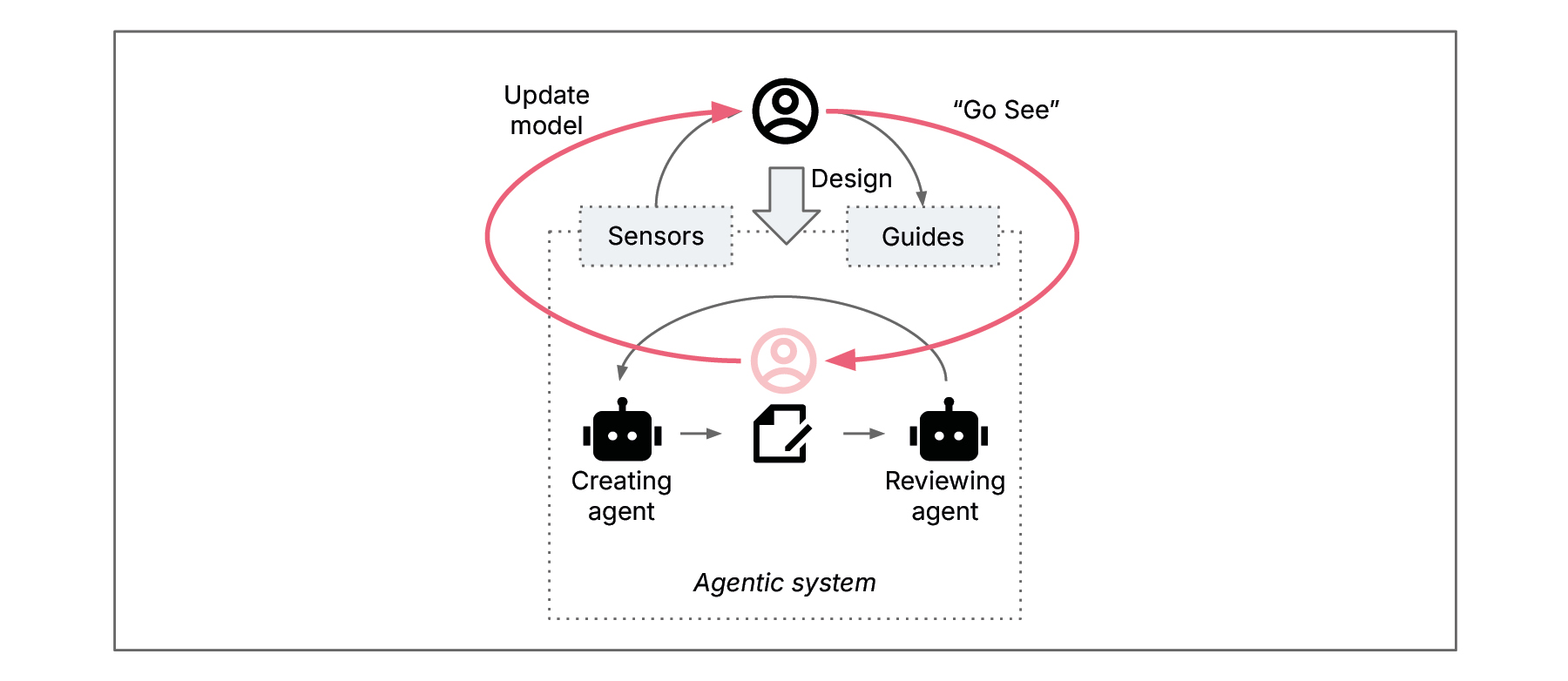

"Go see" in the agentic system

I want to introduce another successful management practice from Lean that we can connect to cybernetics to complete the picture. If the meta level is where we steer, then Gemba is where we verify that our steering is actually connected to reality. Gemba describes the actual place where value is created — the agents working with the code.

In Lean management, "Go See" or Genchi Genbutsu is the practice of leaving the management ivory tower and going to Gemba. For the engineer of an agentic SDLC, this means that while they are primarily a manager of the agentic system, they cannot lead with sensors and guides alone. Engineers must occasionally descend back into the "problem-solution level".

Applying “Go See” with “Human-on-the-Loop”

“Go See” is necessary because it keeps the model the engineer uses for steering up to date. LLMs can produce code that looks right, passes unit tests and fulfills basic requirements, but that may introduce decay or contain hallucinations that sensors won't flag.

Sensors attenuate variety through aggregation and filtering; “Go See” deliberately reverses this, and the human steps back into reality. With their engineering expertise and real-world experience in software engineering, they identify patterns of failure, detect code smells and get a sense of whether agents are doing things right. By performing manual spot-checks on specific code modules, the engineer uses their human technical intuition as the ultimate sensory input.

Example: Consider a developer who usually looks at a dashboard with success metrics and refines the guides for agents. However, once a week, they perform a "Go See" session by randomly selecting three complex PRs generated by the coding agent and performing a deep-dive manual code review. They might discover that while the code passed all automated tests, the agent has used an inefficient data structure that will cause scaling issues later. The developer then needs to rethink their model of the system and improve the harness to guide the agent towards a more suitable data structure when appropriate. The developer might also adapt the agentic design to detect scalability issues earlier.
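Picking the PRs for such a session is easy to automate, which helps keep the sample honest rather than convenient. The sketch below is hypothetical throughout: the `PullRequest` fields, the `complexity` proxy and the cut-off of 300 are assumptions, and a real setup would pull this data from the team's code-hosting platform.

```python
import random
from dataclasses import dataclass
from typing import List, Optional, Sequence


@dataclass(frozen=True)
class PullRequest:
    """Minimal PR record. The fields are hypothetical, not a real forge API."""
    number: int
    changed_lines: int
    files_touched: int


def complexity(pr: PullRequest) -> int:
    """Crude, assumed proxy for complexity; replace it with whatever signal you trust."""
    return pr.changed_lines + 50 * pr.files_touched


def pick_for_go_see(
    prs: Sequence[PullRequest],
    sample_size: int = 3,
    min_complexity: int = 300,
    seed: Optional[int] = None,
) -> List[PullRequest]:
    """Randomly choose up to sample_size complex PRs for a manual deep-dive review."""
    candidates = [pr for pr in prs if complexity(pr) >= min_complexity]
    rng = random.Random(seed)  # a fixed seed makes a given week's pick reproducible
    return rng.sample(candidates, min(sample_size, len(candidates)))
```

Random selection matters because the dashboards already show where the sensors point; the session is meant to surface what they miss.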

Calibrating the steering in this way ensures the sensors aren't filtering out the truth, and that the guides are actually having the intended effect in the code. “Go See” is a corrective; it should be a fixed feedback loop in the “human-on-the-loop” system. It’s actually an application of double-loop learning.

Conclusion

The transition from "human-in-the-loop" to "human-on-the-loop" is the evolution of the software engineering profession. Exploring cybernetics and the management craft provides the foundational expertise. It explains how new agentic systems can be shaped so they’re still controlled by humans and create reliable and consistent results.

The engineers and architects of the agentic systems are essentially becoming "cybernetic system designers". They will be successful if they are:

Applying cybernetics, using the concept to better understand how to balance variety.

Learning to steer, adopting appropriate practices to manage the agents.

Practicing “Go See”, regularly going to Gemba and updating the model as required.

It also means that effective steering alone is not sufficient. "Go See" still demands technical expertise and intuition. Therefore, the question remains: how can we preserve and cultivate these essential skills?

Disclaimer: The statements and opinions expressed in this article are those of the author(s) and do not necessarily reflect the positions of Thoughtworks.