The IoT Testing Atlas

For instance, I recently worked on a product in which a mobile app talks to a connected machine. The many states that these two devices could be in made it particularly challenging to come up with test scenarios. I would like to present the framework that I found useful when testing this IoT-based product. This framework, which I’ve called the IoT Testing Atlas, helps manage the permutations of states, which can get extremely complex in IoT deployments.

IoT Testing Parameters

When we consider some common states or variants that are tested in a simple web application, we see four basic ones:

- Server down

- HTTP timeouts

- Slow networks

- Authorization and authentication errors

When testing any internet application, we need to be mindful of these four states. Now, consider a mobile application. It’s imperative that we keep in mind the additional set of states and variants that arise from operating in a mobile environment. These states include:

- Offline mode

- Online mode

- Activity kill

- Background behavior

- Languages

- Location

Now, if we look at the variety of states that ‘connected machines’ introduce, we see four new states:

- Machine Wi-Fi off

- Machine Wi-Fi on

- Machine is busy

- Machine is sleeping

This means that even with the given set of example states, there are as many as 96 (4 × 6 × 4) combined states that the entire system can be in at any point in time.
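The count above can be checked by enumerating the combinations directly. This is a minimal sketch: the state labels are copied from the lists above, while the grouping names (`NETWORK`, `MOBILE`, `MACHINE`) and the function name are only illustrative.

```python
from itertools import product

# State labels copied from the three lists above; the grouping names are illustrative.
NETWORK = ["server down", "HTTP timeout", "slow network", "auth error"]
MOBILE = ["offline mode", "online mode", "activity kill",
          "background behavior", "languages", "location"]
MACHINE = ["Wi-Fi off", "Wi-Fi on", "busy", "sleeping"]


def all_system_states():
    """Return every (network, mobile, machine) combination as a tuple."""
    return list(product(NETWORK, MOBILE, MACHINE))


states = all_system_states()
print(len(states))  # 4 * 6 * 4 = 96
```

Adding a single state to any one list multiplies the total rather than adding to it, which is why the combinations grow so quickly.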

None of these states can be treated as a standalone entity, because state transitions within the system introduce additional constraints. For example, the state change from ‘offline’ to ‘online’ is likely to trigger a set of events.
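To get a feel for how transitions add to the workload, one can list the ordered pairs of states within a single group. The sketch below assumes, purely for illustration, that any mobile state can follow any other; in a real product only some of these transitions would be meaningful.

```python
from itertools import permutations

# Mobile states copied from the list above.
MOBILE = ["offline mode", "online mode", "activity kill",
          "background behavior", "languages", "location"]


def transitions(states):
    """Every ordered (from_state, to_state) pair where the two states differ."""
    return list(permutations(states, 2))


mobile_transitions = transitions(MOBILE)
print(len(mobile_transitions))  # 6 * 5 = 30 ordered pairs
```

The pair `("offline mode", "online mode")` is the transition mentioned above, and it is distinct from the reverse direction, which may trigger a different set of events.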

The above set of parameters is only the tip of the proverbial iceberg. As we go deeper into specifics, connecting the different states to logical scenarios could become overwhelming.

When I tried using existing web-based techniques such as all pairs, equivalence partitioning, boundary value analysis and similar, I found that they did a good job of deriving scenarios with a variable data set for a static system. These techniques apply elimination logic to arrive at an optimal data set for testing.

For example, the all pairs technique advocates eliminating repeated data-pair combinations. But when we apply the same technique to the variable states of the system to derive scenarios, the discarded system states may leave us with a non-communicating system, which makes the derived scenarios unreliable. Nevertheless, these techniques still work well inside a single unit of the IoT system.
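To make the elimination concrete, here is a rough sketch of a greedy all-pairs reduction applied to the example states. It is one simple way to build a pairwise suite, not the algorithm of any particular tool, and the function names are hypothetical. Every pair of states from two different groups still appears in some kept combination, but many full three-way combinations are dropped.

```python
from itertools import combinations, product

NETWORK = ["server down", "HTTP timeout", "slow network", "auth error"]
MOBILE = ["offline mode", "online mode", "activity kill",
          "background behavior", "languages", "location"]
MACHINE = ["Wi-Fi off", "Wi-Fi on", "busy", "sleeping"]


def pairs_of(row):
    """All cross-group value pairs covered by one full combination."""
    return {((i, row[i]), (j, row[j]))
            for i, j in combinations(range(len(row)), 2)}


def greedy_all_pairs(params):
    """Repeatedly keep the combination that covers the most uncovered pairs."""
    candidates = list(product(*params))
    uncovered = set().union(*(pairs_of(row) for row in candidates))
    suite = []
    while uncovered:
        best = max(candidates, key=lambda row: len(pairs_of(row) & uncovered))
        suite.append(best)
        uncovered -= pairs_of(best)
    return suite


suite = greedy_all_pairs([NETWORK, MOBILE, MACHINE])
dropped = set(product(NETWORK, MOBILE, MACHINE)) - set(suite)
print(len(suite), "combinations kept,", len(dropped), "dropped")
```

The combinations in `dropped` are exactly the system states that never get exercised together, which is the risk described above when the parameters are live system states rather than input data.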

Which is why I saw the need for an IoT Testing Atlas.

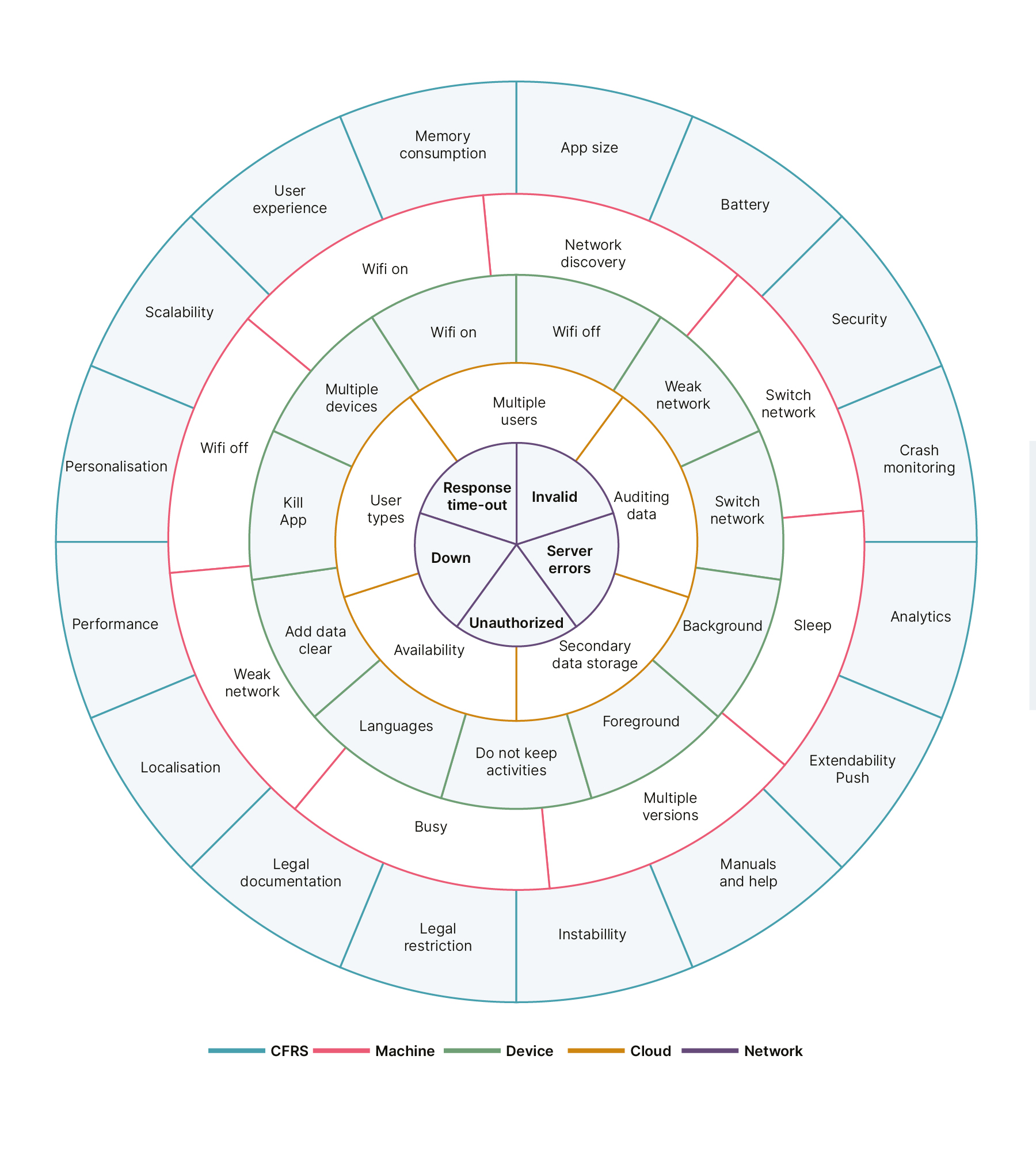

Visualising the Atlas

Most of us would have flipped through an atlas in geography class. The atlas, in my context, outlines all of the potential system parameters and derives the meaningful scenarios that are required to test a feature.

Every system within the product is captured as a circle with its n states. The logical next states are placed close to each other. These circles are rotatable entities. I have included a separate circle for the NFRs too, because they easily slip our minds when we test such complex integrations.
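For readers who think in code, a circle can be modelled as an ordered list of states plus a pointer to the current one. This is only a sketch of the idea; the `Circle` class and its method names are hypothetical and not part of any tool.

```python
from dataclasses import dataclass


@dataclass
class Circle:
    """One system in the Atlas: an ordered ring of states with a current position."""
    name: str
    states: list
    position: int = 0

    @property
    def current(self):
        return self.states[self.position]

    def rotate(self, steps=1):
        """Move to a neighbouring state, wrapping around at the end of the ring."""
        self.position = (self.position + steps) % len(self.states)
        return self.current


machine = Circle("Machine", ["Wi-Fi off", "Wi-Fi on", "busy", "sleeping"])
print(machine.current)   # Wi-Fi off
print(machine.rotate())  # Wi-Fi on
```

Keeping the states in a deliberate order mirrors the idea of placing logical next states close to each other, so a single rotation step corresponds to a plausible real-world change.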

The following image is my view of the IoT Testing Atlas:

Let’s look at a few scenarios of using the Atlas, and derive logical scenarios for some features that involve only mobile and machine interaction. This means our focus circles are Device, Machine and Network.

Fix the mobile device and the machine in the Wi-Fi On state. On rotating the Network circle, we get the following scenarios:

- An unauthorized user tries to access the machine, which triggers the ‘Access Denied’ error message on the app

- Server down and server errors should trigger appropriate business error messages like ‘Something went wrong. Try again later.’

- Response timeouts could do one of two things: either re-trigger the same request while showing a prolonged loading icon, or show an error message similar to the one listed above

- Invalid requests should trigger messages such as ‘Your app needs to be updated’

Continue to keep the mobile device on Wi-Fi On and move the machine circle, a step at a time:

- When the machine is offline, the app should indicate ‘check the machine for network connections’

- When the machine is busy, an alert is needed, such as ‘unable to complete your request as the machine is busy’

- When the machine is sleeping or on another network, a ‘machine not found’ or similar message should be displayed

- Now, switching the machine to the right network should enable the connection between mobile and machine again

Switch the machine circle to Wi-Fi On and rotate the mobile circle to find more scenarios:

- When the mobile goes offline, the app should show appropriate messages or behavior (such as disabling the button)

- When the mobile comes back online, the app should make the appropriate calls to re-establish the connection with the machine

- When the mobile switches networks from Wi-Fi to 3G, what would you identify as the appropriate action?

- When a user gets a call and puts the app in the background, should they still see the completed request, or are they back to square one?

- Since Android may kill an app that has been in the background for a while, should the user’s last screen status be saved?

- Apps with localization scope need verification at every scenario level
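The three walks above follow the same pattern: hold every circle but one at a baseline state and step through the remaining circle. A minimal sketch of that pattern follows. The state labels loosely follow the scenarios above, while the ‘OK’ network state, the baseline, and the function names are assumptions made for illustration.

```python
# Focus circles for a mobile-and-machine feature. Labels loosely follow the
# scenarios above; the "OK" network state is an assumed healthy baseline.
CIRCLES = {
    "Device": ["Wi-Fi on", "offline", "Wi-Fi to 3G switch",
               "app in background", "app killed", "other language"],
    "Machine": ["Wi-Fi on", "offline", "busy", "sleeping", "on another network"],
    "Network": ["OK", "unauthorized user", "server down",
                "response timeout", "invalid request"],
}
BASELINE = {"Device": "Wi-Fi on", "Machine": "Wi-Fi on", "Network": "OK"}


def rotate_one(circles, rotating, fixed):
    """Yield one scenario per state of `rotating`, holding the others at `fixed`."""
    for state in circles[rotating]:
        scenario = dict(fixed)
        scenario[rotating] = state
        yield scenario


def walk_atlas(circles, baseline):
    """Rotate each circle in turn around the baseline, skipping duplicates."""
    scenarios = []
    for name in circles:
        fixed = {k: v for k, v in baseline.items() if k != name}
        for scenario in rotate_one(circles, name, fixed):
            if scenario not in scenarios:
                scenarios.append(scenario)
    return scenarios


scenarios = walk_atlas(CIRCLES, BASELINE)
print(len(scenarios))  # 14, versus 6 * 5 * 5 = 150 full combinations
```

Unlike a blind pairwise reduction, every scenario produced this way keeps the other systems in a known, working state, so each one remains a meaningful end-to-end situation to test.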

On a practical level, when a team testing an IoT product has more than one QA, this Atlas provides a common point of reference. It enables efficient collaboration because it covers the unique requirements of IoT testing, namely the permutations of tools, devices, scenarios and protocols, in a comprehensive manner.

Disclaimer: The statements and opinions expressed in this article are those of the author(s) and do not necessarily reflect the positions of Thoughtworks.