Sundar Pichai, CEO of Google and its parent firm Alphabet Inc., has called for responsible regulation. He says organizations must strike a balance between setting up safeguards and fostering innovation, built on the certainty that comes from a legal framework. A similar sentiment emerged in our survey, conducted in collaboration with MIT Technology Review Insights earlier this year. Organizations, we found, understand that their technology can be deemed responsible only when it fully complies with existing regulations.

Interestingly, organizations also expect a reward for being compliant. They anticipate ROI, improved efficiency, enhanced security and better relationships with customers, partners and employees.

To reap all these benefits, can the responsibility of defining the do’s and don’ts be left with the regulator alone?

It depends.

The dependence

When Buy Now Pay Later (BNPL) emerged in Asia, it was hailed as a solution that made intelligent use of alternate data (such as purchase history) to enable the financial inclusion of those without a credit history.

Because one of a regulator’s key functions is to protect the public interest, it has to monitor the ecosystem for corner cases, and regulators often find market players over-extending the use of their licenses. In India, for instance, the regulator disallowed the extension of credit on pre-paid payment instruments by non-bank issuers. This forced many players to freeze or pause their operations and pushed BNPL players further into uncertainty.

This ambiguity doesn’t bode well for businesses. Corporate leaders are therefore demanding certainty in the legal framework, which can only be achieved if regulators front-run the markets, especially for emerging technologies.

Even then, complexities arise where technologies, sectors and organizations’ ways of working cross over. For instance, regulators define frameworks for quality, safety, governance, privacy, consent and ownership of data for AI in healthcare. However, different regulations govern diversity and inclusion, and their implications for the product. These could include issues such as unethical facial recognition or biased algorithms. Perhaps the ideal solution would be for organizations to take responsibility for diversity in their ranks and shape their AI models to be inclusive too. Realistically, though, that is still a long way off.

We believe a regulator’s actions on a particular regulatory framework depend on:

The technology

The regulator’s view on it

How the market players are putting it to use

And the cross-over of other technologies, sectors and organizations’ ways of working

Regulatory mitigation

In response to the above dependencies and complexities, regulators are working on several measures. Chief amongst them is creating a responsible tech ecosystem. It includes the following building blocks:

Collaborative data platforms

Currently, there is a lack of information-sharing amongst financial institutions, which leads to fraud-prevention models that are either unexplainable or do not yield the desired results. To address this, regulators are creating data platforms and acting as fiduciaries for them, which will aid in formulating appropriate regulations as the ecosystem evolves.

A good example is COSMIC (Collaborative Sharing of ML/TF Information & Cases), a new digital platform from the Monetary Authority of Singapore (MAS), co-created with six major commercial banks in Singapore. With COSMIC, financial institutions can securely share information when customers or transactions cross material risk thresholds. Such information-sharing will help financial institutions identify and disrupt illicit networks, safeguard the Singapore financial center and enhance stability and trust in the system.

Regulatory sandboxes

Sandboxes encourage innovation while building familiarity with emerging technologies such as Web 3.0.

Recently, the Dubai International Financial Center (DIFC), a special economic zone in the UAE regulated by the Dubai Financial Services Authority, announced a new ‘metaverse platform’ where developers can test their technology in a physical studio. The platform also addresses policy development and legislation on open data, digital identity and company law frameworks in the metaverse.

The European Commission (EC) launched a blockchain regulatory sandbox in February this year, establishing a pan-European framework for dialogues aimed at increasing legal certainty in the space. The EC is also incentivizing regulators with an award for the most innovative regulator in the sandbox.

Multiple regulators are launching sandboxes under “cooperation agreements” to foster fintech.

These sandboxes aim to create a regulatory environment that keeps pace with technological innovation, give regulators experience in the oversight of new technologies and help them develop frameworks. As the World Bank says, “Sandboxes provide the empirical evidence needed to substantiate decisions.”

Organizations should approach these sandboxes not only as boundaries to comply with, but also as a means to realize the regulator’s vision.

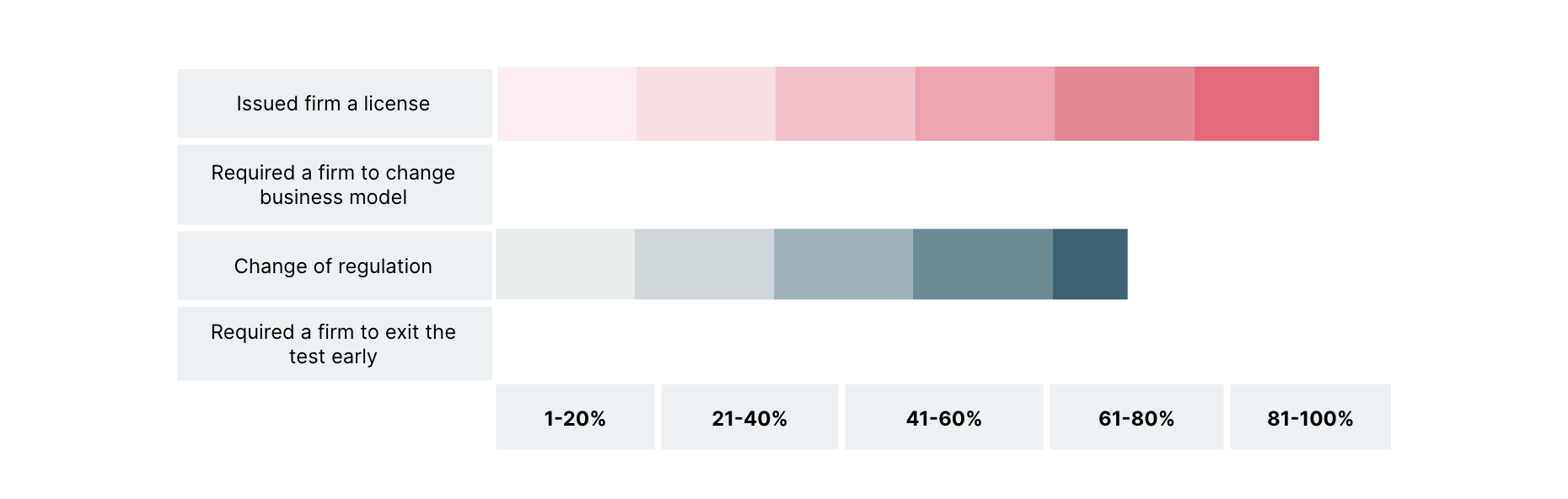

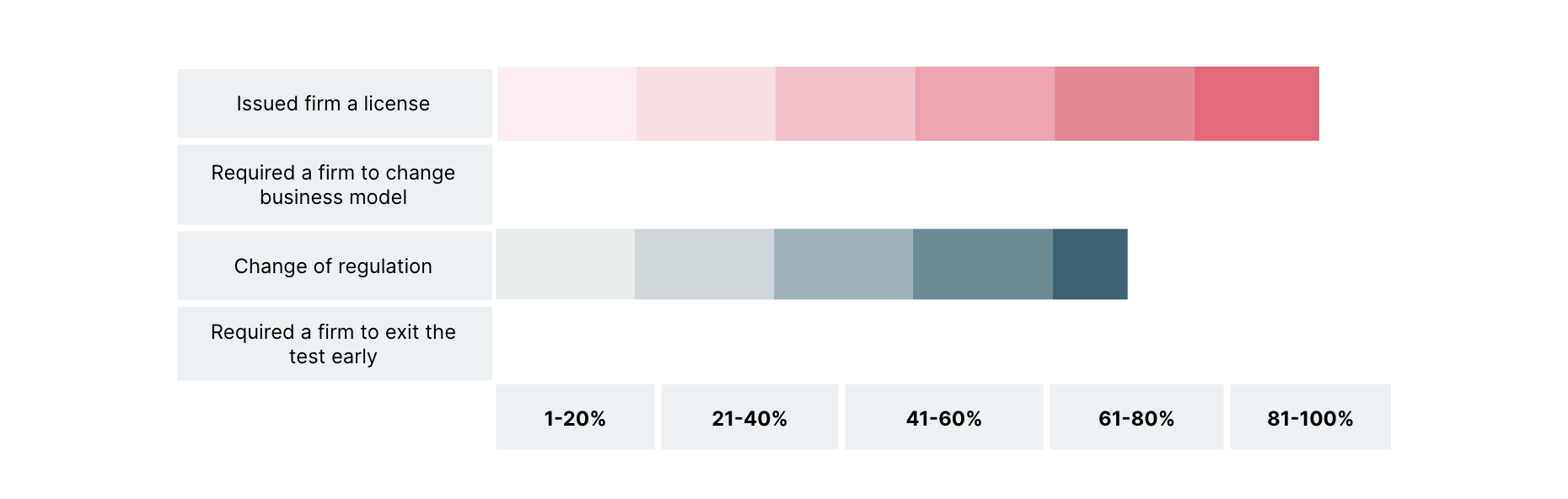

Fig 1: From CGAP, World Bank Group - successful sandbox tests result in full authorization and/or regulatory change

Supervisory technology

Regulatory technology (regtech) is typically two-fold: compliance tech when regulated firms use it and supervisory tech when regulators use it. As regulators monitor and enforce compliance, regtech presents new opportunities to formulate frameworks.

For instance, the AI activities of market firms are today governed under disparate regulations covering areas such as data protection, consumer protection and financial services. However, risks around unfairness, lack of explainability and lack of accountability are yet to be addressed. These regulatory gaps leave risks unmitigated, and supervisory technology can help address them. In a perfect world, even without prompts from regtech, organizations would adopt measures to close these gaps and work towards diversity and transparency, which have a direct impact on their AI models.

Not every innovation, or the disruption that follows it, needs to be welcomed. We see this in the raging debate over AI and art. Any regulator has a moral obligation to react to emerging technology, even if only after the fact. This makes a case for market players that harness these technologies to adopt a principles-based approach rather than wait for rules to be formulated.

By leveraging collaborative data platforms, sandboxes and supervisory tech, organizations can:

Enhance the regulatory dialogue between regulators and innovators by offering a trusted environment for them to engage in

Appreciate activity-based risks and develop complementary regulatory frameworks

Influence policies across multiple impacted sectors and benefit from working in cross-sector (and cross-border) ecosystems with evident interdependence for a public use case

In effect, a responsible tech ecosystem puts market players in the co-pilot’s seat alongside regulators, enabling responsible regulation that is both pragmatic and collaborative.

Disclaimer: The statements and opinions expressed in this article are those of the author(s) and do not necessarily reflect the positions of Thoughtworks.