Incorporating Security Best Practices into Agile Teams

Security is a big deal, and businesses are the ones to bear the cost.

Every day, it seems like we read another headline about a large data breach affecting major organizations and the many people they serve. As a result of these breaches, websites such as haveibeenpwned.com provide searchable information on many of the Internet’s largest breaches. When a big data breach happens, organizations suffer great losses, both financially and in reputation.

Current state—the security sandwich

In spite of the known risks of security breaches, the current standard for security across our industry is suboptimal, in particular during the process of upgrading or delivering a new technology feature. Teams often do a lot of planning and analysis before development and after a feature is delivered. In some cases, penetration testers check for vulnerabilities during the process; however, it is less common for security processes to be in place throughout the actual development process.

We call this the “security sandwich”: lots of up-front planning and discussion around security, plus post-development testing and fixes, but no security in between.

The security sandwich is risky for a number of reasons:

- Feature design, planning, and implementations are done without security in mind;

- The process to catch vulnerabilities is not enhanced;

- The teams do not share responsibility for security; and

- If an incident does occur, you might not be able to recover quickly.

How does an agile process affect security?

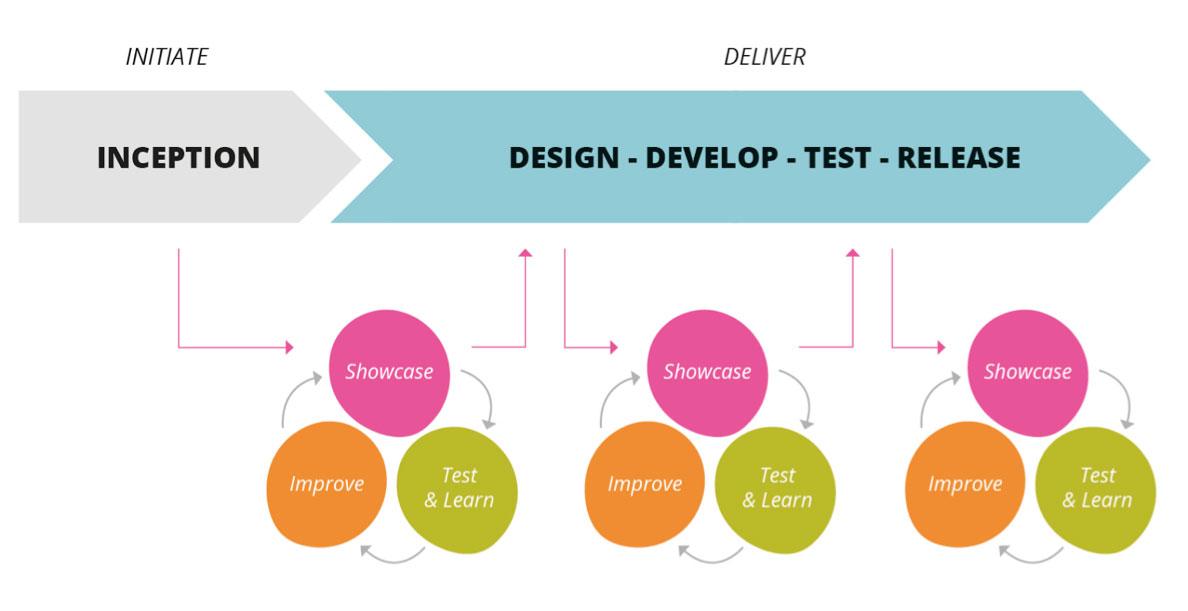

The fast and disruptive nature of today’s business cycles means that organizations must incorporate agile processes in order to remain competitive. In fact, more and more companies are following the Continuous Delivery concept that was first described in the eponymous 2010 book co-authored by Thoughtworks alumni Jez Humble and David Farley. But security wasn’t initially included in the model. We believe that must change.

Security has an important place in the agile process because of the extreme risks that a breach poses to the organization. Consider the Continuous Delivery model below:

When a team incorporates security throughout its agile process, the benefits gained are similar to the qualities gained by incorporating other practices on a continuous basis. These benefits include the following:

- Higher confidence in what is released into production;

- The ability to make changes successfully on a micro basis;

- Better testing and planning; and

- A faster reaction to making improvements or fixes.

The OWASP Proactive Controls recommend verifying security early and often, rather than relying on penetration testing at the end of a process to catch bugs. That’s because the latter approach is prone to missing vulnerabilities, is a manual process, and hinders the ability to release software early and often. Furthermore, waiting until after a feature has been developed to test for bugs increases the cost and time of releasing the product into production, as each bug must be returned to the development team to be fixed before release.

Building Security into the Agile Timeline

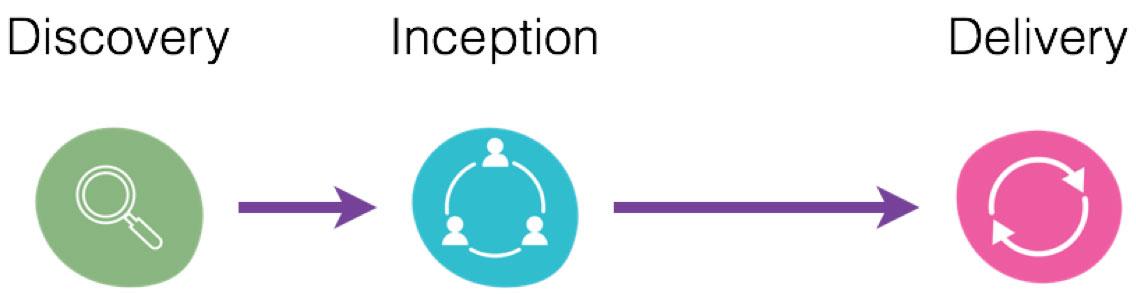

At a high level, a typical agile software delivery project in Thoughtworks consists of several steps that can be organized under three distinct phases: discovery, inception and delivery. Each step is described in greater detail below.

1. Discovery: What is the current state?

The purpose of the discovery phase is to understand the current state of the system. Information that can be gathered in this phase includes:

- A list of existing systems/applications as well as their users, and people who are involved in these systems

- A map of how existing systems talk to one another

- A map of the current path to production

- A review of past incidents/attacks, in order to avoid recurrences in the future

- A review of existing security policies and how they will impact the scope of the project

This information can then be used to inform decisions in the following phases.

2. Inception/Project Kickoff: What do teams need to protect?

The purpose of the project kickoff phase is to understand the following:

- What will be built

- Which people or services must be involved

- Which assets need to be protected

- How attackers might compromise the system

- How potential threats can be prioritized and mitigated

It is vital that this conversation continues throughout the development process as the product evolves, so that the right security measures stay in place at every stage.

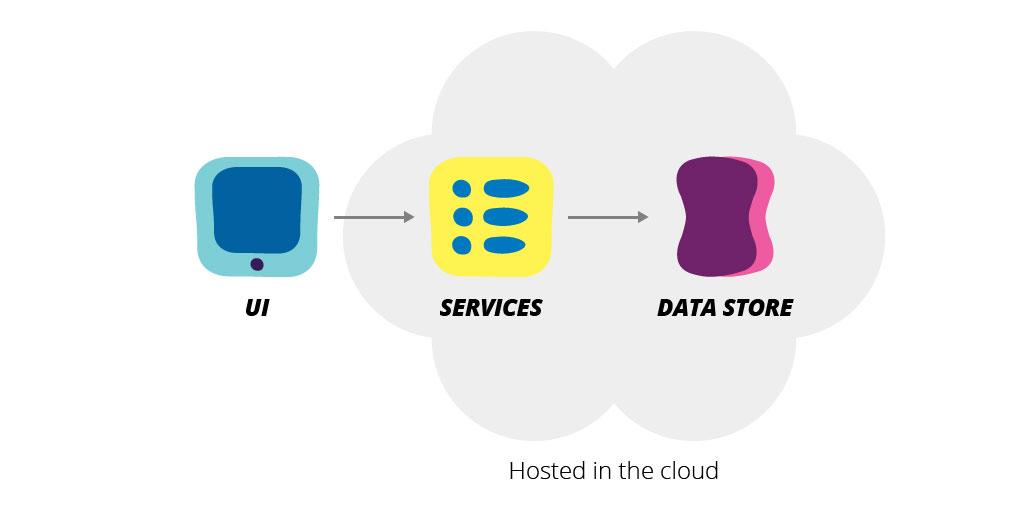

What will be built?

By the end of a project kickoff, your team should be able to start creating high-level architectural diagrams and defining trust boundaries within these diagrams. Teams don’t need a fine-grained API contract or the complete tech stack this early on, but they should have some idea of the major components and the interactions between them. For instance, if we are building a new ecommerce site, we know that there will be a UI that the end user will interact with, a set of services where the business logic will reside, and a data store. Based on past projects, we may also make some initial assumptions about where those components would initially reside (for example, internally in a corporate datacenter or externally in the cloud).

As development progresses, some of these assumptions will prove valid and some will not, so it is important to update this model regularly, because the trust boundaries will change over time. A trust boundary is where data moves from a less trusted source to a more trusted system. One example of a trust boundary is when a user submits a form, as the data is moving from a user-controlled environment to a trusted environment (our server). Wherever data moves across a trust boundary is a good place to focus on taking steps such as modeling threats or codifying validations.
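
To make the idea of codifying validations at a trust boundary more concrete, here is a minimal sketch in Python. It assumes a hypothetical sign-up form with `name` and `email` fields; the field names, the length limit, and the deliberately simple email pattern are illustrative assumptions, not rules from a real project.

```python
import re
from dataclasses import dataclass

# Hypothetical rules for an illustrative sign-up form; adjust to the real domain.
EMAIL_PATTERN = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")
MAX_NAME_LENGTH = 100


@dataclass(frozen=True)
class SignupData:
    """Form data that has crossed the trust boundary and passed validation."""
    name: str
    email: str


def validate_signup(form: dict) -> SignupData:
    """Validate untrusted form input on the server; raise ValueError if invalid."""
    name = form.get("name")
    email = form.get("email")
    if not isinstance(name, str) or not name.strip():
        raise ValueError("name is required")
    if len(name) > MAX_NAME_LENGTH:
        raise ValueError("name is too long")
    if not isinstance(email, str) or not EMAIL_PATTERN.fullmatch(email):
        raise ValueError("email is not valid")
    # Only normalized, validated values are handed to the trusted side.
    return SignupData(name=name.strip(), email=email.lower())
```

The point of the sketch is that code behind the boundary only ever receives a `SignupData` value, never the raw form, so the validation cannot be skipped by accident.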

In short, by the end of this process, a team should have the beginning structure of a high-level architecture and an understanding of the trust boundaries within this architecture.

What is the current threat landscape?

A common saying in security is that “you can’t protect what you don’t understand.” As a result, it is wise during a project kickoff to enumerate the actors (people, resources, and services that are involved in the system), assets (things of value), and attackers that are involved, as well as attack goals and potential vectors that could compromise your system.

Threat modeling and attack trees can be helpful tools to identify threats and increase visibility. We recommend first assessing how common security threats might be relevant to the project, then using threat modeling as a tool to identify threats unique to the project and business domain. For example, if the project is a web application, you should first consider common vulnerabilities inherent to web applications in general, and then use threat modeling to identify and mitigate vulnerabilities specific to your domain, user base, etc.
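
As an illustration of how an attack tree can be kept alongside the code, the sketch below models one in Python. The node structure, the `AND`/`OR` gates, and the example account-takeover tree are assumptions made for this example; real attack trees are usually richer (cost, likelihood, required skill).

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AttackNode:
    """A goal or step in an attack tree (all names here are illustrative)."""
    description: str
    gate: str = "OR"          # "OR": any child suffices; "AND": every child is needed
    mitigated: bool = False   # only meaningful for leaf nodes
    children: List["AttackNode"] = field(default_factory=list)

    def is_achievable(self) -> bool:
        """Return True if an attacker can still reach this goal."""
        if not self.children:
            return not self.mitigated
        results = [child.is_achievable() for child in self.children]
        return all(results) if self.gate == "AND" else any(results)


# Hypothetical tree for a web application with password login.
mfa_bypass = AttackNode("Bypass multi-factor authentication", mitigated=True)
account_takeover = AttackNode(
    "Take over a customer account",
    children=[
        AttackNode("Guess the password by brute force", mitigated=True),
        AttackNode("Phish the user", gate="AND", children=[
            AttackNode("Capture the password on a fake login page"),
            mfa_bypass,
        ]),
    ],
)
```

With both mitigations in place, `account_takeover.is_achievable()` returns `False`; flipping `mfa_bypass.mitigated` to `False` reopens the phishing path, which is the kind of change a team would want to notice when the threat model is updated.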

What can you do about things that could go wrong?

When a threat to your system exists, the ideal solution is to mitigate, eliminate, or transfer the threat. For example, if a threat to the system is that a user’s password can be guessed via a brute force attack, a mitigation could be to require multi-factor authentication or to lock a user out after a number of failed attempts.
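
The lockout mitigation can be sketched in a few lines of Python. This is an in-memory illustration only: the class name, the default of five attempts, and the fifteen-minute window are assumptions, and a real system would persist the counters and combine this with other controls.

```python
import time
from typing import Dict, List, Optional


class LoginThrottle:
    """Lock an account after too many recent failed logins (illustrative sketch)."""

    def __init__(self, max_attempts: int = 5, lockout_seconds: float = 15 * 60):
        self.max_attempts = max_attempts
        self.lockout_seconds = lockout_seconds
        self._failures: Dict[str, List[float]] = {}  # username -> failure timestamps

    def _recent(self, username: str, now: float) -> List[float]:
        # Keep only failures that are still inside the lockout window.
        recent = [t for t in self._failures.get(username, [])
                  if now - t < self.lockout_seconds]
        self._failures[username] = recent
        return recent

    def record_failure(self, username: str, now: Optional[float] = None) -> None:
        now = time.time() if now is None else now
        self._recent(username, now).append(now)

    def record_success(self, username: str) -> None:
        # A successful login clears the failure history.
        self._failures.pop(username, None)

    def is_locked(self, username: str, now: Optional[float] = None) -> bool:
        now = time.time() if now is None else now
        return len(self._recent(username, now)) >= self.max_attempts
```

A login handler would call `is_locked` before checking the password, `record_failure` on a wrong password, and `record_success` on a correct one. The optional `now` argument exists only to make the behavior easy to test.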

These steps are not always available, in which case the business must accept the risk after assessing the cost and potential impact of the threat. For example, social engineering is a hard threat to mitigate completely, as it often depends on the behavior of the user. Therefore, some threats must be accepted, but your team should have sufficient infrastructure in place to detect compromises and quickly recover with minimal lasting damage. To achieve this, make sure that you have regular data backups, robust logging in at least two separate locations, and a secure and effective build pipeline.

3. Delivery: What is the best scenario for a secure delivery process?

Even though each business process is different, there are ways to identify where good security practices can be incorporated on a continuous basis. Below is a summary of each step in the agile story life cycle:

Iteration 0: Environment Set-Up

Most projects will start with an “Iteration 0,” when necessary infrastructure is established before delivering features.

Pre-production environments provide an entry point into more valuable targets, which is why teams must incorporate greater security measures in the entire build pipeline. Exploitable weaknesses in pre-production environments, such as dangerous default settings or unnecessary privileges, are often overlooked. Services often have default passwords or are configured to be accessible without authentication and thus are easy targets. For example, Redis by default is accessible without authentication. While teams might mitigate these risks in production environments, pre-production is often seen as less of a risk, and these kinds of vulnerabilities can be overlooked, creating access points for attackers.

When setting up development environments, make sure that your services are configured to follow the Principle of Least Privilege, in which access to information or services is granted only to people who have a legitimate need for the information. As part of this, review configuration settings for services (such as your CI server) in both production and development environments.
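
One way to make such a configuration review repeatable is a small check that can run in the pipeline. The sketch below scans the text of a `redis.conf`-style file for three directives (`requirepass`, `protected-mode`, and `bind`). The function name and warning messages are our own, the parsing is deliberately naive (it ignores quoting and includes), and it does not account for ACL-based authentication, so treat it as a starting point rather than a complete audit.

```python
from typing import List


def find_risky_redis_settings(config_text: str) -> List[str]:
    """Return warnings for a redis.conf-style text (simplified, illustrative check)."""
    settings = {}
    for raw_line in config_text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        parts = line.split(None, 1)
        key = parts[0].lower()
        value = parts[1].strip() if len(parts) > 1 else ""
        settings[key] = value

    warnings = []
    if not settings.get("requirepass"):
        warnings.append("no password set (requirepass is missing)")
    if settings.get("protected-mode", "yes").lower() == "no":
        warnings.append("protected-mode is disabled")
    if "0.0.0.0" in settings.get("bind", "").split():
        warnings.append("listening on all interfaces (bind 0.0.0.0)")
    return warnings
```

Running the same check against development, staging, and production configuration helps keep pre-production environments from drifting toward weaker settings.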

Iteration 0 is also a good opportunity to begin adding proactive security tools and practices into your development pipeline. SecDevOps (often referred to as Rugged) is an emerging practice that emphasizes this, and we will see a lot of movement in this in the future. We recommend starting out with proven tools that provide dependable behavior in your pipeline, such as OWASP Dependency Check.

Finally, having a streamlined and consistent build pipeline will assist in confidently releasing fixes that have been found in production. Firefox’s “chemspill” release plan is a good model of having sufficient infrastructure in place to recover quickly and confidently from a vulnerability.

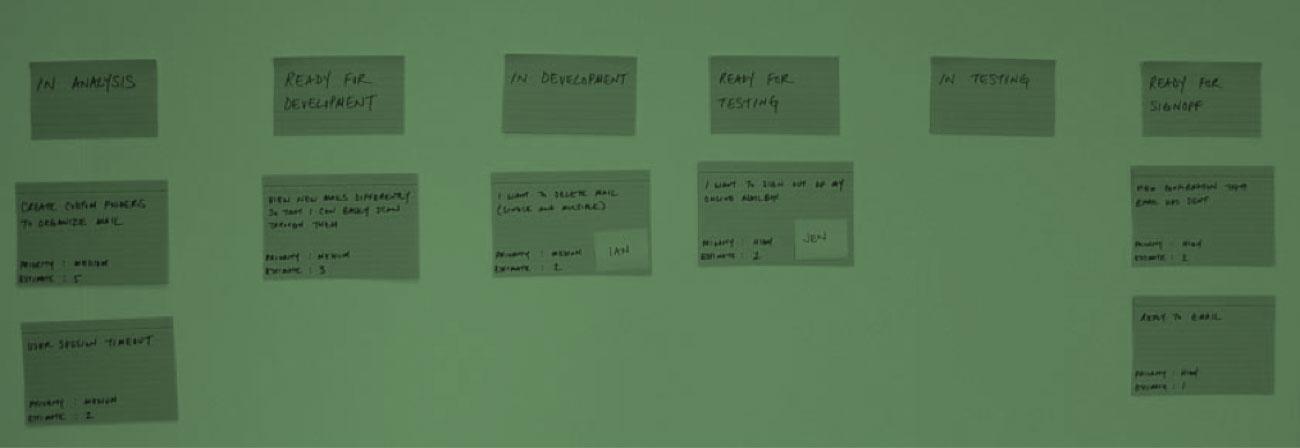

Story Lifecycle

User stories are the base of any agile development. Usually at the beginning of a project, the team sits together and agrees how these stories are going to move along the wall. This simple guide can also contain security criteria that stories need to meet so they can be considered complete.

Ready for development

A story can be defined as ‘Ready for Development’ if a team has identified and analyzed the unique security requirements involved in that story.

For example, if the story involves collecting user data, one question to consider is how this information is handled safely in the story. Is any of this PII (Personally Identifiable Information)? Are there established conventions for handling sensitive user data?

Teams should write acceptance criteria that describe the level of security that needs to be considered in the story. A good example is how we treat unauthorized or unauthenticated users:

Given an unauthenticated user enters the system

When she tries to view her profile

Then she is redirected to the login page
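
An acceptance criterion like this one translates naturally into an automated test. The sketch below uses a stand-in `view_profile` function instead of a real web framework; the function, its return shape, and the `/login` path are assumptions made for illustration. The test functions follow the pytest naming convention but can also be called directly.

```python
from typing import Dict, Optional, Tuple

LOGIN_PATH = "/login"  # hypothetical login route


def view_profile(session_user: Optional[str]) -> Tuple[int, Dict[str, str], str]:
    """Return (status, headers, body) for a request to the profile page."""
    if session_user is None:
        # Unauthenticated requests are redirected, never served profile data.
        return 302, {"Location": LOGIN_PATH}, ""
    return 200, {}, f"Profile of {session_user}"


def test_unauthenticated_user_is_redirected_to_login():
    # Given an unauthenticated user enters the system
    session_user = None
    # When she tries to view her profile
    status, headers, body = view_profile(session_user)
    # Then she is redirected to the login page
    assert status == 302
    assert headers["Location"] == LOGIN_PATH
    assert body == ""


def test_authenticated_user_sees_her_profile():
    status, _, body = view_profile("ada")
    assert status == 200
    assert "ada" in body
```

Keeping the Given/When/Then wording as comments makes it easy to trace each test back to the story it came from.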

Not everything can be written as an acceptance criterion, but we still want to make sure that developers and QAs take these concerns into account when working on the story. They can also be captured in the Cross Functional Requirements (CFRs) of a project, along with others such as performance and usability.

Examples of cross-functional requirements might include requirements for secure and informative logging and error handling, or usage of browser controls. Specifically, one criterion might be sending headers to enable browser protections against common attacks. A good place to start is the OWASP Top 10 Cheat Sheet.
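
As a sketch of what the header criterion could look like in code, the Python helper below merges a set of protective response headers into whatever a handler already returns. The header names are standard, but the specific values are example defaults that each team would need to tune, and the helper itself is hypothetical rather than part of any framework. It also assumes header names are written with consistent capitalization.

```python
from typing import Dict

# Example defaults only; each value should be reviewed for the application at hand.
SECURITY_HEADERS: Dict[str, str] = {
    "Content-Security-Policy": "default-src 'self'",
    "Strict-Transport-Security": "max-age=31536000; includeSubDomains",
    "X-Content-Type-Options": "nosniff",
    "X-Frame-Options": "DENY",
    "Referrer-Policy": "no-referrer",
}


def with_security_headers(headers: Dict[str, str]) -> Dict[str, str]:
    """Return a copy of the response headers with protective defaults added.

    Headers the handler set explicitly take precedence over the defaults.
    """
    merged = dict(SECURITY_HEADERS)
    merged.update(headers)
    return merged
```

Applying the helper in one shared place, such as middleware, means individual stories do not have to remember to set these headers.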

In Development

While software developers are in the process of implementing a feature, both security thinking and testing should remain tightly engrained in the same way that Test-Driven Development has become interwoven into the development lifecycle to ensure software quality.

What does continuous security look like when implementing a feature? Similar to the implementation of other Cross Functional Requirements and user acceptance criteria, developers should implement the feature with security requirements in mind. Furthermore, developers should keep in mind the existing threat model of the system, as well as a baseline security standard in which solutions to common threats are well understood (such as the OWASP Top 10).

For example, if a feature involves writing a new query to a data source, a pair of developers should be aware of existing common threats and how to handle them. These might be queries to a SQL database that must be parameterized to mitigate SQL injection. Or if a system’s threat model includes protecting highly sensitive user data, developers will review how this data is stored, encrypted, and accessed during the implementation of a feature.
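
To show what a parameterized query looks like in practice, here is a minimal sketch using Python's built-in `sqlite3` module. The `users` table, its columns, and the helper names are invented for the example; the important part is that the user-supplied value is passed separately from the SQL text instead of being concatenated into it.

```python
import sqlite3
from typing import Optional, Tuple


def make_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with one illustrative user."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT NOT NULL)")
    conn.execute("INSERT INTO users (email) VALUES (?)", ("ada@example.com",))
    return conn


def find_user_by_email(conn: sqlite3.Connection, email: str) -> Optional[Tuple[int, str]]:
    """Look up a user with a parameterized query.

    The `?` placeholder makes the driver bind `email` as data, so input such as
    "' OR '1'='1" is compared literally instead of being executed as SQL.
    """
    cursor = conn.execute("SELECT id, email FROM users WHERE email = ?", (email,))
    return cursor.fetchone()
```

With the demo database, a lookup for `ada@example.com` finds the row, while a classic injection string simply matches nothing.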

In the development process, teams can also write automated tests against identified security criteria, such as using a user journey test to validate intended behavior if a non-privileged user attempts to access privileged information.
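
A test of this kind can be very small. The sketch below checks that a user without the required role cannot read privileged information; the `User` type, the `finance` role, and the salary report are hypothetical stand-ins for whatever the real system protects.

```python
from dataclasses import dataclass
from typing import FrozenSet


class Forbidden(Exception):
    """Raised when a user lacks the role required for an action."""


@dataclass(frozen=True)
class User:
    name: str
    roles: FrozenSet[str] = frozenset()


def read_salary_report(user: User) -> str:
    """Return the report only for users holding the (hypothetical) finance role."""
    if "finance" not in user.roles:
        raise Forbidden(f"{user.name} may not read the salary report")
    return "salary report"


def test_non_privileged_user_cannot_read_salary_report():
    intern = User("sam")
    try:
        read_salary_report(intern)
    except Forbidden:
        return  # expected: access was denied
    raise AssertionError("expected Forbidden for a user without the finance role")


def test_privileged_user_can_read_salary_report():
    accountant = User("kim", frozenset({"finance"}))
    assert read_salary_report(accountant) == "salary report"
```

Testing the denied path explicitly matters because it is the behavior most likely to regress unnoticed when features are added.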

Ready for testing

The developers know the story is ready for QA when the following steps have been completed:

- The system meets the acceptance criteria

- CFRs have been taken into account and implemented as part of the story, if necessary

- Established code conventions have been met

Teams would normally agree on code conventions that help them create a consistent and easier process to maintain code. How teams handle security should be part of these conventions. For instance, the team should agree on where and how to use server side validations when data is submitted across a trust boundary—even if that data has been validated on the client-side.

In Testing

The QA process is a good point in the development process to validate security requirements. Specifically, your team’s QA process can incorporate checking against attack trees, CFRs and identified security acceptance criteria.

Security can also be incorporated into code retros. If your team follows XP practices, a pair of developers (or QAs) should have worked the story and have done constant peer reviews. However, it is important to share this knowledge with the rest of the team. In most of our projects, teams dedicate time every week to code reviews. In these sessions, the entire technical team discusses implementations and patterns introduced during the week, gives feedback and suggests improvement. These sessions can also include how the story has been implemented from a security perspective.

Production

Once a feature has gone to production, it is important to ensure that a sufficient monitoring infrastructure is in place to detect any attacks and compromises. For this, log aggregation and network monitoring tools such as ELK, Splunk, LogWatch or fail2ban are important.
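
To illustrate the kind of signal such monitoring looks for, the following sketch counts failed logins per IP address in a list of log lines. The log format, the `LOGIN_FAILED` marker, and the threshold are assumptions made for this example. It is a toy version of the idea, not a description of how any of the tools above work internally.

```python
import re
from collections import Counter
from typing import Iterable, List

# Hypothetical log format: "<timestamp> LOGIN_FAILED user=<name> ip=<address>"
FAILED_LOGIN = re.compile(r"LOGIN_FAILED user=(\S+) ip=(\S+)")


def suspicious_ips(log_lines: Iterable[str], threshold: int = 3) -> List[str]:
    """Return the IPs with at least `threshold` failed logins, sorted alphabetically."""
    failures = Counter()
    for line in log_lines:
        match = FAILED_LOGIN.search(line)
        if match:
            failures[match.group(2)] += 1
    return sorted(ip for ip, count in failures.items() if count >= threshold)
```

In practice the same question would usually be expressed as a query or alert rule in the team's log aggregation tool, with the result feeding an alert or an automated block.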

In addition, it is vital to develop a sufficient incident response plan in the event that an attacker is successful in compromising your system. This response plan should consider how to notify any impacted users, an internal escalation plan, and how to ensure this vulnerability won’t occur again in the future.

Similarly, it is a good practice to maintain a record of architectural and technical decisions made in the project for future reference. These include documenting attacks and incidents so a team can learn from them and apply the findings in the current or future projects.

Continuous Improvement

As with any agile process, teams can learn and improve in an iterative manner. Specifically, teams should continuously update their threat model and attack trees as knowledge grows about the product, the systems that are being built, and the interaction between the users and these systems.

We also recommend continuous collaboration between a development team and a security team or penetration tester throughout the development process, not only at the end of developing a feature and before releasing to production. For example, a penetration tester might join iteration planning meetings or work with teams to identify requirements for acceptance criteria. With this approach, we can avoid “throw over the wall” testing and ideally eliminate bugs before the feature is developed.

Going forward: What to start with

From our experience, it can be daunting for teams to decide where to begin after hearing a long list of best practices. Doing anything but 100 percent can feel like failure, yet the bar can seem impossibly high. Here, we recommend that teams start with small, high-impact practices, iterate, and evolve.

1. Develop code conventions for OWASP Proactive Controls.

Having a plan for how to proactively mitigate general vulnerabilities is helpful, as attackers typically start attacking a system by scanning for the most common vulnerabilities using widely available tools such as ZAP or Metasploit. Furthermore, we recommend following well-understood mitigations when possible, as this reduces unpredictability and the chance of unforeseen bugs in the implementation.

2. Have security acceptance criteria in relevant stories.

If there are security criteria unique to a feature that are not covered by the Cross Functional Requirements (CFRs), we recommend capturing these criteria in stories and validating them in the QA process.

3. Have an incident response plan.

Your team should identify 1) who should be involved in the fix; 2) who within the organization should be notified of the breach; 3) when and how users should be notified. Furthermore, everyone on the team, and anyone else potentially involved, should be aware of this plan and understand their roles and responsibilities.

4. Build security into your pipeline.

A good place to start is automating security best practices in your pipeline. Static and dynamic analysis tools can help identify vulnerabilities that were missed during development and testing. Automated checks for libraries that need to be updated are also fairly simple to include in the pipeline. Keep in mind that your team must have a plan for how to update external dependencies when needed.
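
As a rough sketch of what an automated dependency check does, the Python below compares pinned versions against a list of minimum safe versions and reports anything that is behind. The library names and version numbers are made up, and the version parser only handles purely numeric dotted versions. A real pipeline would rely on a maintained tool and vulnerability database instead of a hand-written list.

```python
from typing import Dict, List, Tuple


def parse_version(text: str) -> Tuple[int, ...]:
    """Turn '2.10.1' into (2, 10, 1); assumes purely numeric dotted versions."""
    return tuple(int(part) for part in text.split("."))


def is_older(current: str, minimum: str) -> bool:
    """Return True if `current` is strictly older than `minimum`."""
    a, b = parse_version(current), parse_version(minimum)
    width = max(len(a), len(b))
    # Pad with zeros so that '1.0' and '1.0.0' compare as equal.
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a < b


def outdated_dependencies(pinned: Dict[str, str],
                          minimum_safe: Dict[str, str]) -> List[str]:
    """List pinned dependencies that fall below their minimum safe version."""
    findings = []
    for name, minimum in sorted(minimum_safe.items()):
        current = pinned.get(name)
        if current is not None and is_older(current, minimum):
            findings.append(f"{name} {current} is below the minimum safe version {minimum}")
    return findings
```

A pipeline step could fail the build whenever `outdated_dependencies` returns a non-empty list, which turns the update plan into something the team is prompted to act on.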

What to grow into

1. Threat modeling

It can take time to learn how to thoroughly identify the potential holes in a system. First, establish a baseline security standard from established standards like ASVS, and then use threat modeling as a tool to identify additional vulnerabilities unique to the system.

2. Good security culture

Overall, the goal should be for security to be a part of a team’s everyday process and culture, similar to the movement to incorporate Test Driven Development (TDD) and DevOps as continuous practices. Overlooking security can have catastrophic consequences, and the more that teams incorporate good security practices into their everyday workflow, the better they can manage vulnerabilities before significant damage occurs.

To this end, teams should start with a few important practices, have frequent conversations about how to improve, and iterate and adopt additional security practices gradually.

Conclusion

Until recently, security has often been treated as an afterthought in the software development lifecycle. However, due to major recent security breaches, teams are investing efforts in changing the status quo, to incorporate security practices into the process of updating a product or system.

Agile methodologies have provided teams with an excellent process for incorporating practices continuously in every stage of the development lifecycle. We recommend approaching security practices in a similar manner, by developing a strong culture and standard practices throughout the development lifecycle. But as with any transformation or adoption, this process can be long and difficult, and there is no secret formula. As a result, it is vital for all teams to take steps toward security now: introduce practices where they make the most sense, evaluate the results of these changes, learn from them, adapt, and then repeat.

In this episode of the Thoughtworks Beacon Podcast, Thoughtworkers Jonny LeRoy and Chelsea Komlo talk about security sandwiches and how security fits into the development process of an agile team.

Disclaimer: The statements and opinions expressed in this article are those of the author(s) and do not necessarily reflect the positions of Thoughtworks.