You’re ready to move to data mesh, but you’ve never done federated delegation of governance, particularly regulatory, compliance and privacy governance. How can you upskill people so that you set them up for success? How can you loosen your grip on the strategic reins without losing global oversight? Is it possible to empower colleagues to make informed choices around privacy, rather than surveilling, instructing and restricting them from a central control tower?

Federate privacy, federate success

To federate privacy, you’ll have to rethink your privacy initiatives. Hopefully your privacy team has already been thinking through privacy by design, creating champions across the organization and dispersing knowledge. To support a properly functioning privacy organization, you need ambassadors across the organization who not only deeply care about privacy, but also understand overall organizational standards, risk evaluation, and mitigations for sensitive data. If you’ve already designed this type of governance from the ground up, you’re in a good place to move to federated governance.

If you don’t already have a strong privacy community, there are some initial steps you can take as you move from a centralized model to a federated one. Similar to any governing body, you need to create documented and aligned standards and/or principles. (If you haven’t yet built privacy standards or principles for your organization, a good starting place is reviewing the core principles of GDPR and the NIST Privacy Framework.) What exactly is your guidance for data owners on how data should be collected, assessed, stored, accessed and used? How are privacy risk assessments managed and who should be involved? Who is in charge of monitoring and accounting for held privacy risks? Is the standards and assessment process well outlined and repeatable? How are privacy and security mitigations documented, assessed and deployed? Who performs internal auditing and new policy design, and how do they work with data and product owners to do so?

If these questions and their answers are all defined in a document or binder that you sent around only to be met with a wall of silence, you probably need a federated approach sooner rather than later. By approaching privacy questions in a federated manner, you will be able to work in a more agile way: iterating, learning continually and incorporating feedback.

So, what exactly does agile privacy look like?

Develop agile privacy initiatives

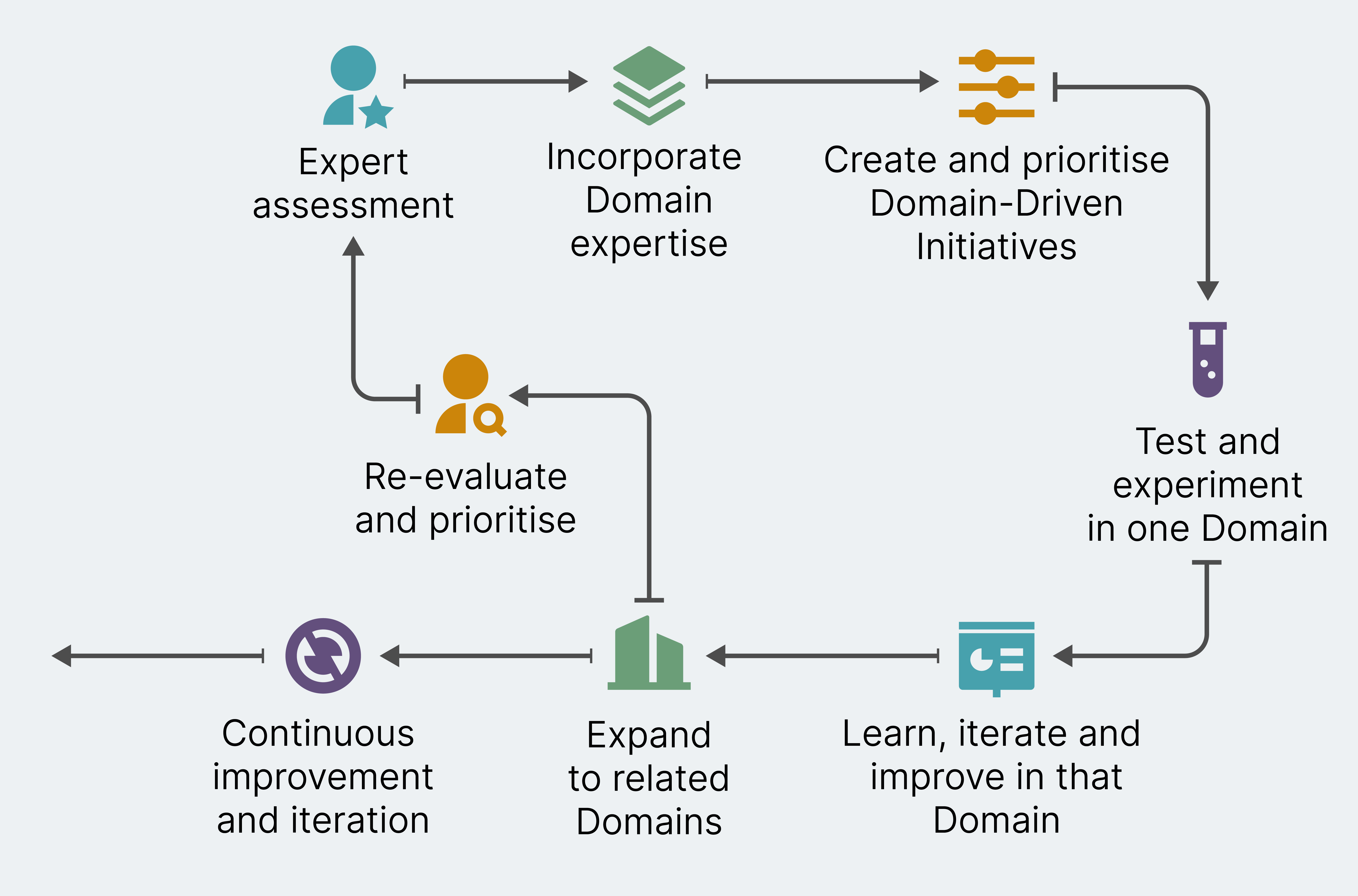

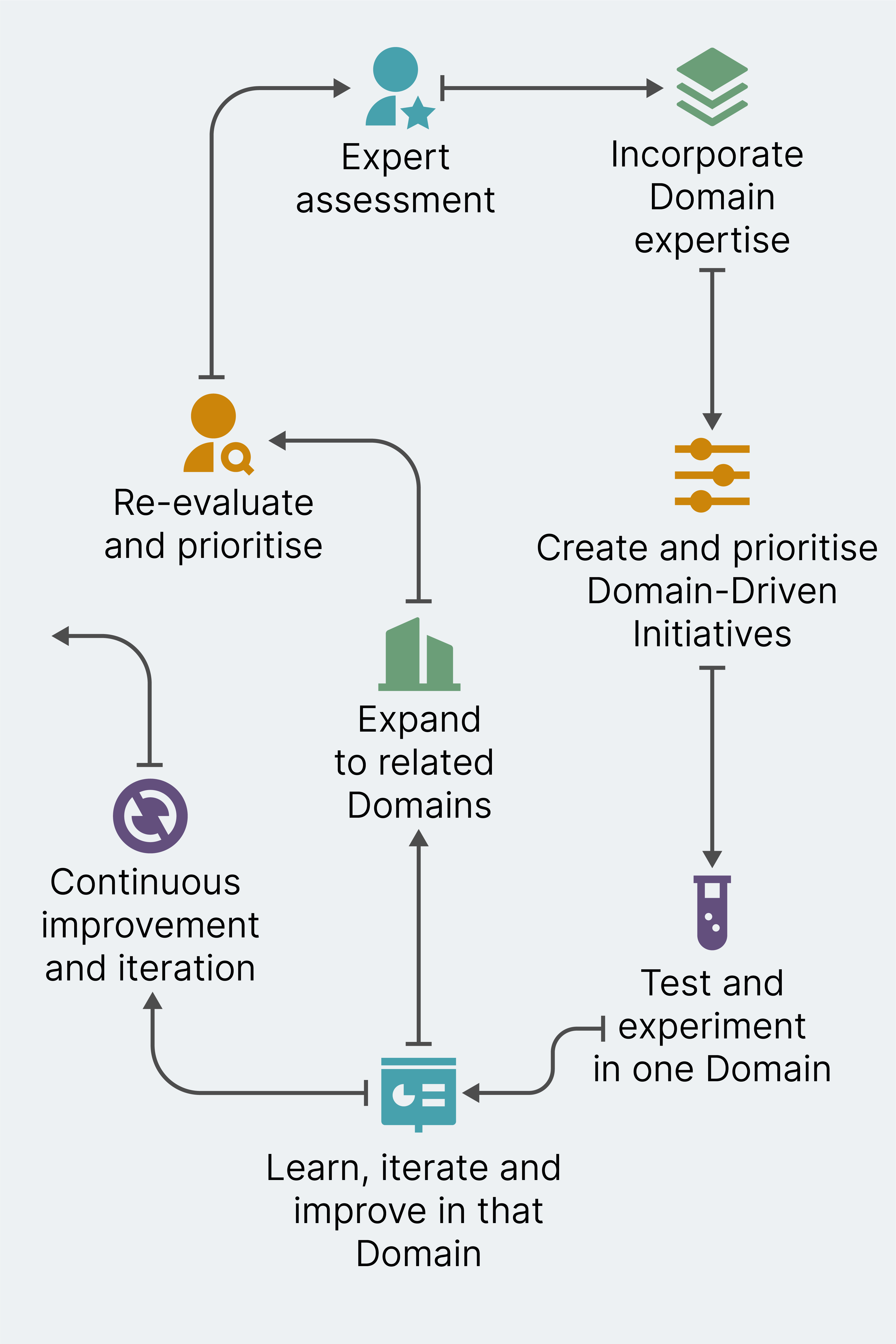

An effective way to begin developing agile privacy initiatives is to launch an expert assessment of the legal landscape and review how your organization currently understands privacy risk. Your legal professionals should be keeping an eye on changing regulatory conditions and how they position your business processes and technology. Understanding how your organization manages risk means a review of what processes, teams and stakeholders are already a part of privacy risk management. This includes people and processes used to identify and evaluate privacy risk — and to determine how to accept, mitigate and communicate that privacy risk.

The expert assessment and the review of the current landscape, both internal and external, will help you not only answer some key questions but also make your responses to them explicit. It should help you determine not only whether you really are an organization built on consumer trust, but also the specific ways in which you are and are not. Similarly, it can help you be specific about your legal obligations in the jurisdictions in which you operate and clearly articulate your attitude to legal and reputational risk.

Once this initial assessment is complete, you’ll want to incorporate domain stakeholders. You’ll need to ask questions like:

- What data is required to operate the business today?

- How is data used across domains and products?

- How do you communicate with your customers about the use of their data? What happens when that use changes?

- What does coordination to implement and track privacy and security standards across teams and products look like?

Make sure you get a lay of the land first. Asking someone who has never implemented a privacy standard or initiative to implement four at once is going to be too much! If you can meet people where they are, you’ll move a lot more quickly. It probably won’t be perfect the first time around, but that’s exactly why we iterate!

The next step is to empower teams by creating domain-specific, action-oriented initiatives. These can range from rolling out new technologies (like privacy technology!) that support privacy deployment, to workshops and training that upskill data owners so they can manage and own privacy risk in an informed way. It’s important that you don’t try to roll out everything across all domains at once. Instead, take an agile approach: choose thin slices (in this instance, people and data products) that are well placed to experiment and would also benefit from additional privacy support.

You can then create a few initiative ideas that will showcase the organization’s privacy goals and work well with domain work that has been planned. To prioritize these initiatives, analyze their likely benefit across the organization and select according to business value, risk reduction and learning. Try it with a smaller team first and gather feedback. The key thing is to experiment and iterate; this is where your privacy experts and your technologists will learn from each other.
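
One lightweight way to make this prioritization explicit is a simple weighted score. The sketch below is only an illustration: the `Initiative` fields, the 1 to 5 scales, the weights and the example initiatives are all hypothetical, and your organization will likely weigh business value, risk reduction and learning differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Initiative:
    """A candidate privacy initiative, scored 1 (low) to 5 (high). Hypothetical scale."""
    name: str
    business_value: int
    risk_reduction: int
    learning: int


# Hypothetical integer weights; tune them with your privacy and domain stakeholders.
WEIGHTS = {"business_value": 2, "risk_reduction": 2, "learning": 1}


def score(initiative: Initiative) -> int:
    """Weighted sum of the three criteria named in the text."""
    return sum(getattr(initiative, field) * weight for field, weight in WEIGHTS.items())


def prioritize(initiatives: list[Initiative]) -> list[Initiative]:
    """Return initiatives ordered from highest to lowest score."""
    return sorted(initiatives, key=score, reverse=True)


# Example backlog (invented for illustration).
backlog = [
    Initiative("Pseudonymize analytics events", 3, 5, 4),
    Initiative("Privacy risk workshop for data owners", 2, 3, 5),
    Initiative("Consent-aware marketing exports", 5, 3, 2),
]

for item in prioritize(backlog):
    print(f"{score(item):>3}  {item.name}")
```

A score like this is a conversation starter rather than a decision: the value lies in making the trade-offs visible so that privacy experts and domain teams can challenge them.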

You now have some initiatives that are ready for a fully federated rollout. If you haven’t worked in this way before, this might feel like a big step, but the results can be very effective. To manage it safely, there are a few questions you need to explore and answer:

- Which domain owners in the initial domain can train and support other data owners?

- Which teams have already been using the new technologies successfully?

- How do teams level each other up and spread knowledge?

Once you’ve answered these, you can begin to develop processes that allow cross-domain and cross-organization information flows. You should also ensure data owners feel supported by having privacy experts play facilitation and advisory roles. It’s important to remember that a federated approach to data does not mean withdrawing support from data owners.

This frees up your privacy experts’ time to restart the cycle. What is the next priority based on the competitive and regulatory landscape and based on business needs right now? What’s in the backlog to roll out and test? It is critical that you keep the build-measure-learn cycle flowing and keep collecting feedback!

Create channels for transparency and accountability

So how do you ensure things are properly operating and there is enough transparency and accountability without centralized management? My question for you: is centralized management actually solving this problem?

Transparency and accountability are first developed on an interpersonal level, not an organizational one. When we create transparent and accountable communication channels as humans or within a team unit, we ensure our colleagues feel empowered and trusted. We allow failures to surface early and make sure people can come to team leaders with problems and ideate or co-create solutions. This communication should be supported and reinforced at all levels of the organization — via incentives and celebration of transparency and accountability. For example, at Thoughtworks we acknowledge Go Wrongs — outlining times when a security or privacy-related incident occurred and sharing the initial issue, the reporting, the new learnings and remediation.

If we support teams to operate in this way, we can then empower them to communicate this way across domains. If we support fearless cross-team communication, we operate more transparently than before and with a stronger sense of accountability. As many experts on human psychology and workplace culture have observed, this kind of culture almost always grows from federated support and rarely comes from the C-level. It must be cultivated at an interpersonal level and rewarded by leadership, rather than cultivated by leadership and “communicated down” to team members.

Leadership plays a strong role in establishing and supporting this culture by setting priorities, properly communicating the role of privacy in the organization, and providing clear decisions on policy and process. If leadership can set clear expectations of transparency and accountability and provide open channels for the federated communication (both between teams and between management levels at the organization), this helps incentivize and expedite the development of trust across federated parties.

The same holds for transparency and accountability on privacy and compliance initiatives and goals. If we warn people, tell them “No” and point out how “wrong” their ways of working are in terms of privacy and compliance, we create a fear-driven communication pattern instead of a fearless one. This is a privacy antipattern!

Instead, we need to create judgment-free communication flows that allow us to spot privacy issues or failures early and often. Leadership helps communicate expectations for compliance by creating easy-to-use principles and frameworks and ensuring those are translated into processes that meet people where they are at — in the workflows that they are already using daily. We empower teams to be a part of clean-up and help correct any missteps, so they learn and grow. When we do this properly, we also create more privacy awareness across the organization.

In a federated governance design, this might mean regional leads who embed regularly with teams and level up team knowledge and understanding. These roaming experts can be pulled in for emergencies or simply to work alongside teams to create and grow privacy competency. They should be there to proactively enable privacy and have the agency to test new ideas and initiatives. With a “Yes, And” mindset, this starts to change the conversation around privacy to one of enablement and proactivity instead of disenfranchisement and reactivity.

Developing a privacy community that fosters open communication (such as privacy champions, a privacy guild or a practitioner community) can create channels for people to share information, upskill one another and share wins and failures. When sharing failures and “Go Wrongs”, ensure you are creating psychological safety by building practices similar to the security practice of “blameless post-mortems”, where you avoid naming *who* did something and instead focus on how processes, procedures and normal human behavior contributed to the error. Only then can people learn from mistakes, because the mistakes are decoupled from blame and shame.

Design and architect systems to support privacy goals

As you are building organizational co-ownership and shared responsibility via federation, you also need to design systems that allow for federated design, development and use. This means pushing your architects to think about data governance in a federated manner, which might be new for them!

For privacy experts at your organization, this means working closely with your information security and technical counterparts. How can we implement the privacy goals set by the organization in our architecture and software? How does our architecture design support privacy first? You may need to spend time exchanging knowledge to get to a place where this conversation can happen more fluidly and in the same language.

If technical leaders at your organization haven’t already heard about Privacy Engineering, it is a great place for them to start learning the current technologies, processes, design and theory around incorporating privacy in a technical environment. It is a growing field of research and practice, with technologies that can support advanced data and machine learning initiatives while still holding to rigorous definitions of privacy. These technologies will be described further in the next article of this series.

If your organization has already embraced microservices or even just moved away from monolithic structures, you’ve likely had to re-design and determine how to support multiple applications across different types of infrastructure. Creating a functioning federated governance system means rethinking your security and data governance in a federated manner — across multiple applications via a distributed infrastructure — in a simple and straightforward way where it can be appropriately monitored.

Here are a few useful patterns for architecting privacy into your systems, which can be implemented as part of Data Mesh’s federated computational governance:

- Identify and outline trust boundaries, where data flows are moving across environments with different properties (such as access rights, actual hardware or networks). Build a zero-trust approach to all systems — ensuring that identity and authorization are set up in a federated manner for resiliency and to more easily manage distributed systems.

- Evaluate data ingress and egress at edges of trust boundaries. Set up monitoring and testing at these points to determine if boundary rules are properly maintained. Test your interfaces regularly via integration and regression testing. If possible, use data loss prevention software or network flow monitoring to establish if a boundary rule has been broken.

- Map data flows and ensure that lineage tooling is present in your data governance or cataloging software. Whenever possible, make the lineage and governance information explicit and keep it close to the data. As Zhamak Dehghani writes in Data Mesh, this means ensuring it doesn’t hide in metadata that no one views or accesses!

- Explicitly call out and architect interfaces with third-party tools and systems where sensitive data resides. Outline the SLAs/SLOs expected for those systems, along with the privacy, security and authentication requirements for interacting with them. Don’t leave sensitive data missing from your architecture, even if these systems are managed separately. In particular, these separate systems can create Shadow IT operations or security issues if access is limited and the data is managed outside the organization. If your architects or privacy champions find this is the case, find ways to surface the relevant parts of that information to a larger audience, with appropriate privacy controls.
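
To make the first three patterns more concrete, here is a minimal sketch of a boundary check in Python. It is an illustration under stated assumptions, not a reference implementation: the zone names, the `Sensitivity` levels, the `BOUNDARY_RULES` table and the `Flow` record are all hypothetical. It shows declared trust boundaries, a deny-by-default (zero-trust) check at the edges, and lineage carried alongside each flow rather than hidden in separate metadata.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Dict, List, Tuple


class Sensitivity(IntEnum):
    """Hypothetical data classification, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    PERSONAL = 2


@dataclass(frozen=True)
class Flow:
    """One observed data flow across zones, with its lineage kept next to it."""
    source: str
    destination: str
    sensitivity: Sensitivity
    lineage: Tuple[str, ...]  # names of upstream datasets


# Hypothetical boundary rules: the highest sensitivity allowed to cross
# from one zone to another. Any crossing not listed here is denied.
BOUNDARY_RULES: Dict[Tuple[str, str], Sensitivity] = {
    ("orders-domain", "analytics-domain"): Sensitivity.INTERNAL,
    ("orders-domain", "third-party-crm"): Sensitivity.PUBLIC,
}


def find_violations(flows: List[Flow]) -> List[Flow]:
    """Return flows that cross an undeclared boundary or exceed its limit."""
    violations = []
    for flow in flows:
        limit = BOUNDARY_RULES.get((flow.source, flow.destination))
        if limit is None or flow.sensitivity > limit:
            violations.append(flow)
    return violations


# Example flows (invented for illustration).
flows = [
    Flow("orders-domain", "analytics-domain", Sensitivity.INTERNAL, ("orders_raw",)),
    Flow("orders-domain", "third-party-crm", Sensitivity.PERSONAL, ("orders_raw", "customers")),
    Flow("orders-domain", "ml-sandbox", Sensitivity.PUBLIC, ("orders_raw",)),
]

for flow in find_violations(flows):
    print(
        f"Boundary rule broken: {flow.source} -> {flow.destination} "
        f"({flow.sensitivity.name}), lineage={flow.lineage}"
    )
```

In practice the flow records would come from whatever monitoring you already run at ingress and egress points, and a check like this could run as part of the regular integration and regression tests on your interfaces.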

Since data is owned by domains, this also means setting those teams up with the appropriate tooling to do their job without violating trust boundaries or privacy principles. In a data mesh world, this likely means having your infrastructure teams regularly consult your privacy and security experts. Together they design self-serve platforms where data owners can enforce and uplift privacy by design and security by default — using the appropriate containers, services, infrastructure and applications maintained by the platform team(s). This platform enablement is the focus of the fourth and final article in the series.

In the next article in this series, we’ll focus exclusively on privacy technology and more aspects of computational governance — which is the ability to analyze governance using data and services. How might we analyze network traffic across our mesh to determine security vulnerabilities or privacy violations? How might we determine data sensitivity in a quantitative manner? And how can we inform humans via quantitative analysis, keeping them involved and providing the required human-in-the-loop oversight?

Stay tuned for more and keep privacy alive and well in your newly federated organization!

Post Note: In this four-part series, we’ll be diving deeper into some details on how to leverage a data mesh architecture to meet privacy and security goals. The first article in the series outlines how a data mesh architecture can support better data privacy controls. The third article will outline how we can develop a federated computational governance model with examples. The final article will elaborate on how the self-serve platform within data mesh can be used to further the privacy and technology goals of the organization.