Disclaimer: The statements and opinions expressed in this article are those of the author(s) and do not necessarily reflect the positions of Thoughtworks.

When I wrote Patterns of Enterprise Application Architecture, I coined what I called the First Law of Distributed Object Design: "don't distribute your objects". In recent months there's been a lot of interest in microservices, which has led a few people to ask whether microservices contravene this law and, if so, why I am in favor of them.

It's important to note that in this first law statement, I use the phrase "distributed objects". This reflects an idea that was rather in vogue in the late '90s and early '00s but has since (rightly) fallen out of favor. The idea of distributed objects is that you could design objects and choose to use these same objects either in-process or remote, where remote might mean another process on the same machine, or on a different machine. Clever middleware, such as DCOM or a CORBA implementation, would handle the in-process/remote distinction, so your system could be written and you could break it up into processes independently of how the application was designed.

My objection to the notion of distributed objects was that although you can encapsulate many things behind object boundaries, you can't encapsulate the remote/in-process distinction. An in-process function call is fast and always succeeds (in that any exceptions are due to the application, not due to the mere fact of making the call). Remote calls, however, are orders of magnitude slower, and there's always a chance that the call will fail due to a failure in the remote process or the connection.

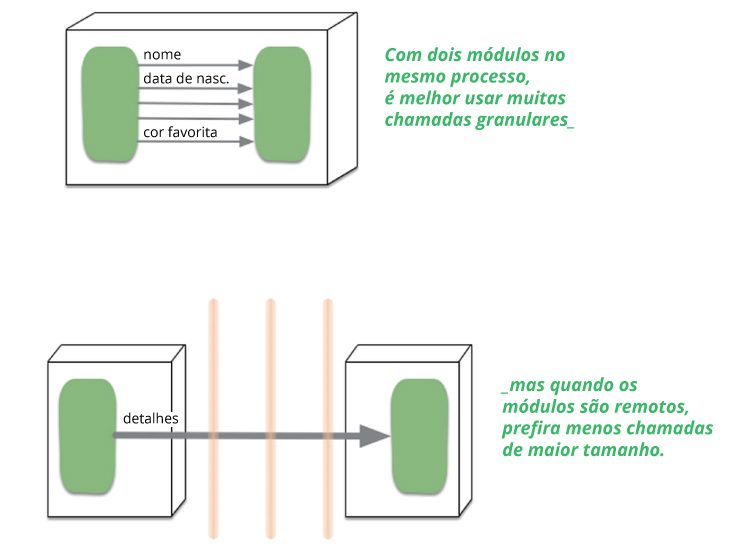

The consequence of this difference is that your guidelines for APIs are different. In-process calls can be fine-grained: if you want 100 product prices and availabilities, you can happily make 100 calls to your product price function and another 100 for the availabilities. But if that function is a remote call, you're usually better off batching all that into a single call that asks for all 100 prices and availabilities in one go. The result is a very different interface to your product object. Consequently you can't take the same class (which is primarily about interface) and use it transparently in an in-process or remote manner.
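
To make the contrast concrete, here is a minimal Python sketch of the two interface styles. Everything in it is hypothetical and invented for illustration: the `CATALOG` data, the `InProcessProducts` and `RemoteProducts` classes, and the `fetch_info` method. The "remote" side only counts simulated round trips rather than touching a network, so treat it as a sketch of the interface shape, not of any particular middleware or service.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProductInfo:
    price: float
    available: bool


# Hypothetical in-memory catalog standing in for the real product data.
CATALOG = {
    f"sku-{i}": ProductInfo(price=10.0 + i, available=(i % 2 == 0))
    for i in range(100)
}


class InProcessProducts:
    """Fine-grained interface: one cheap call per value."""

    def price(self, sku: str) -> float:
        return CATALOG[sku].price

    def is_available(self, sku: str) -> bool:
        return CATALOG[sku].available


class RemoteProducts:
    """Coarse-grained interface: one round trip covers many products."""

    def __init__(self) -> None:
        self.round_trips = 0  # counts simulated network calls

    def fetch_info(self, skus: list[str]) -> dict[str, ProductInfo]:
        # A real client would serialize the request here and would have to
        # cope with timeouts and connection failures (assumption: omitted).
        self.round_trips += 1
        return {sku: CATALOG[sku] for sku in skus}


skus = list(CATALOG)

# In-process: 100 price calls plus 100 availability calls is perfectly fine.
local = InProcessProducts()
local_result = {sku: (local.price(sku), local.is_available(sku)) for sku in skus}

# Remote: ask for all 100 prices and availabilities in a single call.
remote = RemoteProducts()
batch = remote.fetch_info(skus)
```

The two classes answer the same question but expose different methods, which is the point: the batched signature is shaped by the cost and fallibility of the remote call, so the same class can't serve both roles transparently.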

The microservice advocates I've talked to are very aware of this distinction, and I've not heard any of them talk about in-process/remote transparency. So they aren't trying to do what distributed objects were trying to do, and thus don't violate the first law. Instead they advocate coarse-grained interactions with documents over HTTP or lightweight messaging.

So in essence, there is no contradiction between my views on distributed objects and those of microservice advocates. Despite this essential non-conflict, there is another question that is now begging to be asked. Microservices imply small distributed units that communicate over remote connections much more than a monolith would do. Doesn't that contravene the spirit of the first law even if it satisfies the letter of it?

Read more: For the entire article, please go here: martinfowler.com/articles/distributed-objects-microservices.html.
