If you were told to design a system with privacy first, what would you do? Perhaps you’d think about properly collecting user consent and giving users options to opt out of data collection. Maybe you equate privacy with security, and you’d ensure everything is encrypted and data is sent securely with Transport Layer Security (TLS). Or you’d enable personally identifiable information (PII) detection and scrub data as best you can, hoping that you caught all sensitive information.

These are all valid approaches, but they are band-aids on the privacy problem. To properly address privacy holistically, you need to think differently about your data. You have to architect systems that incorporate Privacy by Design. You need to ensure that collection, storage and access respect the privacy standards you are developing, and are aligned with privacy experts at your organization and those where your data is sent or received. As privacy is also a part of your brand and company culture, you need your communication to complement your technological efforts.

The data mesh paradigm allows us to see data in a new way. Instead of slurping data from anyone, anywhere, we are asked to appoint knowledgeable data owners for a given domain. These owners can then decide what data needs to be collected to build their respective data products. Instead of a massive data swamp with toxic leaks of unknown provenance, we have collaborative teams who curate their data and provide useful services. We move from a water-based pipeline approach to a series of interconnected libraries. We can see what books are available, order them from other libraries, and ask an expert librarian if we are unsure how to find what we are looking for.

This approach, similar to the decades of privacy work by librarians, is inherently more private. By federating data and allowing curation by those who know the data best and those who understand how data will be used, we can enable actual privacy by design. By putting experts and domain owners in charge of data collection, storage and use, we let those who know the data best make informed privacy and security choices. We enable them via self-serve platform technology to appropriately turn the knobs and enforce privacy standards at the source, and even during collection. By giving them oversight and enabling more collective power over data, we also empower our users and build trust. This is because data use and data collection are brought together instead of remaining isolated in disparate, undocumented underground sewers, where it’s anyone’s best guess how the data is actually used once it enters the pipes.

This article is the first in a four-part series, where we will look deeper into the relationship between data mesh and privacy. The series will cover:

- How a data mesh architecture can support better data privacy controls.

- How to shift from a centralized governance model to a federated approach.

- How to focus on automation as a cornerstone of your governance strategy.

- How to bake privacy tech into your self-service platform approach.

Data federation over data centralization

Federation allows better service and greater oversight. We don’t look to a central government to ensure our local post office is functioning. Sure, we need coordination across federated units, and we need to understand what shared goals and purposes we are working towards, but when we actually empower individuals and work collectively, we are doing so in smaller, agile units. Data mesh is the data approach to federated, agile and empowered smaller product teams, who can assess how their local data product is functioning and coordinate across related and neighboring units as needed.

Federation is good for privacy because it ensures that data is locally owned and operated. Without a central pot of data that can attract both insider and outsider threats, we can instead create distributed pockets of value that each have their own oversight, use, access and control. We can do away with Shadow IT and credential sharing, because there is no longer one central place to which people need access. We can also start thinking of new paradigms that collect less data and keep data on user devices or other endpoints, as is the case with federated learning and several secure computation protocols. This begins to shift data ownership out of our organization while still allowing us to deliver insights and business value from data artifacts and derivatives. Moving to an architecture where a user can actually own their data and interact with our services in a product-oriented way becomes a very real possibility instead of a faint glimmer of hope in a distant future.
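To make the idea of keeping data on user devices a little more concrete, here is a minimal sketch of one simple secure computation technique, additive secret sharing, used to compute a total without any single party seeing an individual value. It is an illustration only, not a protocol this article prescribes: the `MODULUS`, the three aggregating parties and the `minutes_used` values are all assumptions made for the example, and a real deployment would also need authenticated channels and protection against colluding parties.

```python
import secrets

# Assumed modulus for the example; it must exceed the largest possible total.
MODULUS = 2**61 - 1


def split_into_shares(value, n_parties):
    """Split one device's value into n random-looking shares that sum to it (mod MODULUS)."""
    shares = [secrets.randbelow(MODULUS) for _ in range(n_parties - 1)]
    last_share = (value - sum(shares)) % MODULUS
    return shares + [last_share]


def secure_sum(device_values, n_parties=3):
    """Each party only ever sees one share per device; only the combined total is revealed."""
    party_totals = [0] * n_parties
    for value in device_values:
        for i, share in enumerate(split_into_shares(value, n_parties)):
            party_totals[i] = (party_totals[i] + share) % MODULUS
    # Combining the per-party totals cancels the randomness and yields the true sum.
    return sum(party_totals) % MODULUS


# Hypothetical per-device usage minutes that never leave the device in the clear.
minutes_used = [12, 7, 30, 0, 5]
total = secure_sum(minutes_used)
print(total)
```

The organization learns the aggregate it needs for its data product, while each individual contribution stays hidden from every single aggregating party.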

Trust boundaries

When we set up domains as part of our data mesh, we establish trust boundaries. The trust boundaries that we outline as part of our security architecture operate like semaphores within the mesh: traversing one requires special access, special protocols and technologies, or special protections. Since we have domain data owners at each node in our mesh, we can empower them to make this possible and decide when a firmer barrier should be set. We also enable architects and security analysts to ensure the trust boundaries match our understanding of risk. Compared with a centralized model, this allows us to clearly “see” our boundaries and architect them appropriately. Because these boundaries now exist not only as a diagram in a presentation, but in the actual design of the system, they become explicit; they allow us to more easily protect the secure nodes in the mesh. Data Loss Prevention technology or other network protocols can be used to greater effect, since there are clear boundaries that need to be crossed; this means anomalies can be more easily identified.

This allows us to begin testing our architecture and designs to ensure they are built in the way we expect. This supports what is called federated computational governance, something outlined in the original principles of data mesh. (This will be the primary focus of the third piece in this series.) Federated computational governance asks us to find automated ways to answer the biggest governance questions we have and to allow them to be implemented in a federated manner. When we map trust boundaries, we are able to design a system that provides controls at those boundaries and verify that data flows match our definitions. To support this, organizations can develop cross-organizational governance that is enabled through the testing and verification of the compute infrastructure and running services.
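As a rough illustration of verifying that data flows match our definitions, the sketch below compares observed flows against a declared policy of permitted boundary crossings. Everything in it is hypothetical: the domain names, the `Flow` record, the protection labels and the `ALLOWED_CROSSINGS` table are assumptions for the example, and in practice the observed flows would come from your own lineage or network tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    source: str      # domain producing the data
    target: str      # domain consuming the data
    protection: str  # protection applied while crossing, e.g. "aggregated"


# Hypothetical policy: which boundary crossings are declared, and which
# protections are acceptable for each one.
ALLOWED_CROSSINGS = {
    ("payments", "analytics"): {"aggregated", "pseudonymized"},
    ("support", "analytics"): {"aggregated"},
}


def find_violations(observed_flows):
    """Return flows that cross a trust boundary without a declared, permitted protection."""
    violations = []
    for flow in observed_flows:
        if flow.source == flow.target:
            continue  # stays inside one domain, so no boundary is crossed
        permitted = ALLOWED_CROSSINGS.get((flow.source, flow.target), set())
        if flow.protection not in permitted:
            violations.append(flow)
    return violations


observed = [
    Flow("payments", "analytics", "aggregated"),  # declared and protected
    Flow("payments", "analytics", "raw"),         # declared crossing, wrong protection
    Flow("support", "marketing", "aggregated"),   # undeclared crossing
    Flow("payments", "payments", "raw"),          # internal to the domain
]
violations = find_violations(observed)
for v in violations:
    print(f"violation: {v.source} -> {v.target} ({v.protection})")
```

A check like this could run as an automated test against each domain, so that each team owns its own declarations while the verification logic is shared across the mesh.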

Distributed access control

The data product itself should become the main point of access. The advantage of this is that because the product is designed by domain owners, it will (or should) inevitably have privacy and security constraints. Ask yourself: who do you most want making decisions about data access? The answer should be obvious: those who actually work with the data daily and understand the data population, its nuances and risks.
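One way to picture the data product as the point of access is a small interface where the domain owner declares which fields are released for which purpose. This is a minimal sketch under assumed names: the `DataProduct` class, the `orders` product, its purposes and its fields are all hypothetical and do not correspond to any particular data mesh platform.

```python
from dataclasses import dataclass, field


@dataclass
class DataProduct:
    name: str
    owner: str
    # Purpose -> set of fields the domain owner has approved for that purpose.
    policy: dict = field(default_factory=dict)
    records: list = field(default_factory=list)

    def read(self, purpose):
        """Return only the fields approved for the stated purpose."""
        if purpose not in self.policy:
            raise PermissionError(f"{self.name}: purpose '{purpose}' is not approved")
        allowed = self.policy[purpose]
        return [{k: v for k, v in row.items() if k in allowed} for row in self.records]


# Hypothetical product owned by a hypothetical checkout domain team.
orders = DataProduct(
    name="orders",
    owner="checkout-domain-team",
    policy={"engagement_reporting": {"country", "order_total"}},
    records=[
        {"customer_email": "a@example.com", "country": "DE", "order_total": 42.0},
        {"customer_email": "b@example.com", "country": "FR", "order_total": 17.5},
    ],
)

report_rows = orders.read("engagement_reporting")
print(report_rows)  # email addresses are never released for this purpose

try:
    orders.read("ad_targeting")
except PermissionError as err:
    print(err)
```

The design choice here is that consumers never touch the underlying records; the only path to the data runs through a policy the domain team wrote.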

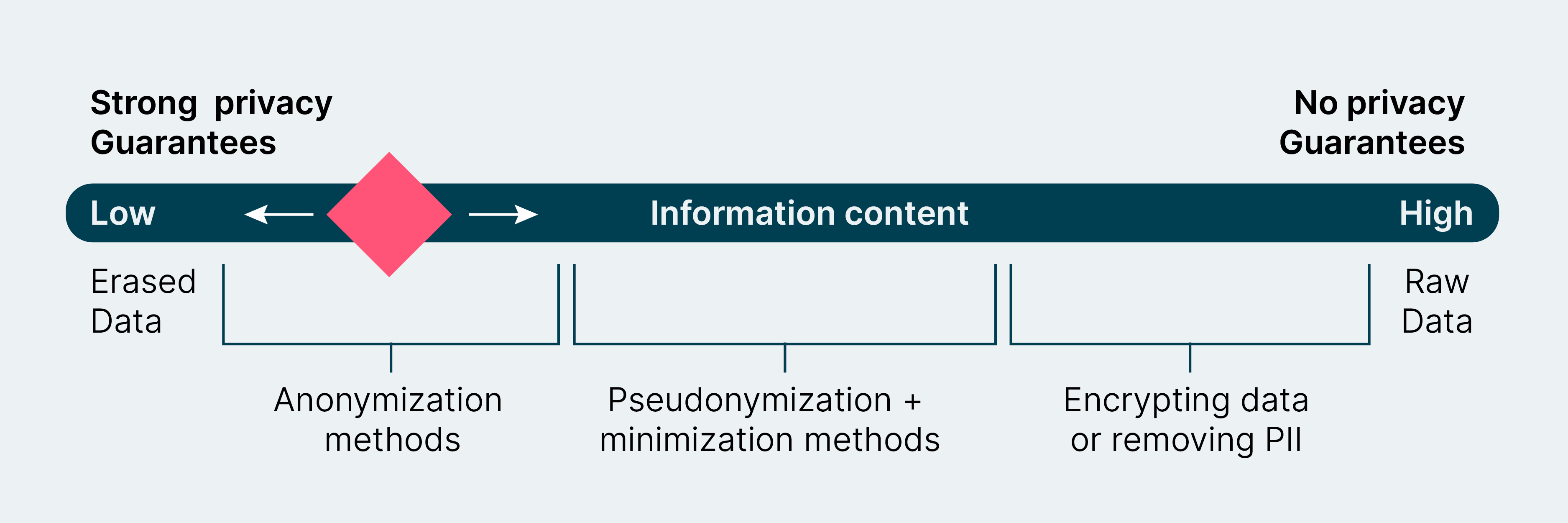

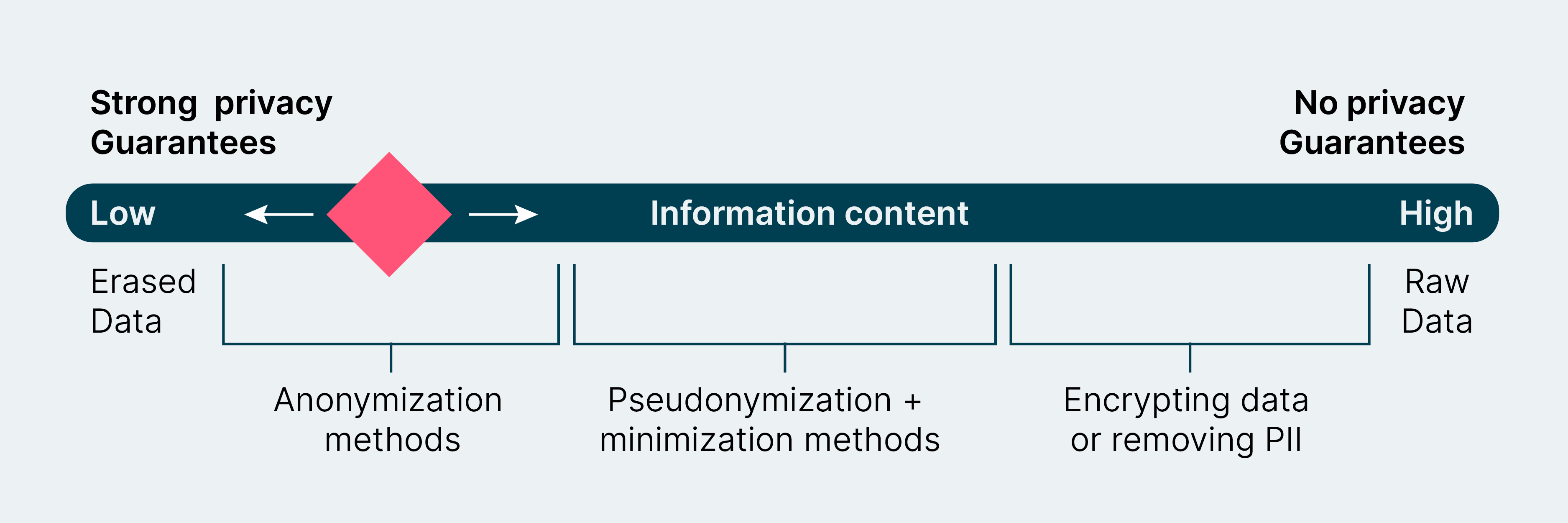

Diagram of privacy vs. utility

In developing access with a product mindset, data owners (i.e. domain owners and data experts on the domain team) can address difficult questions about privacy versus information. These are questions such as:

- What utility do customers require?

- What privacy protection is needed for our users or the persons whose data we collect?

- How can we navigate this continuum between full access and no access, to meet the needs of all parties?

These questions should be answered by those who know the data best. An effective approach is to coordinate the various pockets of expertise — from data scientists and engineers, to product developers, to privacy experts — so they can support a project collaboratively. If you build privacy in at this foundational level, you will have the added benefit of growing privacy expertise and champions across the organization.

Meeting the needs of external users and internal stakeholders

If you do this, it becomes much easier to build and design data products that meet the needs of users, whether they’re consumers or internal stakeholders. On one side, for instance, you might have users who want to fine-tune their privacy settings, or who want their usage data completely anonymized. On the other side, you might have internal analytics teams who need to measure engagement metrics. Balancing the needs of both these groups can be challenging, but privacy technologies like differential privacy and federated data analytics allow us to strike that balance. With them, we can better manage the trade-off between completely tracked insights on user behavior and giving our analysts zero insight on user behavior. (A deeper dive into the privacy technology possibilities will be outlined in the fourth part of this series.)
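To give a flavor of how differential privacy sits between those two extremes, here is a minimal sketch of the Laplace mechanism applied to an engagement count. The function names, the `engaged_today` data and the choice of `epsilon` are assumptions for illustration. A naive floating-point sampler like this one is not suitable for production, where a vetted differential privacy library would be the safer choice.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponentials with mean `scale`."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(flags, epsilon, rng=None):
    """Return a noisy count of True values.

    Adding or removing one person changes a count by at most 1 (sensitivity 1),
    so Laplace noise with scale 1/epsilon gives epsilon-differential privacy.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    if rng is None:
        rng = random.Random()
    true_count = sum(1 for flag in flags if flag)
    return true_count + laplace_noise(1.0 / epsilon, rng)


# Hypothetical data: whether each user was active today.
engaged_today = [True, False, True, True, False, False, True]

# Smaller epsilon means more noise and stronger privacy; larger means more utility.
print(private_count(engaged_today, epsilon=0.5))
print(private_count(engaged_today, epsilon=5.0))
```

The `epsilon` parameter is the knob on the continuum: analysts still get a usable engagement figure, while any single user's presence in the data is masked by the noise.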

When you properly define privacy expectations and informational data flows, you can use that information to build increased auditing and transparency for all parties. Your privacy experts and legal team will thank you when they work on new privacy policies or principles for the organization, because they can then actually chart data usage across the organization by following data flows in and out of data products as well as the privacy and security measures for each data flow. Governing these flows will also become easier, since lineage is built into the mesh itself. This level of clarity and visibility can also shift what data each domain collects, as they learn what data product endpoints or services are most used and which have barely any traction. Data minimization can happen as a normal part of data product design when it is clear that parts of the data are underused or simply not helpful anymore. Monitoring usage, access, privacy and regulatory changes becomes a daily part of data domain work that neatly fits into the data product lifecycle.
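As a small sketch of how usage monitoring could feed data minimization, the function below flags fields that appear in only a small share of logged reads. The field names, the shape of the access log and the 5% threshold are assumptions for the example; a flagged field is a prompt for the domain team to review, not an automatic deletion rule.

```python
from collections import Counter


def underused_fields(schema_fields, access_log, min_share=0.05):
    """Return fields requested in fewer than `min_share` of logged reads."""
    total_reads = len(access_log)
    if total_reads == 0:
        return sorted(schema_fields)  # nothing is used yet, so review everything
    # Count each field at most once per read request.
    counts = Counter(f for request in access_log for f in set(request))
    return sorted(f for f in schema_fields if counts[f] / total_reads < min_share)


# Hypothetical schema and access log for one data product.
schema = ["country", "order_total", "birth_date", "device_id"]
access_log = (
    [["country", "order_total"]] * 30
    + [["country", "device_id"]] * 9
    + [["birth_date"]] * 1
)

candidates = underused_fields(schema, access_log)
print(candidates)
```

In this made-up log, `birth_date` is read in 1 of 40 requests, which falls below the threshold and marks it as a candidate to stop collecting.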

Keep data close to knowledge

There is one key lesson here: keep data close to knowledge. By doing so, you will not only shift how data products are built (to your advantage, by the way!), you will also build trust and data competency. If you’ve ever wondered why your AI initiatives or data initiatives are failing, look closely at who owns data in your organization. If the responsibility for data is unclear, the project will lack sufficient accountability to make it successful. This is one of the core promises of data mesh, and, more broadly, of thinking through the governance and privacy of data in a holistic manner.

When we empower colleagues to deepen their knowledge and fully design and own their services, we bring out the best in people. When we give data owners responsibility for the data they care for, we are supporting privacy by design. There is ultimately no other way; there is no such thing as top-down privacy by design (we’ve been trying that for nearly 30 years and so far it’s not working, so maybe now it’s time for something different).

If you are a large organization struggling to develop data initiatives in a privacy-first way, you need to strategically rethink how you approach both data and privacy. By moving to a mesh, you are reorganizing power and knowledge. It can be scary and new, but ultimately this distribution of power will help your best and brightest shine and remove cultural and technical roadblocks so your organization’s approach to privacy and internal accountability can grow. By building privacy and security principles directly into your architecture and software development, you will not only ensure they are upheld, you will also ensure your developers and data engineers learn by example.

Delegate privacy with a mesh and see the benefits of uplifting your librarians.

Post Note: In this four-part series, we’ll be diving deeper into some details on how to leverage a data mesh architecture to meet privacy and security goals. The second article in the series will outline how to move to a federated decision-making model for your data governance. The third article will outline how we can develop a federated computational governance model, with examples. The final article will elaborate on how the self-serve platform within data mesh can be used to further the privacy and technology goals of the organization.